Anthropic Safety Interview Question Bank & Guideline in 2026

I’m a PhD in Computer Science (AI/ML) at UCL. Last December, I applied for the Research Engineer position at Anthropic’s London office. While I had heard about the high difficulty level of their interviews for this cutting-edge AI company, I was still stunned by the intensity and high standards of their process.

What stood out to me is that, beyond strong technical skills, AI Safety is a non-negotiable topic at Anthropic and lies at the core of their ethical framework. It’s not enough to just know the tech—you also need to deeply engage with ethical considerations and alignment challenges. Because of this, I thought it would be worthwhile to share some insights specific to this topic in their interviews.

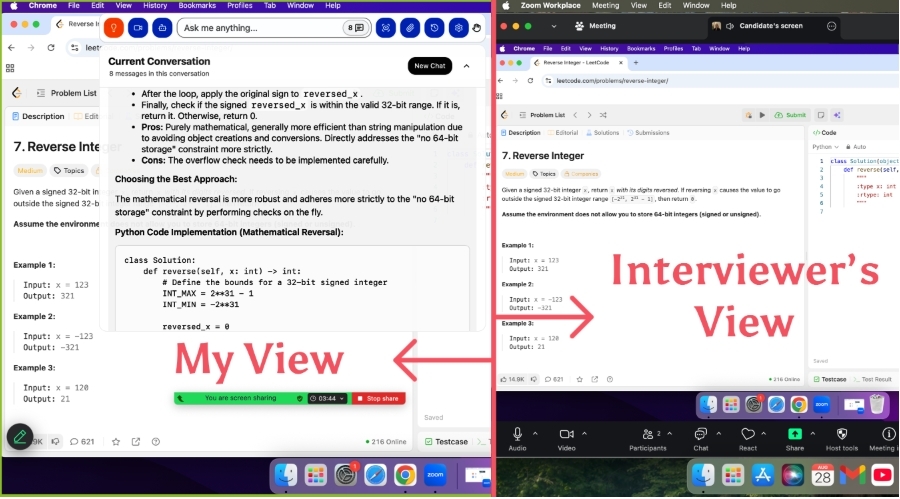

Imagine having a real-time, undetectable AI Interview assistant during screen interviews or online technical tests—keeping you calm and focused throughout. In a time when job opportunities are harder to come by and companies have higher expectations for interview preparation, investing in an AI tool that boosts your efficiency might be one of the smartest decisions you can make!

Anthropic's Safety Philosophy—Responsible Scaling

In 2026, technical skills alone are no longer sufficient to secure a role—candidates perceived as a "safety risk" are often eliminated outright. This is especially true for companies like Anthropic and OpenAI, where interviews focus heavily on thoughtful, nuanced perspectives. Interviewers want to see detailed and responsible thinking—not dismissiveness or an attitude of surrender in the face of technical flaws, but rather an awareness of "Responsible Scaling". This mindset goes beyond technical fixes and requires candidates to weigh the risks and benefits of scaling technology, while understanding its broader implications for society, ethics, and long-term development.

For instance, when discussing the deployment of a new large-scale language model, an interviewer might ask:

"If we discover that this model can generate misleading content in certain scenarios, how would you handle it?"

Dismissive Response: "It's not a major issue; we can fix it later with some simple rules."

This answer demonstrates a lack of depth in understanding the risks and fails to recognize how misleading content could severely undermine societal trust and the information ecosystem.Overly Cautious Response: "If there's a chance of generating misleading content, we shouldn’t deploy the model at all."

While this response shows risk awareness, it suggests an inability to balance risks and benefits, appearing overly conservative and failing to advance practical deployment.Responsible Scaling Response: "First, we’d need to evaluate the frequency and impact of misleading content, then design solutions such as integrating stronger filtering mechanisms and conducting rigorous red teaming before deployment. Additionally, we could define clear usage boundaries and educate users to ensure they understand the model’s limitations."

This approach illustrates a thoughtful risk analysis while demonstrating a pragmatic attitude toward problem-solving.

Key topics in these interviews include Reinforcement Learning from Human Feedback (RLHF), Constitutional AI (especially relevant for Anthropic), Red Teaming, Alignment, Adversarial Robustness, Fairness, and Privacy. Interviewers assess whether candidates can analyze these issues comprehensively from both technical and ethical perspectives.

Behavioral flags can also disqualify candidates. For example:

“Lone Wolf” Tendencies: Refusing to collaborate and believing they can solve everything independently.

Overconfidence: In a field as fast-moving as AI, no one has all the answers, but excessive self-assurance can come across as naïve.

Misaligned Interests: Displaying a purely financial motivation rather than genuine alignment with the company’s mission. Anthropic and OpenAI are driven by the goal of advancing safe AI development, and candidates must clearly demonstrate passion and commitment to that mission.

Ultimately, candidates need to showcase not only their technical expertise but also a deep understanding of how scaling technology impacts society and safety. They must demonstrate responsibility, critical thinking, and problem-solving skills when tackling complex challenges. This "Responsible Scaling" mindset is a central criterion for talent selection at these companies.

AI Safety Interview Question Bank of Anthropic

Technical Interview AI Safety Questions

Anthropic places a strong emphasis on thorough candidate background checks during their interview process—a unique practice that highlights their commitment to social responsibility and reputation.

Interviewers typically frame their questions around topics like AI privacy, alignment challenges, the societal impact of technology, and the long-term risks of innovation, often referencing the candidate's past research or internship experiences. During my PhD, my research focused on AI alignment and interpretability, including work on Constitutional AI, Reinforcement Learning from Human Feedback (RLHF), and explainability techniques. During my master’s program, I completed two internships: one at Hugging Face, where I worked on alignment techniques for large language models, and another at DeepMind, where I evaluated the safety of large-scale models. Below are examples of real and hypothetical questions from the interview process, along with my responses:

Question 1 (Real):

In your PhD research, you mentioned applying differential privacy (DP) frameworks within RLHF. Could you explain how you ensured that privacy techniques didn’t compromise the model’s alignment performance?

My Answer:

Differential privacy often introduces random noise to protect user data, which can impact alignment performance. To address this, I devised a dynamic privacy budget allocation method that adjusts noise intensity based on the sensitivity of the data. This approach ensures that more sensitive data receives stronger protection while minimizing noise interference in alignment-related tasks. For example, our experiments showed that grouping user preference data and applying tailored noise levels effectively reduced the adverse effects of noise on RLHF reward model training.

Question 2 (Hypothetical):

While protecting user privacy, how would you prevent new technologies (like differential privacy) from being exploited to make it harder to track model misuse, such as generating harmful content?

Possible Answer:

The misuse of differential privacy could indeed obscure malicious activities. To mitigate this, I believe in designing multi-layered systems. On one hand, the application of differential privacy should be tightly scoped—restricted to protecting specific sensitive data. On the other hand, red-teaming exercises should be employed to proactively uncover potential misuse scenarios. For instance, in my PhD research, I proposed logging the full execution path of the preference reward function during model generation to trace behavioral patterns, even under privacy constraints. This provides a way to balance privacy protection with accountability in preventing misuse.

Question 3 (Real):

In your PhD research, you focused on RLHF and Constitutional AI. Is it possible for a model to superficially align with human preferences while exhibiting underlying unsafe behaviors? How would you address this issue?

My Answer:

Yes, models can optimize reward functions to appear aligned with human preferences while masking unsafe behaviors. This often stems from flaws in reward function design. To address this, I developed a multi-stage evaluation approach: first, using red-teaming exercises to proactively identify potential failure points in the alignment process; second, implementing behavioral auditing mechanisms to analyze the model’s outputs and introduce context-based constraints. This ensures that the model’s behavior not only aligns with the reward function but also adheres to broader safety and ethical standards.

Question 4 (Hypothetical):

Constitutional AI relies on rule-based guidance for model behavior. If conflicts arise between rules (e.g., ethical principles versus user preferences), how would you handle them?

Possible Answer:

I believe conflicting rules require a prioritized hierarchy. For instance, in high-risk scenarios, ethical principles should take precedence over user preferences. Additionally, I propose incorporating a dynamic adjustment mechanism into the Constitutional AI framework. This mechanism would log conflict scenarios and optimize rule prioritization in real time. Furthermore, gathering extensive user feedback can help identify the sources of conflicts and iteratively refine rule design.

Question 5 (Real):

In your paper, you mentioned protecting user data privacy, but privacy protection could reduce transparency in generated content. How would you balance privacy and transparency?

My Answer:

Balancing privacy and transparency requires a multi-layered approach. On one hand, differential privacy ensures sensitive data remains secure. On the other hand, designing content-generation logging systems allows users to trace the model’s behavior without exposing sensitive data. Specifically, in my research, I proposed an encrypted auditing tool that logs the model’s decision paths in a privacy-preserving environment while avoiding sensitive information leaks.

Question 6 (Hypothetical):

When deploying generative AI, if the model produces harmful or unethical content, how should responsibility be divided, and what preventive measures would you take?

Possible Answer:

Responsibility should be distributed across multiple levels. The development team bears technical responsibility for ensuring alignment and safety testing during training. Deployers are accountable for operational monitoring to detect issues promptly. User education also plays a role, helping users understand the model's limitations. Preventive measures include strengthening red-teaming processes, refining reward function design, and implementing real-time monitoring systems to detect harmful outputs.

Question 7 (Real):

What do you see as the greatest future safety risk from generative AI? How could your research address these risks?

Possible Answer:

I believe the greatest future risk is unpredictability—models behaving in unforeseen ways in complex environments. For example, generative AI could learn unsafe preferences through user interactions. My research offers solutions by introducing dynamic privacy frameworks to limit sensitive information learning during interactions and integrating behavioral auditing mechanisms to monitor and adapt the model’s behavior in real time, ensuring it stays within safe boundaries.

Question 8 (Hypothetical):

What do you see as the limitations of RLHF for AI safety in the future? Are there other techniques that could complement RLHF to overcome these limitations?

Possible Answer:

RLHF’s main limitation is its reliance on human feedback, which can be biased or misleading. I believe it can be complemented by Constitutional AI, which introduces predefined rule-based constraints to compensate for gaps in user feedback. Additionally, exploring multimodal alignment methods—combining visual, linguistic, and behavioral data—could enhance the model’s understanding of human intent while reducing biases from single-modal feedback.

Behavioral Interview AI Safety Questions

If the background check evaluates "who you've been in the past" in terms of your AI safety values, the behavioral interview focuses more on "who you could become in the future." Similarly, here are some real questions asked by interviewers, as well as some hypothetical ones I’ve reconstructed through reflection and discussions with others:

Question 1 (Real):

Imagine that in a future project, you lead a team developing a generative AI model for a client in the healthcare field. The model needs to process large amounts of patient data, with strict privacy requirements. At the same time, the client expects diagnostic-level performance with high accuracy. If privacy measures reduce model performance, how would you balance these trade-offs and move the project forward?

My Answer:

In this scenario, I would start by assessing the gap between the specific privacy requirements and the performance targets. I’d lead the team in iterative experiments to find a technically feasible trade-off between privacy protection and performance optimization. I’d also encourage the client to clarify their priorities between privacy and accuracy, and propose using a dynamic privacy budget mechanism to adjust noise levels—protecting sensitive data while maximizing model performance. Additionally, I’d foster transparent communication with the client, presenting the long-term value of strong privacy protections and the trade-offs involved in achieving high performance, ultimately gaining their support for the proposed approach.

Question 2 (Hypothetical):

Suppose you’re leading a Constitutional AI project and need to define constraint rules to guide the model's behavior. Within the team, there’s a disagreement: some members prioritize implementing highly ethical rules, while others worry that these rules might negatively impact user experience. How would you resolve these differences and ensure the project progresses?

Possible Answer:

When faced with team disagreements, I would organize a priority discussion session to identify each side’s core concerns. First, I’d gather user research and experimental data to evaluate how different rules might concretely impact user experience, ensuring that decisions are data-driven. Second, I’d propose a layered constraint framework: making highly ethical rules “core constraints” to ensure the AI system's safety, while treating secondary rules that might affect user experience as adjustable, guided by ongoing user feedback. Additionally, I’d align the team around the company’s mission and values, emphasizing the importance of ethical priorities, and steer the conversation toward a collaborative solution.

Question 3 (Real):

Suppose you’re collaborating with the company’s ethics team to design a differential privacy framework, and they propose strict guidelines that could slow down development. For example, they might require additional privacy review processes. As the technical team lead, how would you negotiate with the ethics team to ensure privacy reviews are upheld without jeopardizing project timelines?

My Answer:

I would organize a collaborative meeting with both the ethics and technical teams. First, I’d emphasize the critical importance of privacy reviews and the potential long-term risks of bypassing such processes. To maintain progress, I’d propose solutions like parallelizing privacy reviews and development tasks to ensure they advance simultaneously. I’d also suggest leveraging automated privacy review tools or streamlining the review process to minimize timeline impacts. By setting clear milestones and priorities, I’d help both teams balance the need for thorough privacy reviews with the project’s timeline requirements.

Question 4 (Hypothetical):

Suppose you’re leading a generative AI project at the company, and your team consists of members from diverse backgrounds—some focus on technical aspects like algorithm optimization, while others prioritize social and ethical concerns, such as preventing harmful content. How would you ensure effective communication and alignment toward a common goal?

Possible Answer:

I’d start by clearly defining the project’s overall objectives, positioning technical optimization and social ethics as complementary tasks. For example, I’d highlight that preventing harmful content is integral to technical optimization since unsafe behaviors can directly impact the model’s long-term performance and societal acceptance. I’d encourage cross-disciplinary learning by having technical members explain the logic behind algorithm improvements, while ethical members could share case studies of social safety issues to build mutual understanding. Establishing regular communication mechanisms, like biweekly discussions, would help the team address points of conflict and align on shared goals.

Question 5 (Real):

Imagine you’re managing a large-scale generative AI project with millions of users, and you discover that the model may generate unsafe content (e.g., harmful or misleading information) under edge cases. Considering the project’s scale and long-term impact, how would you design mechanisms to monitor and mitigate these risks?

My Answer:

I’d begin by implementing a real-time monitoring system to dynamically evaluate generated content and detect unsafe behaviors under edge cases. Additionally, I’d recommend introducing a red-teaming framework to simulate extreme scenarios and uncover potential vulnerabilities. To further minimize risks, I’d establish a continuous feedback loop to collect user interaction data and regularly update model rules and behavioral constraints. On a longer-term scale, I’d propose creating a transparent auditing platform that makes the model’s decision-making process accessible to external reviewers, enabling collaborative oversight to reduce risks further.

Question 6 (Hypothetical):

Suppose the company plans to scale a generative AI model for multilingual use, and you realize that cross-cultural contexts may lead the model to generate content that conflicts with local ethical norms in certain languages. As the project lead, how would you design a strategy to ensure the system’s safety and ethical compliance across languages?

Possible Answer:

I would advocate for developing a “cross-cultural testing framework” to thoroughly assess the model’s outputs in different languages and identify potential context-specific issues. For example, I’d suggest involving localized red-team experts to analyze ethical concerns unique to specific languages and cultures. During model training, I’d push for the inclusion of multilingual ethical constraints, collaborating with linguists and sociologists to ensure rules are both applicable and comprehensive. For long-term deployment, I’d recommend implementing a feedback mechanism to continuously collect user input to refine and update constraints, ensuring the system evolves to meet the ethical demands of diverse linguistic environments.

Guideline of Anthropic AI Safety Interview Prep

Learning from Anthropic's Official Blogs

Their official blog and research papers are goldmines of information. Be prepared for questions about Claude’s architecture and training optimization strategies, as these often come up in interviews.

Learning from Other AI Companies

When preparing for Anthropic's AI Safety interview, looking into AI Safety practices at other companies can be a very effective approach. For example, prior to my interview, I researched how OpenAI applied a combination of supervised learning and Reinforcement Learning from Human Feedback (RLHF) in their ChatGPT model to make outputs more aligned with user intent while minimizing harmful or unsafe content.

I analyzed OpenAI’s methodologies through their official publications and domain experts’ research blogs, focusing on how they use RLHF and rule-based design to align model behavior (discussing RLHF’s strengths and weaknesses, and how the approach could be adapted or extended). You could also explore potential improvements, such as designing a framework that integrates OpenAI's RLHF approach with Anthropic’s Constitutional AI. For instance, adding a dynamic feedback component to rule-based guidance would enable the model to continuously learn and refine its behavior for better alignment and safety.

Cultural and Geographic Perspectives

In addition to universal technical and ethical standards, I highly recommend incorporating regional cultural and geographical considerations into discussions about AI ethics and safety. Doing so demonstrates your awareness of inclusivity, diversity, and global perspectives in technology.

Different regions have distinct cultural values, legal frameworks, and societal expectations, all of which influence the design of AI ethics and safety protocols. For example:

North American Market (especially the U.S.): There’s a strong emphasis on individual freedom, privacy protection, and technological transparency (e.g., the California Consumer Privacy Act or CCPA).

Asian Market: Asia is highly culturally diverse, with varying languages (Japanese, Mandarin, Vietnamese, Thai, etc.), religious beliefs (Buddhism, Shinto, Hinduism, etc.), and societal values. Countries like China and South Korea may prioritize technology’s role in public welfare and social harmony.

European Market: Europe is renowned for its stringent privacy regulations (e.g., General Data Protection Regulation or GDPR) and its focus on ethics in technology. Transparency, explainability, and fairness are particularly critical for AI systems in this region.

In interviews, you can showcase your understanding of localized ethical considerations by exploring how to integrate "layered rule mechanisms" into existing models—embedding region-specific ethical guidelines to ensure the system’s cultural sensitivity across different markets. For instance, you could propose technical approaches to evaluate AI fairness in multilingual environments, such as creating a test dataset that includes various languages and dialects to ensure the model avoids discriminatory outputs.

Additionally, you might discuss strategies for balancing global frameworks with localized needs, such as emphasizing modular technical architectures that uphold universal rules while enabling the incorporation of region-specific adjustments or behaviors for particular markets. This approach highlights your ability to navigate the complexities of deploying AI in a globally diverse landscape.

FAQ

What technical skills should I focus on?

I focus on machine learning fundamentals, model alignment, interpretability, and robustness testing. I practice coding challenges that reflect real-world safety issues. I review system design concepts and learn how to monitor deployed models.

How can I show my commitment to AI safety?

I share stories from my projects where I prioritized safety. I explain how I handled ethical dilemmas and learned from mistakes. I connect my values to Anthropic’s mission in every answer.

What resources helped me the most?

I use Anthropic’s blog, “Concrete Problems in AI Safety,” and the AI Safety Fundamentals course. I join Discord servers and Slack channels for practice sessions. I connect with engineers on LinkedIn and attend local meetups.

How do I handle questions about knowledge gaps?

If I don’t know something, I admit it. I explain how I would research the answer and improve my skills. I show my willingness to learn and adapt. Anthropic values honesty and growth.

What’s the best way to structure my answers?

I use frameworks like STAR or CAR. I break down my response into clear steps. I state the problem, describe my actions, and share the outcome. This keeps my answers organized and easy to follow.

See Also

My Anthropic Research Engineer Interview Process in 2026

My Anthropic London Interview Process Guideline in 2026

Anthropic Coding Interview: My 2026 Question Bank Collection

How I Practiced Anthropic Codesignal and Passed the Interview