My Azure Databricks Interview in 2025: Real Questions I faced

I just finished my azure databricks interview in 2025, so I know exactly how it feels to face those tough questions. The process puts a big spotlight on Databricks architecture, clusters, notebooks, and how everything connects with Azure. Interviewers love to ask about real-world problems—think aggregations, window functions, data modeling, and performance optimization. They also dive into data quality and security.

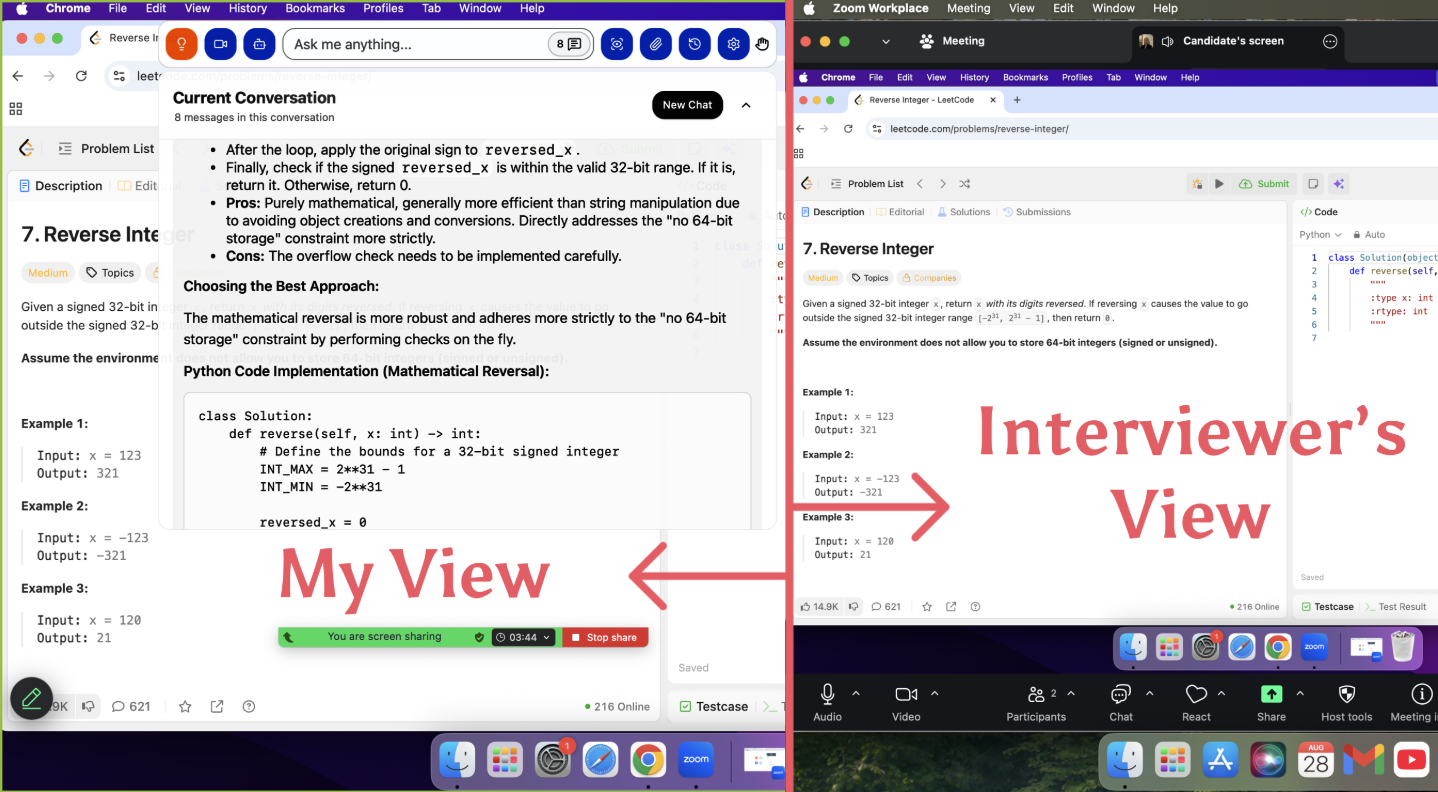

I’m incredibly grateful to Linkjob.ai for their support in passing my interview. As a thank you, I’m posting my OA questions and question bank here. Utilizing an undetectable AI interview copilot truly provides a game-changing edge during the process.

Real questions and practical tips helped me prepare, so I want to pass that on to you.

Real Interview Questions For Azure Databricks Interview

Databricks Basics

I start my prep with the basics. Interviewers want to see if I understand what Azure Databricks is and how it fits into the Azure ecosystem. Here are some questions I faced, along with quick answers:

Question | Answer |

|---|---|

What is Azure Databricks? | Azure Databricks is a cloud-based analytics platform built on Apache Spark for big data and AI workloads. |

What are the key components of Azure Databricks? | The main components are Workspace, Clusters, and Jobs. |

How is Azure Databricks integrated with Azure services? | It integrates with Azure Data Lake, Azure SQL Database, and Azure Synapse Analytics. |

What programming languages does Azure Databricks support? | It supports Python, R, Scala, Java, and SQL. |

What are the benefits of using Azure Databricks? | Benefits include scalability, fast processing, and real-time data insights. |

Tip: I can explain these basics in my own words. It helps me stay confident during the azure databricks interview.

Notebooks and Clusters

The next set of questions usually focuses on how I use notebooks and clusters. Interviewers want to know if I can set up and manage my workspace efficiently.

Question Number | Question |

|---|---|

4 | When referring to Azure Databricks, what exactly does it mean to "auto-scale" a cluster of nodes? |

9 | Which of these two, a Databricks instance or a cluster, is the superior option? |

10 | What is meant by the term "management plane" when referring to Azure Databricks? |

12 | What is meant by the term "data plane" when referring to Azure Databricks? |

I review how auto-scaling works and the difference between the management plane and data plane. These topics come up a lot.

Data Engineering

Data engineering questions test my ability to build and manage data pipelines. I have get asked about ETL processes, data ingestion, and how I handle schema changes.

# Function to check if an IP is within a CIDR range

function matches(ip, cidr):

network_prefix, mask_length = parse(cidr)

# Convert IP to 32-bit integer

ip_int = ip_to_int(ip)

network_int = ip_to_int(network_prefix)

# Create a bitmask (e.g., /24 becomes 24 ones followed by 8 zeros)

mask = (0xFFFFFFFF << (32 - mask_length)) & 0xFFFFFFFF

# Check if the network portions are identical

return (ip_int & mask) == (network_int & mask)

# Main Firewall Logic

function evaluate_firewall(ip_address, rules):

for rule in rules:

if matches(ip_address, rule.cidr):

return rule.action # "ALLOW" or "DENY" (First match wins)

return "DENY" # Default safety policy

Linkjob.ai worked really well during my interview and it's also undetectable. I think the structure of the answers was great because it provided a logic breakdown first, followed by the complete code that was ready to use.

Note: I practice explaining my pipeline designs with simple diagrams or step-by-step lists. It helps me communicate my ideas clearly.

Delta Lake and Governance

Delta Lake and data governance are hot topics in every azure databricks interview. I see questions about data reliability, access control, and cost optimization.

How do you use Azure Purview for data lineage and classification?

What is the best way to implement RBAC and Managed Identities for access control?

How do you optimize costs using Delta Lake for incremental loads?

What tools do you use to monitor and track usage in Databricks?

I also get technical questions about Delta Lake’s architecture:

How does Delta Lake handle concurrent writes?

What is optimistic concurrency control?

How does Delta Lake provide consistent snapshots without using locks?

Tip: I mention partitioning, lifecycle policies for cold data, and using Azure Monitor for tracking.

PySpark and SQL

I expect hands-on coding questions in PySpark and SQL. Interviewers want to see if I can solve real problems using code.

Type of Question | Example |

|---|---|

Coding/Scenario-Based Questions | 1. Create a new column risk_category based on patient records. |

2. Rename subsequent Assigned activities and count reassignments for each user. | |

3. Return IDs of dates where the temperature was higher than the previous day. | |

Conceptual Questions | 1. Explain Spark Architecture. |

2. Difference between Repartition vs Coalesce. | |

3. Explain Job, Stage, Task in Spark execution. | |

4. What is in-memory computation in Spark? | |

5. How would you convert an existing Parquet dataset into Delta format in Databricks? |

I practice writing PySpark code in a notebook before the interview. Here’s a quick example:

from pyspark.sql.functions import col, lag

from pyspark.sql.window import Window

windowSpec = Window.orderBy("date")

df = df.withColumn("prev_temp", lag("temperature").over(windowSpec))

df.filter(col("temperature") > col("prev_temp")).select("id").show()

Optimization Techniques

Optimization always comes up in technical rounds. I get questions about how I improve query performance and manage resources.

I use rule-based and cost-based optimization to speed up queries.

I transform logical plans into optimized physical plans.

I cache intermediate results and use Parquet for storage.

I tune Spark configurations for each workload.

I use range join optimization and adaptive query execution.

Tip: I mention caching and using the right file formats. These are easy wins for performance.

GenAI and ML Topics

Recently, I’ve seen more questions about Generative AI and machine learning in the azure databricks interview. Interviewers want to know if I can build and scale AI solutions.

How do you develop and implement Large Language Model (LLM) solutions on customer data?

Can you explain Retrieval-Augmented Generation (RAG) architectures?

What tools have you used for building GenAI applications (like HuggingFace, Langchain, or OpenAI)?

How do you apply MLOps practices to productionize data science workloads?

I share examples from my own projects, even if they’re small.

Scenario-Based Examples

Scenario-based questions test how I solve real-world problems. Here are some examples I’ve faced:

Scenario Description | Example Question |

|---|---|

Optimizing ingestion of large JSON files (5GB+) into Databricks | How do you optimize ingestion of large JSON files (5GB+) into Databricks? |

Debugging a failed pipeline writing to a Delta table | A pipeline failed overnight while writing to a Delta table. How would you debug it and ensure data recovery? |

Designing a scalable Lakehouse using Databricks | How do you design a scalable Lakehouse using Databricks? |

Structuring Bronze, Silver, and Gold layers for an enterprise-grade data platform | You’re building an enterprise-grade data platform. How would you structure Bronze, Silver, and Gold layers? |

Note: I walk through my thought process step by step. I explain what I would check first, how I’d debug, and how I’d prevent the issue in the future.

I’ve learned that practicing these questions and reviewing my own project experiences gives me a big advantage in the azure databricks interview. If you focus on these topics, you’ll feel ready for anything they throw at you.

Azure Databricks Interview Process

Application Steps

When I started my azure databricks interview journey, I noticed the application focused on both technical and non-technical skills. The process checked if I could handle data ingestion, build ETL pipelines, and work with Databricks notebooks. I also had to show I understood data governance and machine learning integration. Here’s a quick look at what they wanted:

Requirement Type | Key Points |

|---|---|

Functional Requirements | Data ingestion, ETL pipelines, ad-hoc queries, data governance, ML integration, collaboration |

Non-Functional Requirements | Scalability, availability, latency, reliability, security |

Screening and Assessment

After applying, I got a recruiter call. This call lasted about 30 minutes. The next step was a technical phone screen, which took an hour. During this phase, I faced questions about Databricks administration, Spark optimization, big data analytics, and security. The company used online coding platforms and skill assessments. Here’s what they tested:

Skill Area | Description |

|---|---|

Azure Databricks administration | Managing environments |

Apache Spark optimization | Improving performance |

Big data analytics | Analyzing large datasets |

Machine learning with Azure MLlib | Implementing ML solutions |

Data integration with Azure services | Connecting data across Azure |

Security and compliance | Protecting data and meeting standards |

Technical Rounds

The technical rounds felt intense. I had to answer questions about PySpark, Delta Lake, and Spark architecture. The interviewers asked me to troubleshoot memory issues and explain join strategies. Here are the main skills they checked:

PySpark fundamentals

Performance optimization

Delta Lake concepts

Data engineering scenarios

Spark architecture and components

Handling schema changes

Data governance with Unity Catalog

Optimizing ADF pipelines

Understanding different join strategies

Troubleshooting memory issues in Spark

Scenario-Based Questions

The scenario-based part of the azure databricks interview really tested my problem-solving skills. I had to design a pipeline for a retail surge during Durga Pujo, optimize slow queries, and handle streaming IoT data. Here are some examples:

Question | Description |

|---|---|

Handling Durga Pujo E-Commerce Traffic | Design a scalable ingestion pipeline for a retail client during sales surge. |

Avoiding Synapse Query Bottlenecks | Optimize performance for slow queries on large transaction history. |

Streaming IoT Data from Manufacturing Plants | Handle ingestion from diverse formats across pipelines. |

Partitioning Strategies in ADLS | Partition a large dataset for best performance. |

Handling Schema Drift | Future-proof pipelines for changing source schemas. |

HR and Managerial Rounds

The final stage included a hiring manager call and onsite interviews. The manager wanted to know how I work with teams and handle stress. The onsite interviews could last up to five hours. The whole azure databricks interview process took almost eight weeks from start to finish.

Stage | Duration |

|---|---|

Recruiter Call | 30 minutes |

Technical Phone Screen | 1 hour |

Hiring Manager Call | 1 hour |

Onsite Interviews | Up to 5 hours |

Total Process Duration | Up to 8 weeks |

Tip: Understanding Databricks architecture, clusters, and Azure integration made a huge difference for me. I recommend focusing on these areas before your interview.

Shared Experiences and Questions

Commonly Asked Questions

I’ve talked with other candidates and noticed some questions pop up again and again. Interviewers love to ask about designing data pipelines, optimizing Spark jobs, and choosing the right Azure service for a workload. They often want to know how I would handle cost control and system design. Here are a few examples I keep hearing:

How would you design a scalable ingestion pipeline for real-time data?

What steps do you take to optimize Spark job performance?

Can you explain the trade-offs between using Azure Data Lake and Azure Synapse?

How do you manage costs when running large-scale jobs in Databricks?

What tools do you use for monitoring and troubleshooting?

Tip: I prepare stories from my own projects to answer these questions with real examples.

Unique Scenarios

Some interview scenarios stand out because they test how I solve problems under pressure. I’ve seen candidates share stories where they had to replace a slow Python UDF loop with a Spark SQL window function and cut runtime from 40 minutes to under 5. Others talked about fixing executor out-of-memory errors in streaming jobs by tuning worker nodes and memory, which improved throughput and cut costs by 30%. Here’s a quick look at some unique scenarios and outcomes:

Scenario Description | Outcome |

|---|---|

Replacing a Python UDF loop with a Spark SQL window function in a finance project | Reduced runtime from 40 minutes to under 5 minutes |

Addressing executor OOMs in a streaming job by adjusting worker nodes and memory | Improved throughput while reducing monthly costs by 30% |

Containerizing dependencies and migrating a sentiment-analysis Spark job | Achieved zero data loss during cutover with validated outputs |

Optimizing job performance by addressing partition skew | Reduced job time from 70 minutes to 18 minutes |

Caching DataFrame in a customer churn model to optimize joins | Eliminated shuffle stage, saving two minutes per training epoch |

Using Delta Lake for data governance and anomaly detection | Quickly isolated and reverted bad data loads |

Building a churn classifier with distributed XGBoost and MLflow | Successfully orchestrated model deployment within Databricks |

Insights from Candidates

When I chat with others who have gone through the process, I hear a lot about the focus on real-world problem solving. Interviewers want to see if I can design systems, make smart trade-offs, and pick the right tools for each job. Here’s what other candidates say comes up most:

Type of Question | Description |

|---|---|

Architecture Trade-offs | Design ingestion pipelines and discuss trade-offs between different methods. |

Optimization & Cost Control | Focus on optimizing Spark jobs and managing costs effectively. |

Scenario-Based Azure Services | Show the ability to choose the right tools for specific workloads. |

System Design & Architecture | Design scalable and efficient data systems. |

Real-World Problem Solving | Tackle practical scenarios that require critical thinking and system ownership. |

Note: I practice explaining my decisions and the reasons behind them. That helps me stand out.

Interview Patterns

After hearing so many stories, I see a clear pattern. Most interviews start with basics, then move to scenario-based questions. They always include a technical deep dive and finish with system design or optimization. Interviewers want to know if I can think on my feet and adapt to new challenges. If I prepare for these patterns, I feel much more confident.

Preparation Tips and Strategies during Azure Databricks Interview

Study Resources

When I started preparing, I found that the right resources made a huge difference. Here are some that helped me the most:

Mastering Databricks: A Comprehensive Guide to Learning and Interview Preparation

Introduction to Databricks

When to Use Azure Databricks

Dive into Delta Lake

Apache Spark Architecture Fundamentals

Preparing Data for Machine Learning

Deploying Azure Databricks Models in Azure Machine Learning

Reading and Writing Data in Databricks

Structured Streaming with Databricks

Databricks vs. Snowflake

Top 30 Databricks Interview Questions and Answers

I like to mix official docs, online courses, and hands-on tutorials. This way, I get both theory and practice.

Practice Scenarios

I practice with real-world scenarios. I try to build sample pipelines, optimize queries, and handle schema changes. Sometimes, I create my own mini-projects, like streaming data from IoT devices or partitioning large datasets. This helps me get comfortable with the tools and concepts.

Mock Interviews

Mock interviews help me a lot. I use AI-powered platforms or ask friends to quiz me. These sessions show me where I need to improve. I focus on explaining my thought process out loud, just like I would in the real interview.

Time Management

I break my prep into small, focused sessions. Here’s how I manage my time:

Hands-on practice with Databricks Community Edition or trial accounts.

Review SQL and Spark concepts every day.

Use AI interview simulators for realistic practice.

Work through scenario-based questions, like designing streaming pipelines.

This routine keeps me on track and reduces stress.

Final Checklist

Before the interview, I'm used to check:

Did I review the basics and advanced topics?

Can I explain my projects clearly?

Have I practiced coding and debugging?

Am I ready for scenario-based and behavioral questions?

Did I get enough rest?

Tip: Stay calm and trust your preparation. You’ve got this!

2025 Trends in Azure Databricks Interview

Unity Catalog Focus

Unity Catalog has become a hot topic in interviews this year. I noticed interviewers ask about its features, how to set it up, and why it matters for data governance. They want to know if I can explain data lineage, Delta Sharing, and how to create or configure a Metastore. I prepare to talk about how Unity Catalog helps manage permissions and track data across the platform. If you can explain these points with real examples, you’ll stand out.

Tip: I like to mention how Unity Catalog makes it easier to audit data access and share data securely.

Delta Live Tables

Delta Live Tables questions are everywhere now. Interviewers ask me to compare Delta Live Tables with regular notebooks. They want to know if I understand how Delta Lake works, including ACID transactions, schema evolution, and time travel. I often get questions like:

What’s the difference between Managed and External Delta Tables?

How does Delta Lake handle concurrent reads and writes?

What is Z-Ordering and why is it important?

How do you optimize Delta Tables for performance?

I practice explaining these concepts in simple terms.

GenAI Integration

GenAI is making waves in data engineering interviews. I get questions about building Large Language Model (LLM) solutions, using tools like HuggingFace or Langchain, and integrating AI with Databricks workflows. Interviewers want to see if I can connect AI models to real business problems. I share stories about using GenAI for data enrichment or automating data quality checks.

Security and Compliance

Security and compliance questions have become more detailed. Interviewers ask how I protect sensitive data, set up RBAC, and use Managed Identities. They want to know if I understand how to meet compliance standards and monitor data usage. I mention using Unity Catalog and Azure Monitor for these tasks.

Soft Skills

Technical skills matter, but soft skills are just as important now. Interviewers ask how I communicate with teams, handle feedback, and solve problems under pressure. They want to see if I can explain my decisions and work well with others. I practice sharing examples from my past projects to show my teamwork and adaptability.

Note: If you focus on these trends, you’ll feel ready for any new question in your next interview.

I learned a lot from my azure databricks interview journey. Here’s what helped me most:

Practice real questions and scenarios.

Stay updated with new Databricks features.

Use your own experiences to answer questions.

Remember, preparation builds confidence. Use these tips as a guide, not a script. You’ve got this!

FAQ

What topics should I focus on for Azure Databricks interviews?

I start with Databricks architecture, clusters, notebooks, and Delta Lake. I also review Spark optimization, data governance, and integration with Azure services. These topics come up in almost every interview.

How do I prepare for scenario-based questions?

I practice by building sample pipelines and solving real problems. I use my own project experiences to explain my approach. Interviewers want to see how I think and solve issues step by step.

Do I need to know GenAI or machine learning for the interview?

Yes, I see more questions about GenAI and machine learning now. I learn the basics of LLMs, MLOps, and tools like HuggingFace. Even simple project examples help me answer these questions.

What resources helped me the most?

I use official Databricks documentation, online courses, and hands-on tutorials. I also join community forums and practice with mock interviews. Mixing theory and practice gives me confidence.

How do I handle technical questions if I get stuck?

Using the screenshot feature of Linkjob.ai to check out the ideas or answers provided by the AI.

See Also

Navigating the Databricks New Graduate Interview Journey in 2025

Insights from My Perplexity AI Interview and Questions in 2025

A Look into My Roblox Software Engineer Interview Experience in 2025

Exploring My Palantir Interview Process and Questions in 2025

Understanding My Oracle Software Engineer Interview Questions in 2025