I Passed My 2025 Azure Technical Interview, and Here's How

I just passed the 2025 Azure technical interview. To be honest, getting from the interview invitation through preparation to a pass wasn't easy, so I want to share my experience with you. The whole process was stressful, and at times it was hard to organize my thoughts. But I learned to stay calm, express myself clearly, and walk through my problem-solving steps. I believe that after reading this article, you can do it too! This is the job offer email I received.

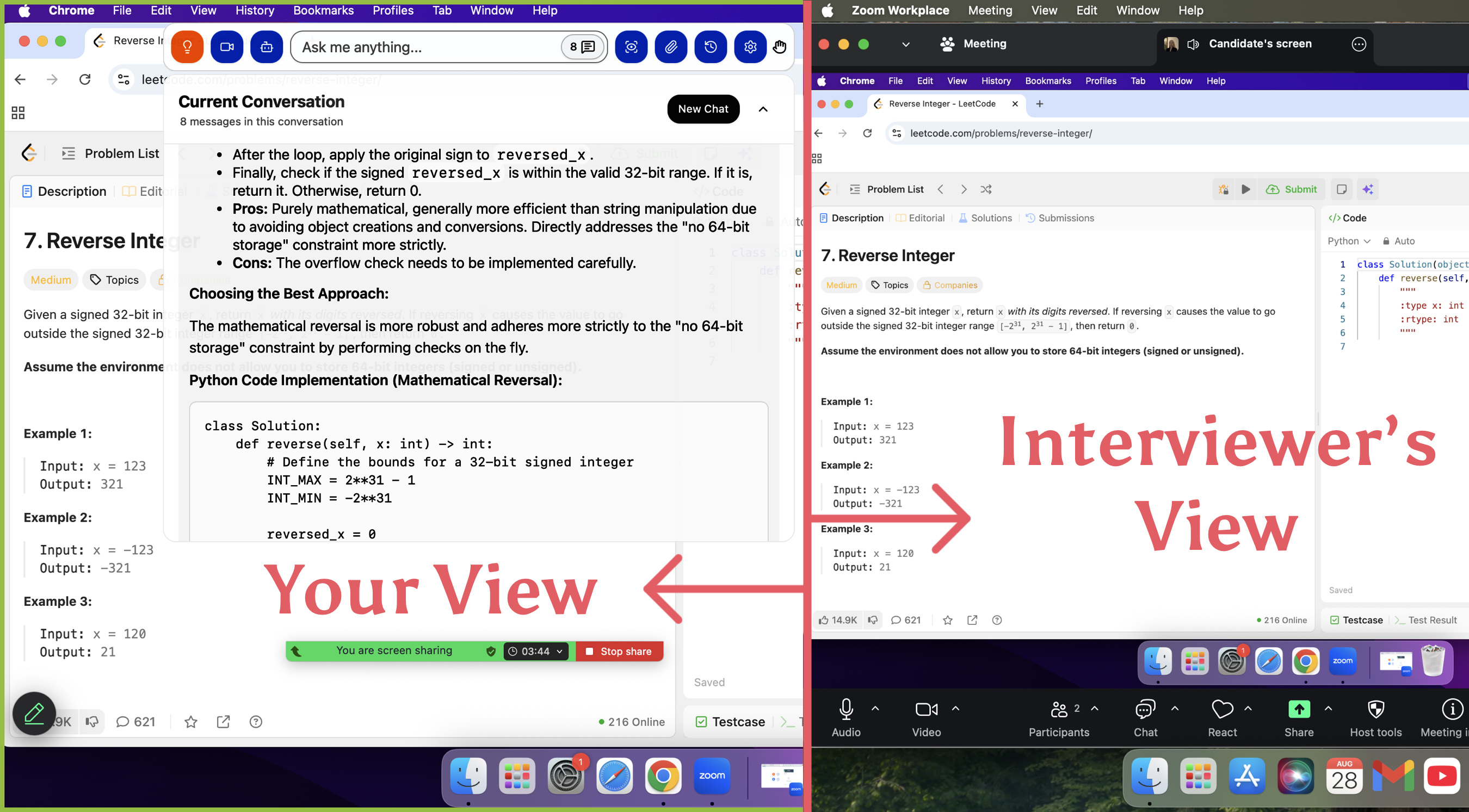


My Azure technical interview Questions

System Design (SD)

Topic A: Design a Two-Node RAID System

Problem Statement: Design a storage system across two nodes supporting write_byte and read_byte operations.

Key Insight: The prompt was intentionally ambiguous. Success relied on an iterative "drilling down" approach: asking clarifying questions to define the scope. Discussions focused on data redundancy (mirroring), consistency models, and handling node failure and recovery scenarios.
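A minimal sketch of the mirroring idea, assuming both nodes are simulated in one process as byte arrays with a health flag. Node, MirroredStore, fail() and recover() are hypothetical names for illustration. A real design would need network RPCs, write acknowledgments, a log of writes missed during an outage (instead of copying the whole peer on recovery), and a policy for writes that arrive during resync.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <stdexcept>
#include <vector>

// Hypothetical in-process stand-in for one storage node.
struct Node {
    std::vector<uint8_t> data;
    bool healthy = true;
    explicit Node(std::size_t capacity) : data(capacity, 0) {}
};

// RAID-1 style mirror: writes go to every healthy node; any healthy node serves reads.
class MirroredStore {
public:
    explicit MirroredStore(std::size_t capacity) : nodes_{Node(capacity), Node(capacity)} {}

    // Mirrored write; fails only if both nodes are down.
    void write_byte(std::size_t addr, uint8_t value) {
        bool written = false;
        for (Node& n : nodes_) {
            if (n.healthy) { n.data.at(addr) = value; written = true; }
        }
        if (!written) throw std::runtime_error("no healthy node");
    }

    // Reads from the first healthy replica; empty if both nodes are down.
    std::optional<uint8_t> read_byte(std::size_t addr) const {
        for (const Node& n : nodes_) {
            if (n.healthy) return n.data.at(addr);
        }
        return std::nullopt;
    }

    void fail(int i) { assert(i == 0 || i == 1); nodes_[i].healthy = false; }

    // Recovery: resynchronize the returning node from its healthy peer.
    void recover(int i) {
        assert(i == 0 || i == 1);
        const Node& peer = nodes_[1 - i];
        if (!peer.healthy) throw std::runtime_error("no healthy peer to resync from");
        nodes_[i].data = peer.data;
        nodes_[i].healthy = true;
    }

    const Node& node(int i) const { return nodes_.at(i); }

private:
    std::array<Node, 2> nodes_;
};
```

Reads keep working while one node is down, and a node that missed writes is brought back in sync before it serves traffic again. That is the core trade-off to discuss before moving on to consistency models.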

Topic B: Distributed Key-Value (KV) Store

Problem Statement: Design a scalable and highly available distributed KV system.

Key Insight: This covered standard distributed systems concepts, including data partitioning (Consistent Hashing), replication protocols, and managing the trade-offs defined by the CAP theorem.
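A minimal consistent-hashing sketch, assuming string node IDs and keys and a hypothetical HashRing class with virtual nodes. Replication (for example, storing each key on the next N distinct nodes clockwise) is left out. std::hash is only required to produce the same result within a single execution of a program, so a real cluster would use a fixed, documented hash function instead.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <map>
#include <string>

// Consistent-hash ring with virtual nodes: each physical node is placed at
// several points on the ring, and a key belongs to the first point at or
// clockwise after hash(key).
class HashRing {
public:
    explicit HashRing(int vnodes_per_node) : vnodes_(vnodes_per_node) {
        assert(vnodes_per_node > 0);
    }

    void AddNode(const std::string& node) {
        for (int v = 0; v < vnodes_; ++v) ring_[Hash(node + "#" + std::to_string(v))] = node;
    }

    void RemoveNode(const std::string& node) {
        for (int v = 0; v < vnodes_; ++v) ring_.erase(Hash(node + "#" + std::to_string(v)));
    }

    // Returns the node that owns `key`; the ring must not be empty.
    const std::string& NodeFor(const std::string& key) const {
        assert(!ring_.empty());
        auto it = ring_.lower_bound(Hash(key));
        if (it == ring_.end()) it = ring_.begin();  // wrap around the ring
        return it->second;
    }

private:
    static std::size_t Hash(const std::string& s) { return std::hash<std::string>{}(s); }

    int vnodes_;
    std::map<std::size_t, std::string> ring_;  // ring position -> physical node
};
```

Removing a node only remaps the keys that node owned; every other key keeps its owner. That property is what makes consistent hashing attractive when nodes join or leave.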

Coding: Optimization of Expensive API Calls

Scenario: You are given a large dataset where each row contains a store's name, address, city, state, zip code, and latitude/longitude. You have access to an expensive external API that calculates precise coordinates based on address data.

Objective: Verify the correctness of the existing coordinates in the file while minimizing the number of API calls.

Proposed Solutions:

Data Deduplication: Group entries by unique address strings to ensure the API is never called twice for the same location.

Probabilistic Sampling: Depending on accuracy requirements, validate a statistically significant sample first before committing to full validation.

Preprocessing: Clean and normalize address strings to increase the hit rate of cached or previously queried data.
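A minimal sketch combining preprocessing and deduplication, assuming the expensive API is wrapped in a geocode callback that takes a normalized address string and returns coordinates. StoreRow, Coord, NormalizeAddress, FindBadRows and the tolerance in degrees are hypothetical names chosen for illustration. Sampling is not shown, and real address normalization (for example "St" vs "Street") needs more rules than lowercasing and dropping punctuation.

```cpp
#include <cassert>
#include <cctype>
#include <cmath>
#include <cstddef>
#include <functional>
#include <string>
#include <unordered_map>
#include <vector>

struct Coord { double lat; double lon; };

struct StoreRow {
    std::string name, address, city, state, zip;
    Coord coord;  // coordinates already present in the file
};

// Lowercases, collapses runs of whitespace, and drops punctuation.
std::string NormalizeAddress(const StoreRow& r) {
    std::string raw = r.address + " " + r.city + " " + r.state + " " + r.zip;
    std::string out;
    bool pending_space = false;
    for (unsigned char c : raw) {
        if (std::isalnum(c)) {
            if (pending_space && !out.empty()) out += ' ';
            out += static_cast<char>(std::tolower(c));
            pending_space = false;
        } else if (std::isspace(c)) {
            pending_space = true;
        }  // any other punctuation is dropped
    }
    return out;
}

// Returns indices of rows whose stored coordinates differ from the API's answer
// by more than `tol` degrees. `geocode` runs at most once per normalized address.
std::vector<std::size_t> FindBadRows(const std::vector<StoreRow>& rows,
                                     const std::function<Coord(const std::string&)>& geocode,
                                     double tol) {
    assert(tol >= 0.0);
    std::unordered_map<std::string, Coord> cache;  // normalized address -> API result
    std::vector<std::size_t> bad;
    for (std::size_t i = 0; i < rows.size(); ++i) {
        std::string key = NormalizeAddress(rows[i]);
        auto it = cache.find(key);
        if (it == cache.end()) it = cache.emplace(key, geocode(key)).first;
        const Coord& truth = it->second;
        if (std::fabs(truth.lat - rows[i].coord.lat) > tol ||
            std::fabs(truth.lon - rows[i].coord.lon) > tol) {
            bad.push_back(i);
        }
    }
    return bad;
}
```

Normalizing before the cache lookup is what lets "1 Main St." and "1  main st" share a single API call.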

Hiring Manager (HM) Interview: Space-Efficient Sorting

Problem Statement: Given a set of unique, unsorted integers in the range $[0, 32000]$, implement a sorting algorithm that writes the results to a file using two helper functions: getNum() and putNum().

Technical Solution:

This is a classic "low-level" systems problem often solved using a BitMap (Bitset).

Implementation: Since the range is small and fixed (32,001 possible values), an array of bits can be used where each bit represents an integer.

Efficiency:

1. Initialize a bit array of 32,001 bits (approx. 4 KB of memory).
2. Iterate through the input using getNum(), setting the bit at the corresponding index to 1.
3. Iterate through the bit array from index 0 to 32,000; if a bit is 1, call putNum() for that index.
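A minimal C++ sketch of those three steps. The interview did not pin down the helper signatures, so this assumes getNum() returns -1 once the input is exhausted and putNum() writes one value to the output file; both are passed in as std::function callbacks.

```cpp
#include <bitset>
#include <cassert>
#include <functional>

constexpr int kMaxValue = 32000;

// Bitmap sort for unique integers in [0, kMaxValue].
// Assumption: getNum() returns -1 when there is no more input.
void BitmapSort(const std::function<int()>& getNum, const std::function<void(int)>& putNum) {
    std::bitset<kMaxValue + 1> seen;  // 32,001 bits, about 4 KB
    // Step 2: mark every input value.
    for (int n = getNum(); n != -1; n = getNum()) {
        assert(n >= 0 && n <= kMaxValue);
        seen.set(n);
    }
    // Step 3: emit marked values in ascending order.
    for (int i = 0; i <= kMaxValue; ++i) {
        if (seen.test(i)) putNum(i);
    }
}
```

One bit per value works only because the integers are unique; if duplicates were allowed, each slot would need a counter instead.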


Other Azure technical interview questions and answers

Problem Statement

Design a system to process data blocks and identify Unique Blocks for storage optimization.

Definitions:

Unique Block: A block is defined as "unique" if it shares the same Size and Checksum as a previously seen block, but originates from a different Address.

Full Record Duplicate: A block where the Address, Size, and Checksum are all identical to a previous entry (estimated at 3% of total records).

Requirements:

Identify and preserve Unique Blocks based on the definition above.

Write these identified unique blocks to an external output file.

Calculate the Redundancy Ratio using the formula:

$\text{Redundancy Ratio} = \frac{\text{Total Block Size} - \text{Unique Block Size}}{\text{Total Block Size}}$
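As a worked example with hypothetical numbers, reading Unique Block Size as the bytes of content seen for the first time: if 1,000 GB of block data contains 900 GB of first-occurrence content, the ratio matches the ~10% expected redundancy listed in the constraints.

$$\text{Redundancy Ratio} = \frac{1000\ \text{GB} - 900\ \text{GB}}{1000\ \text{GB}} = \frac{100}{1000} = 0.10$$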

Constraints & Assumptions

Physical Memory: 16 GB.

Expected Redundancy: ~10%.

Full Record Duplication: ~3%.

Deliverables: API design, data structure selection, and implementation logic.

Proposed Design

1. Data Structures

To handle the 16 GB memory constraint, we need an efficient way to track "seen" content signatures without storing the entire payload.

Fingerprint Table (Hash Map):

Key: A composite of (Checksum, Size).

Value: A metadata flag or a counter.

Note: Since we need to identify "different addresses," the first time a (Checksum, Size) is seen, we store it. Subsequent occurrences with different addresses trigger the "Unique Block" logic.

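Bloom Filter (Optional): A negative Bloom filter answer means the (Checksum, Size) has definitely not been seen, so brand-new blocks can be recorded without first querying the hash map. A positive answer may be a false positive and still needs the hash map lookup.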
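A minimal Bloom filter sketch over (Checksum, Size) fingerprints. FingerprintBloom is a hypothetical class; it derives its probe positions by double hashing (probe i = h1 + i·h2 mod m) from a SplitMix64-style mixer, which is one common choice rather than anything the interview required. The bit count m and hash count k would normally be sized from the expected block count n and a target false-positive rate p, using m ≈ -n ln p / (ln 2)² and k ≈ (m / n) ln 2.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

class FingerprintBloom {
public:
    FingerprintBloom(std::size_t num_bits, int num_hashes)
        : bits_(num_bits, false), k_(num_hashes) {
        assert(num_bits > 0 && num_hashes > 0);
    }

    void Add(uint64_t checksum, uint32_t size) {
        for (int i = 0; i < k_; ++i) bits_[Probe(checksum, size, i)] = true;
    }

    // false => definitely never added; true => possibly added (confirm in the hash map).
    bool MightContain(uint64_t checksum, uint32_t size) const {
        for (int i = 0; i < k_; ++i) {
            if (!bits_[Probe(checksum, size, i)]) return false;
        }
        return true;
    }

private:
    // SplitMix64 finalizer: scrambles a 64-bit value.
    static uint64_t Mix(uint64_t x) {
        x += 0x9e3779b97f4a7c15ULL;
        x = (x ^ (x >> 30)) * 0xbf58476d1ce4e5b9ULL;
        x = (x ^ (x >> 27)) * 0x94d049bb133111ebULL;
        return x ^ (x >> 31);
    }

    // Double hashing: the i-th probe is h1 + i * h2 (mod number of bits).
    std::size_t Probe(uint64_t checksum, uint32_t size, int i) const {
        uint64_t h1 = Mix(checksum ^ (static_cast<uint64_t>(size) << 32));
        uint64_t h2 = Mix(h1) | 1;  // odd step
        return static_cast<std::size_t>((h1 + static_cast<uint64_t>(i) * h2) % bits_.size());
    }

    std::vector<bool> bits_;
    int k_;
};
```

Because a Bloom filter never produces false negatives, it can only skip work for new content; every "maybe" still goes to the fingerprint table.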

2. API Design

```cpp
#include <cstdint>
#include <string>

struct Block {
    uint64_t address;
    uint32_t size;
    uint64_t checksum;
};

class DeduplicationService {
public:
    // Processes incoming block metadata
    void ProcessBlock(const Block& block);

    // Returns the calculated redundancy ratio
    double GetRedundancyRatio() const;

    // Persists unique blocks to the target file
    void ExportUniqueBlocks(const std::string& outputPath);
};
```

3. Implementation Logic

Filter Full Duplicates (3% Case): Use a small LRU cache or a secondary hash of (Address, Size, Checksum) to identify and skip blocks that are exact replicas of previous records.

Uniqueness Logic: For every block that survives the filter, add its size to TotalBlockSize, then query the Fingerprint Table using (Checksum, Size).

If Key exists: This is a "Unique Block" (same content, different address). Mark it for export.

If Key does not exist: This is the first time we've seen this content. Add the key to the table and add the block's size to UniqueBlockSize.

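Memory Management: 16 GB is generous for metadata alone. If each entry (a 16-byte key plus overhead) takes about 32 bytes, 16 GB holds roughly 537 million entries (16 × 2^30 ÷ 32), so on the order of 500 million blocks can be tracked in RAM. Node-based maps such as std::unordered_map typically add more per-entry overhead than that, so treat this as an upper bound. If the dataset exceeds it, we would implement External Sorting or Length-Partitioned Indexing.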
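A minimal in-memory sketch of the DeduplicationService API above. Full duplicates are skipped, every surviving block adds to the total, first sightings of a (Checksum, Size) add to the unique size, and repeats at a new address are queued for export. To stay short it assumes everything fits in RAM and uses an exact hash set for full-duplicate filtering instead of the LRU cache mentioned in the text, so it does not bound memory. The hash helpers, the UniqueBlocks() accessor and the comma-separated output format are assumptions for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <fstream>
#include <functional>
#include <string>
#include <unordered_set>
#include <vector>

struct Block {
    uint64_t address;
    uint32_t size;
    uint64_t checksum;
};

// Hypothetical hash-combine helper.
inline std::size_t HashMix(std::size_t seed, uint64_t v) {
    return seed ^ (std::hash<uint64_t>{}(v) + 0x9e3779b97f4a7c15ULL + (seed << 6) + (seed >> 2));
}

// Fingerprint = (checksum, size); the address is ignored.
struct FingerprintEq {
    bool operator()(const Block& a, const Block& b) const {
        return a.checksum == b.checksum && a.size == b.size;
    }
};
struct FingerprintHash {
    std::size_t operator()(const Block& b) const { return HashMix(HashMix(0, b.checksum), b.size); }
};
// Full record = (address, size, checksum).
struct RecordEq {
    bool operator()(const Block& a, const Block& b) const {
        return a.address == b.address && FingerprintEq{}(a, b);
    }
};
struct RecordHash {
    std::size_t operator()(const Block& b) const { return HashMix(FingerprintHash{}(b), b.address); }
};

class DeduplicationService {
public:
    // Processes incoming block metadata
    void ProcessBlock(const Block& block) {
        if (!records_.insert(block).second) return;  // full-record duplicate: skip
        total_size_ += block.size;
        if (fingerprints_.insert(block).second) {
            unique_size_ += block.size;              // first sighting of this content
        } else {
            unique_blocks_.push_back(block);         // same content, different address
        }
    }

    // (Total Block Size - Unique Block Size) / Total Block Size
    double GetRedundancyRatio() const {
        assert(unique_size_ <= total_size_);
        if (total_size_ == 0) return 0.0;
        return static_cast<double>(total_size_ - unique_size_) / static_cast<double>(total_size_);
    }

    // Persists unique blocks to the target file as "address,size,checksum" lines
    void ExportUniqueBlocks(const std::string& outputPath) {
        std::ofstream out(outputPath);
        for (const Block& b : unique_blocks_) {
            out << b.address << ',' << b.size << ',' << b.checksum << '\n';
        }
    }

    const std::vector<Block>& UniqueBlocks() const { return unique_blocks_; }

private:
    std::unordered_set<Block, RecordHash, RecordEq> records_;
    std::unordered_set<Block, FingerprintHash, FingerprintEq> fingerprints_;
    std::vector<Block> unique_blocks_;
    uint64_t total_size_ = 0;
    uint64_t unique_size_ = 0;
};
```

Because full duplicates are removed first, any later hit on the fingerprint set must come from an address not yet seen with that content, which is exactly the "Unique Block" condition.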

Azure technical interview process

Interview Stages

When I went through the Azure technical interview, I noticed a clear structure. First, I had a phone screen with a recruiter. This part focused on my background and why I wanted to work with Azure. Next, I joined a technical screening. Here, I answered scenario-based questions and sometimes solved problems live. The final stage was a panel interview. I spoke with engineers and managers. They wanted to see how I think, not just what I know.

Question Types

The interviewers asked a mix of technical and practical questions. I saw questions about Azure services, networking, security, and cost management. Some questions tested my coding and scripting skills. Here’s a table with examples of the types of questions I faced:

| Question | Topic |
|---|---|
| What is Azure Front Door, and how is it different from Traffic Manager? | Azure Services |
| How do you establish a private connection to Azure without using the internet? | Networking |
| What is the difference between Azure DevOps and GitHub Actions? | DevOps |
| How does Azure Blueprints help with compliance? | Compliance |
| What are the benefits of using Terraform for Azure instead of ARM templates? | Infrastructure as Code |

Understanding Azure deployment models and core services helped me answer these questions with confidence.

What Interviewers Assess

During my Azure technical interview, I realized interviewers cared about more than just technical depth. They wanted to see if I could explain complex ideas in simple terms. They also checked how I adapted my explanations for different audiences. Here’s what they looked for:

| Assessment Criteria | Description |
|---|---|
| Communication Skills | How effective is the candidate at communicating technical concepts to different audiences, from highly technical engineers to business stakeholders? |
| Adaptability | Evidence of the candidate's ability to adapt communication style based on the audience, including specific examples of translating complex technical concepts. |

If you can break down tough topics and adjust your style, you’ll stand out in any Azure technical interview.

Common experiences and frequently asked questions in Azure technical interviews

Advice from Other Candidates

When I talked to others who passed the Azure technical interview, I noticed some patterns. Most people stressed the value of real, hands-on experience. Reading about Azure is helpful, but actually building things makes a big difference. Here are some tips I heard again and again:

Get comfortable with Azure AD, virtual machines, Kubernetes, and DevOps tools.

Practice designing secure networks and troubleshooting VM connectivity.

Learn how to automate tasks and enforce security across subscriptions.

Focus on real-world scenarios, not just theory.

Tip: If you can explain how you solved a problem in your own words, you’re on the right track.

Frequently Asked Questions

I often get questions from friends who want to prepare for Azure interviews. Here are some of the most common ones:

What topics should I focus on?

How much do I need to know about scripting or automation?

Do I need to memorize every Azure service?

How do I handle scenario-based questions?

I always say, focus on understanding how things work together. You don’t need to know everything, but you should know how to find answers and solve problems.

Trends in Azure Interviews

I’ve noticed some clear trends in the past year. Companies now ask more scenario-based questions. For example, they might ask how I would handle data drift in a production pipeline or what I would do if a Databricks cluster keeps crashing. Interviewers want to see hands-on skills, like debugging failed pipelines or setting up triggers and Key Vault access. They also expect me to think about the big picture: how data moves, scales, and stays secure in Azure.

Azure Technical Interview Preparation Tips

Study Resources

I found that the right study materials made a huge difference for me. I didn’t just read documentation. I used guides, blogs, and video tutorials that explained real-world scenarios. Here’s a table of resources that helped me the most:

| Resource | Description |
|---|---|
| Azure Interview Prep Guide for Admins, Architects, DevOps, Developers | A comprehensive guide that covers AWS, Linux, Jenkins, Kubernetes, Terraform, CI/CD, and cloud basics. It works for both beginners and experienced engineers. |
| Docker Q&A Guide | This guide has over 80 questions about Docker, from basics to advanced topics. It’s great for DevOps Engineers, Cloud Architects, and Software Developers. |

I also spent time on Microsoft Learn and the Azure Architecture Center. These sites offer hands-on labs and up-to-date tutorials.

Practice Strategies

Practice made me feel ready for anything. I didn’t just memorize facts. I focused on real tasks and scenarios. Here’s what worked for me:

I learned core Azure concepts and how they fit together.

I built pipelines using Azure Data Factory, Databricks, and Delta Lake.

I practiced reading Spark execution plans and debugging real issues.

I designed modular pipelines and used parameterization.

I worked through scenario-based questions and explained my solutions out loud.

I used mock interviews and question banks to rehearse.

I completed labs to deploy services like VNets and VMs.

I recorded myself explaining architecture decisions.

Tip: The more hands-on practice you get, the more confident you’ll feel.

Time Management

Managing my time kept me on track. I broke my prep into small steps and stayed consistent. Here’s how I did it:

Break It Down: I focused on one topic at a time instead of trying to learn everything at once.

Stay Consistent: I studied a little every day. Even short sessions helped me remember more.

Use the Right Tools: I used interactive tools and mock interviews to get feedback and simulate real interview conditions.

I gave myself 4–8 weeks to prepare. I did at least 10 mock interviews and wrote out 4–6 STAR stories to share my experiences. I also set aside time for hands-on labs and take-home projects.

Remember: Small, steady steps add up to big results.

Personal Insights and Lessons Learned from Azure Technical Interviews

Building Confidence

I used to feel nervous before Azure interviews. Over time, I learned that confidence comes from more than just technical skills. I focused on soft skills like communication and problem-solving. I made sure I could explain my ideas clearly and work well with others. Employers want candidates who are well-rounded and can fit into a team. Here’s what helped me build confidence:

I practiced explaining technical concepts in simple terms.

I worked on real Azure projects with friends.

I asked for feedback and improved my answers.

I reminded myself that everyone makes mistakes and learns from them.

Tip: Confidence grows when you combine technical knowledge with strong communication.

Mistakes to Avoid

I made plenty of mistakes during my prep. I learned to avoid these common pitfalls:

Skipping the AZ-104 Exam Skills Outline and missing key topics.

Ignoring hands-on labs and struggling with real-world tasks.

Neglecting role-based certification content.

Using outdated Azure materials and getting confused by old features.

Cramming instead of following a structured study plan.

Skipping practice tests and feeling lost during the interview.

Underestimating the time required for Azure topics.

Note: A structured study plan and current resources make a huge difference.

Final Advice

If you want to pass your Azure technical interview, here’s my advice:

Understand core services like compute, storage, and networking.

Spend time in the Azure Portal and use CLI or PowerShell for tasks.

Stay updated with new Azure features.

Learn security best practices and be ready to discuss them.

Practice scenario-based questions until you feel comfortable.

Remember: Hands-on practice and clear communication will set you apart. You’ve got this!

I found that focused study and hands-on practice made all the difference. I spent at least two weeks working with Power BI, SQL, and mock interviews. I used expert resources every day. Here are the ones I relied on:

| Resource Name | Description |
|---|---|
| Microsoft Learn | Technical prep for Azure roles. |
| Azure Architecture Center | System design and best practices. |
| Microsoft Developer Blogs | Latest updates and innovations. |
| LinkedIn Learning Microsoft Courses | Extra courses for skills and growth. |

Preparation boosted my confidence and helped me show my problem-solving skills.

Learning from others gave me new ideas and strategies.

You can do this too! Stay curious, keep practicing, and trust your journey.

Azure Technical Interviews FAQ

How much Azure experience did I need before the interview?

I had about one year of hands-on Azure work. I built small projects and practiced daily. Real experience helped me answer scenario questions.

Did I need to know every Azure service?

No, I focused on core services like VMs, storage, and networking. I learned how to find answers fast. Interviewers cared more about my problem-solving.

What helped me stay calm during the interview?

I practiced mock interviews with friends. I took deep breaths and reminded myself to explain my thinking step by step. That made a big difference.
