My 2026 Databricks System Design Interview: Tough Qs Solved

I aced the Databricks system design interview by prioritizing real-world business scenario design and conducting multiple rounds of trade-off discussions. Google Docs replaced the traditional whiteboard in the interview, which required me to clearly articulate my design thinking through text and diagrams. In my preparation, I focused on mastering core data platform concepts including data ingestion, ETL, and scalability, and leveraged an AI interview copilot to practice business problems for specific scenarios. This allowed me to gain a deep understanding of how to balance throughput, latency, and reliability. Thanks to thorough preparation, I stayed composed and tackled tough interview questions with confidence. Now, I’ve been invited by Linkjob.ai to share my hands-on experience from this interview.

Databricks System Design Interview Process

My Background And Motivation

I started my journey with a clear goal. I wanted to join a team that works on big data problems at scale. Databricks seemed like the perfect place for me. I had experience with Spark and data engineering, but I knew the databricks system design interview would push me to think deeper. My motivation came from wanting to solve real-world problems and learn from the best in the industry.

Preparation Steps

I didn’t just jump into the interview. I made a plan and stuck to it. Here’s what helped me the most:

I practiced using Google Docs since Databricks uses it instead of a whiteboard. This made me focus on clear writing and diagrams.

I reviewed core system design concepts like scalability, reliability, and data flow.

I’ve compiled high-frequency system design questions from past interviews, with a focus on distributed systems and Spark-related topics. I break down these questions for in-depth analysis, do targeted practice, and continuously refine my problem-solving approaches and explanatory clarity.

Interview Format And Timeline

The databricks system design interview process took about six weeks for me. It included several rounds, each with a different focus. Here’s a quick look:

Interview Assessment Type | Core Assessment Content | Preparation Strategy |

|---|---|---|

Core Technical Interview (VO) Session (Big Data, SQL, Programming) | It not only assesses the ability to use tools, but also focuses on three core points: 1. The depth of understanding of the underlying principles of technology; 2. The ability to apply technology to solve practical business problems; 3. The level of engineering in code (standardized, efficient, and implementable). | 1. Delve deeply into the underlying principles of big data, SQL, and programming (such as Spark SQL execution mechanism and code optimization logic); 2. Practice business scenario questions to integrate technology with actual requirements; 3. Standardize code writing, review and optimize past project code, and improve engineering thinking. |

Senior Big Data + Real-Time Processing System Design Interview | It focuses on assessing the in-depth understanding of the underlying principles of technology and the ability to use relevant technologies to build a big data platform with high throughput, low latency, and high reliability; special attention is paid to how to convert vague business requirements into implementable and scalable technical solutions. | 1. Focus on mastering the underlying logic of real-time processing frameworks and understanding the implementation principles of high throughput, low latency, and high reliability; 2. Practice decomposing vague business requirements and sorting out technical implementation paths; 3. Accumulate big data platform design cases and summarize design skills for scalable solutions. |

Special Focus of System Design Interview | It focuses on assessing the depth of understanding of big data processing and distributed systems, especially the application of Databricks core products (Delta Lake, Spark); it not only requires the ability to draw clear architecture diagrams, but also values the ability to implement design ideas into specific code and handle detailed issues. | 1. Thoroughly understand the core features and application scenarios of Delta Lake and Spark, and deepen the understanding combined with the principles of distributed systems; 2. Practice drawing architecture diagrams to ensure clear logic and prominent key points; 3. Decompose architecture design into specific code snippets, conduct targeted practice on detail handling, and avoid common pitfalls. |

There were also managerial and technical rounds. The technical rounds got harder each time, covering topics like SQL, Spark internals, and streaming.

Handling Ambiguous Questions

Some questions felt open-ended or unclear. I learned to ask clarifying questions and state my assumptions. For example, if the interviewer asked about scaling a hit counter, I’d ask about expected traffic and latency needs. This helped me show my thought process and adapt my solution. Using Google Docs made it easier to organize my ideas and update them as the conversation changed.

Tip: Don’t be afraid to pause and clarify requirements. Interviewers appreciate it when you think before you answer.

The databricks system design interview tested my ability to communicate, adapt, and solve problems. Preparation and a clear strategy made all the difference.

Databricks System Design Interview: Toughest Questions

Real Questions Asked

When I sat down for the databricks system design interview, I faced some questions that really made me think. Here are a few that stood out:

Design a Spark job that processes terabytes of data every 10 minutes on Databricks.

Build a Lakehouse architecture using Bronze, Silver, and Gold Delta layers. The question included data governance, RBAC-based access, and schema evolution.

Create a data lakehouse for customer transactions with Delta tables. I had to include audit logging and a metadata strategy for governance and access control.

These questions tested my understanding of big data, cloud architecture, and how to make systems reliable and scalable. I realized that the interviewers wanted to see how I would handle real-world problems, not just textbook scenarios.

My Approach And Solutions

I knew I had to break down each problem and show my thought process. Here’s how I tackled them:

Spark Job for Terabytes of Data

I started by asking about the data sources and expected throughput. I wanted to know if the job needed to run in real time or batch mode.

I sketched out a workflow in Google Docs. I included steps for data ingestion, partitioning, and error handling.

I chose Spark Structured Streaming for real-time needs. I explained how I would use checkpointing and windowing to manage state.

I talked about scaling the cluster. I suggested using autoscaling and monitoring resource usage.

Lakehouse Architecture with Delta Layers

I described the Bronze, Silver, and Gold layers. Bronze would store raw data, Silver would clean and enrich it, and Gold would hold business-ready tables.

I added diagrams to show how data flows between layers.

I discussed RBAC for access control. I explained how schema evolution works in Delta Lake.

I included a plan for data governance. I mentioned using audit logs and metadata management.

Customer Transactions Data Lakehouse

I focused on reliability and security. I suggested using Delta tables for ACID transactions.

I explained how to set up audit logging for every change.

I described a metadata strategy. I showed how to track data lineage and access patterns.

Tip: I always made sure to state my assumptions and ask clarifying questions. This helped me avoid rushing and overcomplicating my answers.

I kept my solutions simple and clear. I avoided jargon and wrote out my ideas step by step. I used tables and diagrams in Google Docs to organize my thoughts.

Feedback And Insights

After each round, the interviewers gave me feedback. Here’s what I learned:

They liked when I explained my thought process clearly. If I skipped steps or used too much jargon, they asked me to slow down.

I realized that understanding core concepts helped me answer follow-up questions. For example, knowing how Delta Lake handles schema changes made it easier to discuss data governance.

The interviewers wanted to see both high-level and low-level design skills. I had to sketch out workflows and also write pseudocode for key parts.

Time management mattered. I learned to focus on the most important parts of the problem and not get lost in details.

Note: Many candidates rush through their answers or try to sound too technical. I found that simple, well-structured solutions worked best.

Recommended Resources

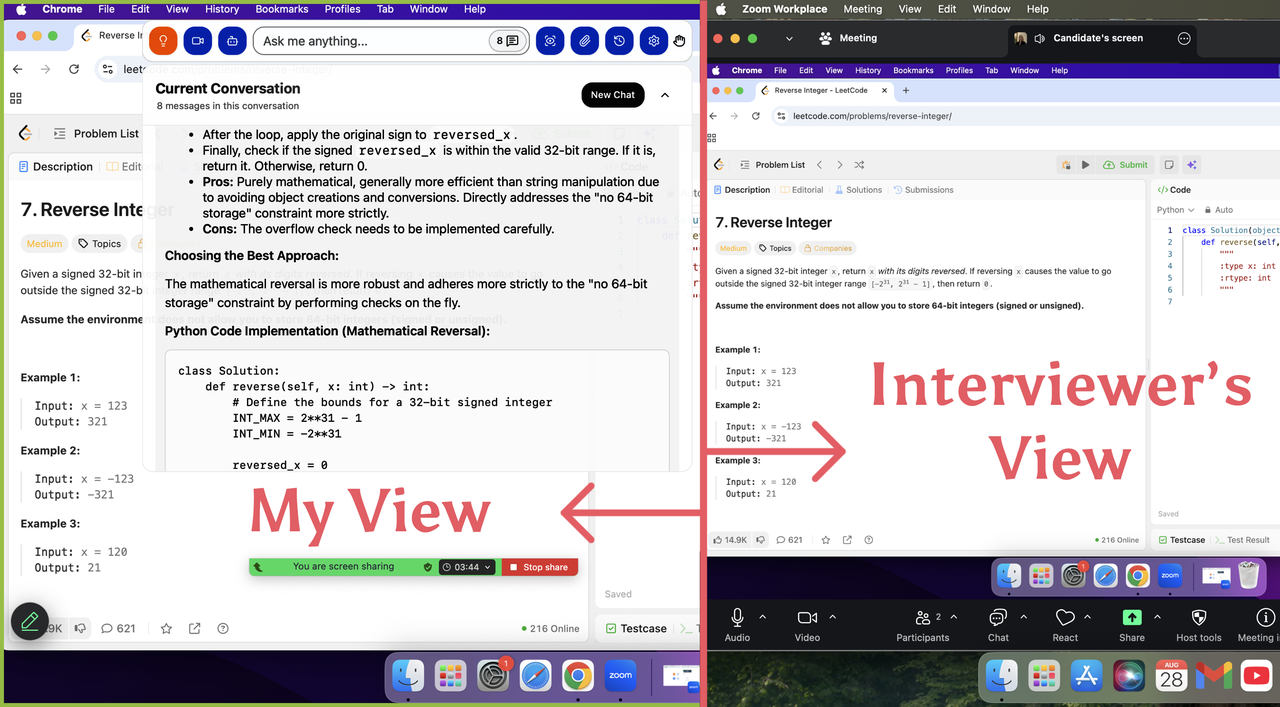

Linkjob.ai is an incredibly practical AI interview tool. During my interview preparation, it helped me run mock interviews tailored to real-world business scenarios; in the actual interview, it also organized clear response frameworks for me and assisted with my answers, ultimately enabling me to pass Databricks' system design interview smoothly.

Community Insights And Shared Experiences

Common Challenges

When I talked with other candidates and read their stories online, I noticed we all faced similar hurdles. Here are the most common challenges I saw:

Many people struggled to show their Databricks expertise during interviews. They knew the tools but found it hard to explain their decisions.

Some candidates felt lost about what to prepare. The range of topics—Databricks architecture, Delta Lake basics, optimization tricks, and orchestration—felt overwhelming.

Interviewers wanted us to start with the business problem, not just the tech. They liked when we shared metrics, explained architecture choices, and talked about schema evolution.

Performance optimization stories, error handling, and security came up a lot. I realized interviewers wanted to see end-to-end pipeline ownership, not just code snippets.

I learned that focusing on real business impact and clear architecture decisions made my answers stand out.

Notable Shared Questions

The reason Databricks' system design interview is considered "difficult" is not that the questions are overly abstract, but that it requires exceptional performance at both the high-level and low-level stages. Interviewers not only review my macro-level architecture diagrams but also focus on how I translate the design into runnable code to solve granular engineering challenges.

High-Level Design: Building with Blocks, But Steadily

This section tests your architectural thinking and understanding of core big data concepts. You need to transform ambiguous business requirements into a scalable technical solution that meets performance constraints.

Real-Time Fraud Detection System: This is a flagship problem in Databricks interviews. The core is using Spark Structured Streaming to consume Kafka data, perform real-time feature engineering, and invoke MLflow models for inference—with Delta Lake ensuring ACID transaction guarantees throughout the process. Interviewers will dive deep into challenges such as cold start handling, model freshness, and late-arriving data processing.

Book Price Comparison Platform: When users submit a request, the system needs to call multiple bookstore APIs to find the lowest price. The focus is on designing request distribution and result aggregation modules, controlling concurrency via thread pools, and implementing timeout and circuit-breaking mechanisms to guarantee latency performance.

Multi-Tenant Data Platform: Design a platform capable of serving different business units with varying SLA requirements. Key considerations include resource isolation, security boundaries, cost accounting, as well as enabling cross-tenant analysis and data sharing.

Low-Level Design: Crafting Details with Precision

This is a distinctive feature of Databricks' interviews and a "nightmare" for many candidates. Interviewers may ask you to write pseudocode or outline functional logic to address granular issues such as multithreading, concurrent writes, and other detailed engineering challenges.

Log Writer (Multithreaded Log Writing): Design a system that supports multithreaded, concurrent writing of logs to disk. Key considerations include handling race conditions, ensuring log order consistency, and using WAL (Write-Ahead Logging) to prevent data loss in the event of a service crash.

Durable KV Store (Single-Machine Key-Value Store): Design a persistent, single-machine key-value store. The focus is on using WAL to guarantee crash recovery, and balancing performance with consistency through read-write locks and fine-grained locks (e.g., locking based on key-hash sharding).

Distributed Cache System: Extend the classic LRU Cache to a distributed environment. Beyond ensuring thread safety, you must address how to maintain cache consistency across multiple nodes.

Challenges and Coping Strategies

Drilling into Details: Nowhere to Hide,Interviewers will probe into the finest of details. For example, if you propose using a Prefix Tree to index file paths, they might ask, "If a directory contains hundreds of millions of files (e.g., /a/b/1.../a/b/1000000000), can your indexing structure handle that? How would you implement pagination?"

Code Quality is Non-Negotiable:Not only must the code be functional, but it should also be logically clear and stylistically clean. For topics related to ML tool development (e.g., MLflow), interviewers will delve into design details and the trade-offs between different solutions.

Test Case Mindset:During coding or low-level design tasks, interviewers highly value your ability to design test cases that cover various user behaviors and edge cases. This reflects the completeness and rigor of your problem-solving approach.

Product knowledge is a significant advantage: A deep understanding of core products like Spark, Delta Lake, and MLflow delivers a notable edge. When answering questions, you can naturally link your responses to the design principles of these tools—for example, noting, "This aligns with the design philosophy of Spark’s Shuffle Write module"—to effectively showcase the depth of your understanding of these products.

Peer Lessons

I picked up some great tips from others who went through the process:

I leveraged an AI interview assistant for mock interviews, which sharpened my thought process significantly for the formal interview.

For both technical and behavioral questions, I structured my answers systematically and applied the STAR method consistently throughout the process, with highly effective results.

Interview Preparation Tips And Strategies

Structuring Answers

When I started prepping for Databricks interviews, I realized that structuring my answers made a huge difference. I always tried to break down my thoughts so the interviewer could follow along. Here’s a table that helped me organize my approach:

Strategy | Description |

|---|---|

Prepare for Scenario-Based Questions | I focused on solving real-world problems, like handling schema changes in live Delta tables. |

STAR Method for Behavioral Questions | I used the STAR method to prepare stories that highlighted teamwork and conflict resolution. |

Structured Communication | When tackling the system design (SD) interview, I treat it just like a project design review at work. I start by clarifying the functional requirements—such as inputs/outputs and target users—then outline the non-functional ones (performance, reliability, security, and so on), and finally break down the design step by step. This approach lets me clearly walk interviewers through my thought process within the limited time. |

Depth Trumps Breadth | For positions at the L6/L7 level, interviewers place greater emphasis on my understanding of details. Rather than providing a high-level architecture diagram, it is more impactful to dive deep into the implementation specifics of a component—such as why an LSM-tree is chosen over a B+ tree, or how to optimize the performance of a Spark job. |

Familiarity with the Databricks Ecosystem | A deep understanding of core products including Spark, Delta Lake, and MLflow is a huge advantage for me. When answering questions, I can naturally tie my responses to the design philosophies of these tools—for instance, referencing "This aligns with the design principles of Spark’s Shuffle Write module" lets me effectively demonstrate the depth of my understanding. |

Test Case Mindset | During coding or low-level design sessions, interviewers highly value my ability to design test cases that cover all user behaviors and edge cases. This speaks to the thoroughness and rigor of my problem-solving approach. |

I found that emphasizing real-world problem-solving and practicing how I explained my ideas made my answers much clearer. I always prepared STAR stories to showcase my experiences.

Clarifying Requirements

I learned not to jump straight into technical solutions. Instead, I started by clarifying the problem. Here’s how I did it:

I asked about the business goals and what success looked like.

I made sure to ask specific questions about the data source, like whether it came from Kafka, Event Hubs, or cloud storage.

I checked the data format—was it JSON, Parquet, or CSV?

Asking these questions helped me avoid misunderstandings and made my solutions more relevant.

Tip: Pausing to clarify requirements shows that you care about solving the right problem, not just any problem.

Time Management

Time always felt tight during interviews. I set a timer when practicing at home. I spent the first few minutes clarifying requirements, then outlined my solution before diving into details. If I got stuck, I moved on and circled back later. This kept me focused and calm.

I used bullet points to organize my thoughts quickly.

I prioritized the most important parts of the problem first.

Mindset And Mistakes To Avoid

Staying Calm

Staying calm during a Databricks system design interview can feel tough. I remember my heart racing when I faced a tricky question. Over time, I learned that a steady mindset made all the difference. Here’s a table that helped me focus when things got intense:

Strategy | Description |

|---|---|

Stay Calm Under Pressure | These interviews are intense. If I got stuck, I returned to my framework and tackled one piece at a time. |

I also found these habits useful:

I sat with tough problems without panicking.

I explained my logic step by step, instead of jumping around.

I stayed consistent with my prep, even when distractions popped up.

I focused on showing structure, not perfection. Talking through my reasoning helped.

I reminded myself to be authentic. Clarity always beat cleverness.

Tip: When I felt stuck, I paused, took a breath, and broke the problem into smaller parts. That helped me regain control.

Common Pitfalls

I made my share of mistakes during prep and interviews. Here are some common pitfalls I noticed:

Rushing to answer without clarifying the problem.

Overcomplicating solutions instead of keeping them simple.

Using too much jargon or skipping steps in my explanation.

Ignoring business goals and focusing only on technical details.

Not managing my time, which led to unfinished answers.

Note: I learned that interviewers cared more about my approach and communication than about a perfect answer.

Continuous Growth

Improvement never stops, even after landing the job. I built a routine to keep growing:

I prepared in a structured way and tracked my progress.

I practiced with peers and asked for feedback.

I used online resources, blogs, and guides to learn new patterns.

I balanced my practice between coding, system design, and behavioral questions.

I sometimes joined bootcamps for extra guidance.

Growth comes from steady practice, honest feedback, and a willingness to learn from every experience.

I found that preparation, adaptability, and clear communication helped me succeed in the Databricks system design interview. I learned from my own journey and from others in the community. I focused on the basics and practiced sharing my ideas clearly.

Candidates preparing for system design interviews should focus on mastering the fundamentals, including understanding trade-offs, bottlenecks, and scalability. This foundational knowledge enables them to confidently tackle any system design problem.

If you want to join Databricks, keep learning and stay curious. You can do it—just take one step at a time!

FAQ

How did you practice system design for Databricks?

I used Google Docs to sketch diagrams and write out solutions. I joined mock interviews with friends. I focused on real Databricks scenarios. Practicing out loud helped me explain my ideas clearly.

What resources helped you the most?

I relied on Alex Xu’s book and Databricks interview guides. I joined online forums and read blog posts. Mock interviews and feedback from peers made a big difference.

How did you handle time pressure during interviews?

I set a timer while practicing. I broke problems into small steps. I used bullet points to organize my thoughts. If I got stuck, I moved on and circled back later.

What mistakes should I avoid in Databricks interviews?

Don’t rush your answers. Always clarify requirements first. Keep solutions simple. Avoid jargon. Focus on business impact, not just technical details.

Can I use diagrams in Google Docs during the interview?

Absolutely! I drew simple diagrams to show data flow and architecture. Diagrams helped me organize my ideas and made my answers easier to follow.

See Also

Navigating the Databricks New Graduate Interview Journey in 2025

My Comprehensive Approach to Dell Technologies Interview Questions in 2025

Insights from My Perplexity AI Interview Experience in 2025

Overview of My Oracle Software Engineer Interview Questions in 2025

Essential Guide to Succeeding in the Gemini ML Interview 2025