How I Nailed My Databricks Technical Interview In 2026

Based on my recent interview journey in Databricks , I've compiled the actual technical problems I encountered . Below, I'll share my notes on each stage, along with the specific questions asked, my solution approaches, and the key takeaways I gathered. Whether you're preparing for your own Databricks technical interview or just curious about their process, I hope this detailed recap proves helpful.

I’m really grateful to Linkjob.ai for helping me pass my interview, which is why I’m sharing my interview questions and experience here. Having an undetectable AI coding interview copilot during the interview indeed provides a significant edge.

Databricks Technical Interview Questions

Coding And Data Engineering Questions

When I sat down for the databricks technical interview, I expected a few coding questions. I got a lot more than that. The interviewers wanted to see how I handled real data problems. They asked me to write SQL queries, solve data structure puzzles, and talk through data engineering scenarios. Below are the problems I encountered:

Question 1: CIDR-Based Rule Matching System

Problem Description

Design an IP rule matching system that determines whether a given IP address should be ALLOW or DENY based on an ordered list of CIDR-based rules.

Rules Format:

Each rule is a tuple (action, rule_string) where:

action: Either"ALLOW"or"DENY"rule_string: A CIDR notation (e.g.,"192.168.100.5/30") or a plain IP address (e.g.,"1.2.3.4")

Rule List Example:

python

rules = [

("ALLOW", "192.168.100.5/30"),

("DENY", "123.456.789.100/3"),

("ALLOW", "1.2.3.4")

]Matching Logic:

Rules are processed in the order they appear in the list.

The first rule that matches the given IP determines the action.

If no rule matches, default to

DENY.A plain IP address (without mask) should be treated as

/32(exact match).

Examples:

IP

192.168.100.4→ Matches first rule → Returns"ALLOW"IP

123.456.789.100→ Matches second rule → Returns"DENY"IP

1.2.3.4→ Matches third rule → Returns"ALLOW"IP

10.0.0.1→ No match → Returns"DENY"

Key Edge Cases to Consider:

Handling invalid IPs or CIDR notations

Single IP without mask (e.g.,

"1.2.3.4")Overlapping rule ranges

Large number of rules (optimization may be needed)

Question 2: LazyArray Implementation (System Design & Testing Focus)

Problem Description

Implement a LazyArray class that simulates array operations with deferred execution. The primary focus is on API design, system behavior, and comprehensive testing rather than complex algorithms.

Requirements:

Design a clean, intuitive API for the

LazyArrayclassSupport basic operations (map, filter, reduce) with lazy evaluation

Operations should chain appropriately

Execution only happens when results are explicitly requested

Example Usage:

python

array = LazyArray([1, 2, 3, 4, 5])

result = (array

.filter(lambda x: x % 2 == 0)

.map(lambda x: x * 2)

.collect()) # Triggers execution

# result should be [4, 8]Evaluation Criteria:

Clean, intuitive API design

Proper handling of edge cases

Comprehensive test coverage

Clear documentation of behavior

Performance considerations

The interview focused heavily on coding and data engineering topics. I spent most of my time writing code and explaining my logic.

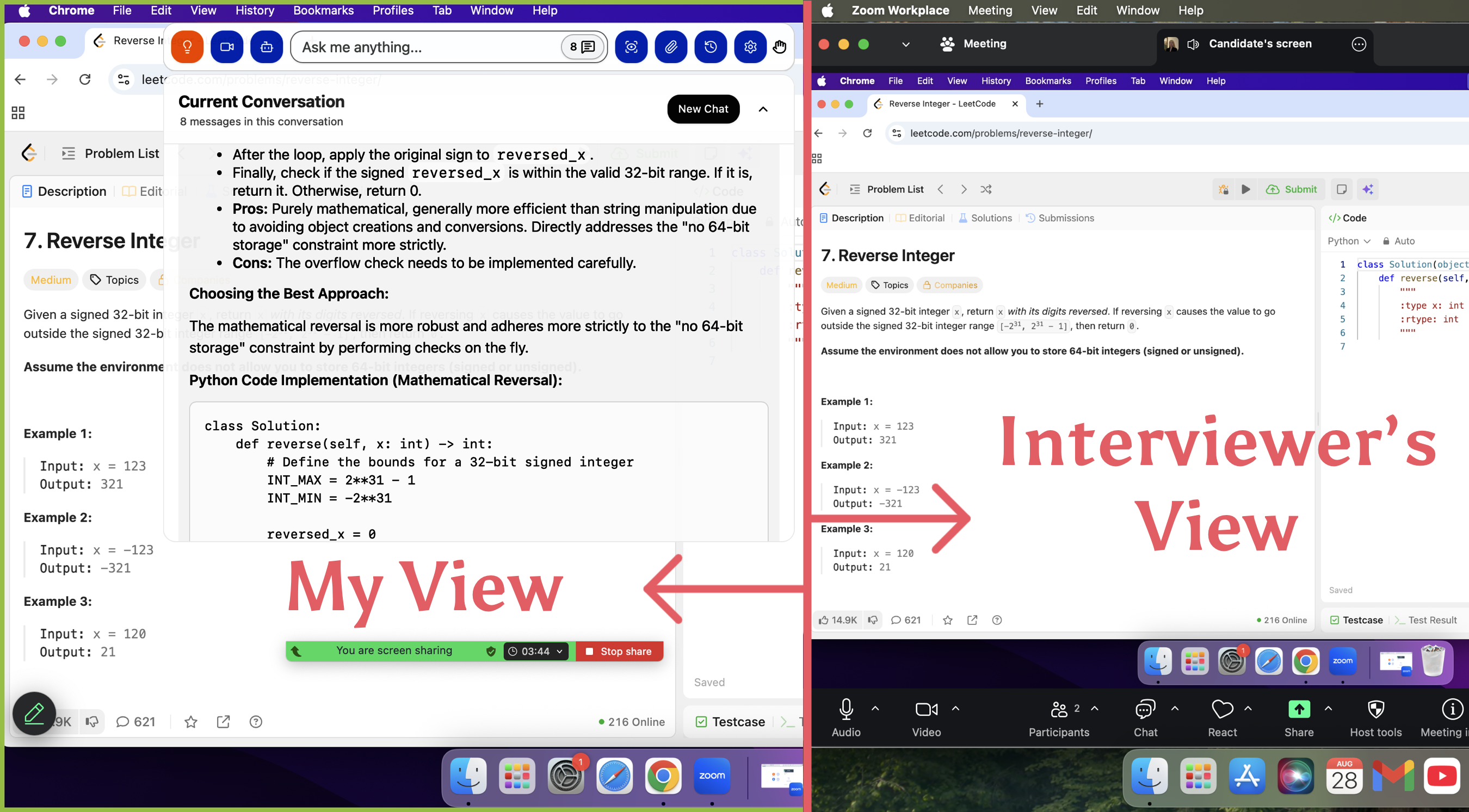

Tip: Practice SQL and data engineering problems before your interview. The questions can get tricky, especially when I need to optimize my code or explain my choices.Just in case, I used linkjob.ai during the interview process. This software is very useful, as it allows me to use its AI tool during the online interview without being detected by the interviewer. Even when sharing the screen, the interviewer cannot see the AI tool on my screen. Thanks to this tool, I successfully passed the online interview stage of Databricks.

This is completely invisible from the interviewer's perspective! I tested it with my friend before the interview, and she couldn't see the AI tool on my shared screen at all!

System Design And Spark Topics

The system design part of the databricks technical interview pushed me to think bigger. I had to design scalable systems and explain how I would handle huge amounts of data. The interviewers asked about Spark, ETL pipelines, and machine learning integration. They wanted to know if I understood both the technical details and the big picture.

Here’s what they focused on:

Functional and non-functional requirements for data platforms.

Data ingestion from multiple sources, including structured and unstructured data.

Building ETL pipelines for batch and streaming analytics.

Query optimization strategies for large datasets.

Integrating machine learning models into data workflows.

Data governance, including tracking data lineage and enforcing permissions.

Below are the problems I encountered:

Question 1: Group Chat System with Message Deletion

Problem Description

Design a group chat system that supports:

Basic group chat functionality (creating groups, sending messages, user management)

Special requirement: When a user deletes a conversation, it must be deleted for all users in the group (complete removal from everyone's view)

Key Design Challenges:

How to implement "delete for everyone" functionality

Data modeling for messages and conversations

Ensuring data consistency across all users

Handling concurrent deletions

Performance considerations for large groups

Example Scenarios:

User A sends message, User B deletes it → Both User A and User B should not see the message

User deletes entire conversation → All participants lose access to the conversation

New user joins group → Should not see previously deleted messages

Question 2: Distributed File System (GFS/S3-like) with Prefix Search

Problem Description

Design a distributed file system similar to Google File System (GFS) or Amazon S3, with special focus on efficient prefix search for file paths.

Core Requirements:

Basic file system operations (create, read, update, delete files)

Special requirement: Efficiently search files by path prefix (e.g., find all files under

/a/b/)Handle metadata operations at scale

Deep Dive on Prefix Search:

Initial Approach Discussion:

Using a prefix tree (trie) to index file paths

Storing metadata in a distributed key-value store

Interviewer's Challenge Question:

"What if there's a big hole? For example, a directory with millions of files:

/a/b/I.../a/b/I00000000. How would you handle this?"

What "Big Hole" Likely Means:

Hotspot issues in prefix trees

Performance degradation with deep or wide directory structures

Pagination challenges for massive directories

Storage and memory issues with large trie nodes

Question 3: Concurrent Log Writer

Problem Description

Implement a thread-safe log writer that can handle multiple threads writing logs concurrently to disk.

Requirements:

Multiple threads can write log entries simultaneously

Logs must be written to disk in a thread-safe manner

Handle race conditions properly

Ensure log ordering and consistency

Good performance under high concurrency

Expected Implementation:

Pseudo-code showing the concurrency control mechanism

Explanation of synchronization strategy

Handling of edge cases and failures

Key Challenges:

Thread Synchronization:

Mutex vs lock-free approaches

Minimizing contention between threads

Ensuring proper log ordering

Performance Considerations:

Reducing disk I/O contention

Batch writing for better throughput

Buffer management

Failure Handling:

Disk full scenarios

Thread crashes during write

Recovery mechanisms

Example Pseudo-code Structure:

python

class ThreadSafeLogWriter:

- Constructor(disk_writer, buffer_size)

- write_log(thread_id, message)

- flush_buffer()

- synchronization mechanismsNote: If you’re preparing for a databricks technical interview, make sure you understand Spark internals and system design basics. You’ll need to explain your ideas clearly and back them up with examples.

My Approach To Problem Solving

During the databricks technical interview, I realized that my approach mattered as much as my answers. I always started by breaking down the problem. I asked clarifying questions and made sure I understood the requirements. I wrote out my plan before jumping into code.

Here’s how I tackled each challenge:

I read the question carefully and repeated it back to the interviewer.

I listed possible solutions and explained the pros and cons.

I chose the best approach and wrote clean, readable code.

I tested my solution with sample data and checked for edge cases.

I explained my reasoning at every step.

If I got stuck, I didn’t panic. I talked through my thought process and asked for hints. The interviewers appreciated my transparency and willingness to learn. I learned that showing my work and communicating clearly made a big difference.

😊 If you’re preparing for a databricks technical interview, practice thinking out loud. Interviewers want to see how you solve problems, not just the final answer.

Databricks Technical Interview Process

Application And Recruiter Screen

I submitted my application online and waited for a recruiter to reach out. The recruiter screen felt more like a friendly chat than a test. The recruiter asked about my background, my interest in Databricks, and my experience with data engineering. I felt comfortable sharing my story. The recruiter explained the next steps and gave me a rough timeline. I learned that most candidates who reach this stage move forward, so I felt encouraged. The process here moved quickly, and I got an invitation for the technical phone interview within a week.

Tip: Be honest and clear about your experience. The recruiter wants to see if you’re a good fit for the company’s values and the role.

Technical Phone Interview

The technical phone interview was the first real test in the databricks technical interview process. The call lasted about an hour. The interviewer jumped straight into coding questions. I had to solve problems related to data structures and algorithms. For example, I got a question about converting IAP to CIDR and another about implementing tic-tac-toe. The interviewer also asked me to explain my thought process out loud.

I noticed that the databricks technical interview focused a lot on practical coding and system design. The interviewer wanted to see how I approached problems, not just if I got the right answer. Communication mattered as much as technical skill.

Hiring Manager Call

After passing the technical phone interview, I moved on to a call with the hiring manager. This conversation felt more personal. The manager asked about my past projects, how I handled challenges, and what I learned from mistakes. We discussed my experience with big data tools like Apache Spark and Hadoop. The manager wanted to know if I could design scalable data systems and troubleshoot performance issues. I also got questions about how I work with teams and explain technical ideas to non-technical people.

I realized that the databricks technical interview process values both technical depth and communication skills. The manager looked for people who could grow with the company and fit the culture.

Final Interview Stage

The final interview stage was the most intense part of the databricks technical interview. I joined a series of back-to-back interviews that lasted almost half a day. Each session focused on different areas:

The hiring manager interview tested both technical and cultural fit.

I answered behavioral questions and talked about my previous projects.

Scenario-based questions covered conflict resolution, leadership, and adapting to change.

The interviewers wanted to see if I aligned with Databricks’ values of ownership, innovation, and transparency.

I found the difficulty level high—probably a 4 out of 5 compared to other tech companies. The interviewers expected me to know SQL, Python, and big data technologies. They also wanted to see if I could design scalable systems and manage distributed processing. Communication skills played a big role, especially when I had to explain complex ideas in simple terms.

Note: The databricks technical interview usually has four to five rounds, with each round focusing on different skills like coding, system design, and behavioral questions.

Looking back, I saw that each stage built on the last. The process tested not just my technical knowledge but also my ability to learn, adapt, and communicate.

Databricks Technical Interview Insights From Other Candidates

Common Databricks Technical Interview Questions

I wanted to know what other candidates faced in their Databricks interviews. I found that most people got a mix of technical and behavioral questions. The technical part often included coding challenges, SQL queries, and system design problems. The behavioral questions focused on teamwork and handling conflict. Many candidates said the process felt thorough but fair.

Here’s a table that shows the typical stages and what each one covers:

Stage | Description |

|---|---|

Recruiter Call | Discuss background, technical skills, and motivation for joining Databricks. |

Technical / Phone Screen | Showcase problem-solving skills with LeetCode-style questions. |

Take-Home or OA | Complete tasks mirroring real Databricks challenges, such as SQL queries or data engineering cases. |

Onsite / Panel | Intensive interviews featuring technical and behavioral rounds with multiple interviewers. |

Hiring Committee | Holistic review of feedback, references, and overall candidate trajectory. |

Most candidates said they got questions like:

Write a SQL query to find users with more than one transaction.

Design a scalable data pipeline for streaming data.

Tell me about a time you resolved a conflict on your team.

Shared Experiences And Patterns

I noticed some clear patterns from reading candidate stories. The interview process usually lasted between two and five weeks. People described the technical assessment as rigorous. The behavioral interviews focused on teamwork and conflict resolution. Feedback came quickly, and most candidates felt satisfied with how transparent the process was.

Here are some common themes:

The technical rounds tested real-world data engineering skills.

Interviewers cared about how candidates explained their solutions.

Teamwork and communication mattered as much as technical ability.

“I appreciated how fast Databricks gave feedback. The interviewers wanted to know how I think, not just what I know.”

Community Tips For Databricks Technical Interview

I picked up some great advice from the Databricks interview community. Here are the top tips that helped others succeed:

Simplify complex concepts. Use visuals or analogies to explain technical ideas.

Refine SQL skills. Practice advanced joins and Delta Lake time-travel syntax.

Conduct mock interviews. Rehearse with a friend to build confidence.

Practice communication. Use the STAR method to share your experiences.

Try AI interview simulators. Get feedback and improve your answers.

If you follow these tips, you’ll feel more prepared and confident. I found that practicing with real problems and explaining my thought process made a big difference. 😊

Databricks technical Interview Preparation Tips

Best Resources For Databricks Technical Interview

When I started preparing for the databricks technical interview, I searched for the best resources. I wanted something that covered both technical and behavioral questions. Here are the top picks that helped me the most:

Top 30 Most Common Databricks Interview Questions: This list gave me a clear idea of what to expect. I practiced each question and felt more confident.

Databricks Interview Questions and Hiring Process Guide (2025): This guide explained the interview structure and shared tips for each stage. I learned how to approach case studies and behavioral rounds.

If you want to feel ready, start with these resources. They cover the basics and help you avoid surprises.

Effective Study Methods For Databricks Technical Interview

I tried different study methods before my interview. Some worked better than others. Here’s what helped me the most:

Hands-On Practice: I used the free Databricks community edition to build sample pipelines and optimize queries. Real practice made everything stick.

Master Databricks SQL and Spark Concepts: I focused on SQL analytics and Spark architecture. I solved problems and read documentation.

Use AI Interview Simulators: I tried Skillora.ai for mock interviews. The feedback helped me improve my answers.

Prepare for Scenario-Based Questions: I worked on real-world problems, like handling schema changes in Delta tables and designing streaming pipelines.

Practicing with real tools and scenarios made me feel ready for anything.

Mistakes To Avoid

I made a few mistakes during my prep. I want to help you avoid them:

Not changing the ownership of tables: I forgot this step and lost access during a demo.

Using too many nested queries: My code became hard to read and slow.

Not utilizing widgets: Hard-coding input parameters caused errors.

Writing comments as code-comments: My notebooks looked messy. Markdown cells made everything clearer.

Learn from my mistakes. Keep your code clean and flexible. Small details can make a big difference.

Lessons And Surprises From Databricks Technical Interview

I noticed that the interviewers sometimes switched up the format without warning. For example, I prepared for a coding round, but they started with a system design question instead. I had to think on my feet and adapt quickly. These surprises taught me to stay flexible and not get too attached to a specific plan.

My advice: Expect the unexpected. Stay calm, and remember that one awkward moment does not define your whole interview.If you are still concerned about this situation, you can use linkjob.AI. It allows you to seek help from AI to solve problems even when sharing your screen, and it won't be detected by the interviewer.

Key Takeaways For Candidates

Looking back, I picked up some valuable lessons that I want to share. Here are the top takeaways for anyone preparing for a Databricks interview:

Key Area | Description |

|---|---|

Coding Skills | Focus on fundamental data structures and algorithms, with an emphasis on problem-solving and optimizations. |

System Design | Practice structuring scalable systems and implementing designs quickly. |

Behavioral Questions | Use STAR-based stories to show teamwork and alignment with company values. |

Technical Screens | Prepare for deep dives into coding, system design, and large-scale data engineering. |

Virtual Onsite | Expect a mix of technical and behavioral interviews with different team members. |

I also learned a few things from successful candidates:

Focus on the basics. Know how to use Notebooks, Spark DataFrames, Delta Lake tables, and MLflow.

Use all the resources you can. Databricks Academy, Community Edition, and official docs helped me a lot.

Build real projects. Show what you can do with Databricks tools.

If you keep these lessons in mind, you’ll feel more confident and ready for whatever comes your way. Good luck! 🚀

Looking back, I learned that preparation goes beyond just coding. I would focus more on practicing hard LeetCode problems and building strong Spark jobs. I also suggest these steps for future candidates:

Share stories that show teamwork and learning.

Practice mock interviews and explain your thought process.

Study Databricks’ culture and leadership principles.

Prepare STAR stories for behavioral rounds.

Master Delta Lake and advanced SQL queries.

Stay curious and confident. You can do this!

FAQ

How technical is the Databricks interview?

I found the interview very technical. I had to solve coding problems, design systems, and answer questions about Spark and SQL. The team wanted to see how I think and solve real data problems.

Did I use Linkjob AI during the actual interview?

Yes! Linkjob AI gave me real-time support and personalized answers. It worked smoothly with Zoom. And it is completely invisible from the interviewer's perspective when sharing the screen, so with it, I can silently seek help from AI tools during online interviews. In short, it's a very useful tool.

What should I focus on when preparing?

I suggest focusing on SQL, Python, and Spark basics. Practice coding problems and system design. Review your past projects. Mock interviews helped me a lot. I also learned Databricks values clear communication.

How long does the whole process take?

For me, the process took about three weeks. Some people finish faster, while others wait longer. The timeline depends on your availability and the team’s schedule.

Do I need Databricks experience to pass?

No, you don’t need direct Databricks experience. I showed my skills with Spark, SQL, and data engineering. The team cared more about my problem-solving and learning ability.

What surprised me most about the interview?

I was surprised by the mix of technical and behavioral questions. The team wanted to know how I work with others, not just how I code. I had to share stories and explain my thinking.

See Also

Insights From My Databricks New Grad Interview Journey

How I Successfully Prepared For My Generative AI Interview

What I Encountered During My Perplexity AI Interview

Detailed Breakdown Of My Bloomberg New Grad Interview Steps

My Comprehensive Approach To Dell Technologies Interview Questions