How I Mastered Google OA Questions for a Successful Interview

(Google Online Assessment)

For many candidates, Google OA questions feel intimidating enough to stop them before they even begin. Tight time limits, unfamiliar platforms, and problems that look simple but hide tricky edge cases often lead to panic and rushed mistakes. I felt the same way at first.

But after going through the process—and studying real Google OA questions from recent years—I realized something important: Google OA is not designed to eliminate you randomly. It rewards candidates who understand recurring question patterns, manage time deliberately, and stay calm under pressure. This is where an advanced AI interview tool changed the game for me. By leveraging its powerful engine, I was able to generate high-scoring solutions in real-time, even for the most complex algorithmic challenges.

Once I learned how Google OA questions are structured and how this technology could instantly produce optimized code that passed all test cases with top marks, the assessment stopped feeling like a guessing game. The tool's ability to remain invisible to screen sharing and bypass active tab detection meant I could access these high-quality answers seamlessly without any risk. It became a solvable, repeatable challenge.

In this article, I’ll share how I mastered Google OA questions. If you’re preparing for Google OA, this guide is meant to help you approach the assessment with clarity, confidence, and a strategy that actually works.

Key Takeaways

Topic One: Common Google OA Questions

Topic Two: Google Online Assessment Overview

Topic Three: Preparation Strategies for Google OA

Topic Four: Lessons Learned From My Google OA Journey

Topic Five: Final Steps Before the Assessment

Topic One: Common Google OA Questions

Question 1: Coding and Algorithms (Core Focus of Google OA)

In the Google Online Assessment, coding and algorithms are the absolute core. Almost every OA is designed to test how quickly and accurately you can translate a problem statement into working code under strict time pressure.

From my experience, Google OA questions are highly pattern-driven. Arrays, strings, graphs, and greedy-style reasoning appear far more often than exotic algorithms. Many questions look simple on the surface, but hide constraints that punish hesitation or poor pattern recognition.

Example Google OA Coding Questions

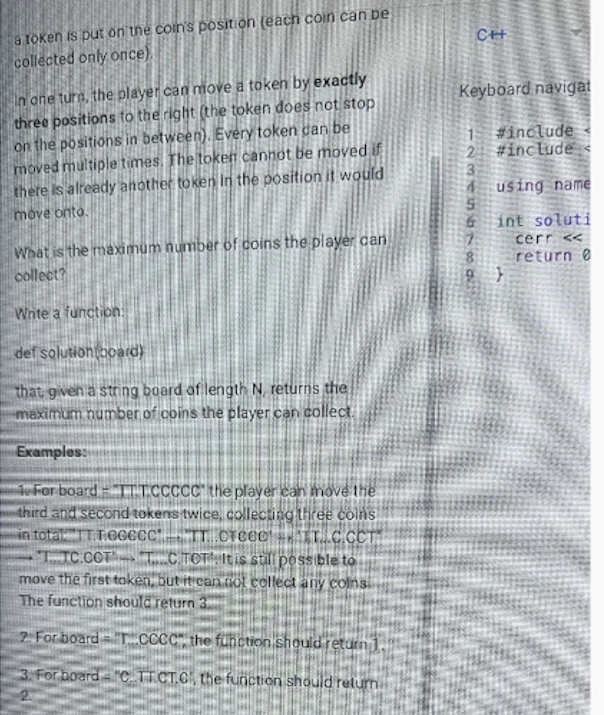

Question 1.1: Token Movement and Coin Collection (Greedy / State Modeling)

You are given a one-dimensional board with n positions. Each position may be:

empty (.)

contain a token (T)

contain a coin (C)

Rules:

Tokens can move any number of times.

Each move must be exactly 3 positions to the right.

A coin is collected when a token lands on its position (each coin only once).

A token cannot move into a position already occupied by another token.

Goal:

Determine the maximum number of coins that can be collected.

Why this is a classic Google OA question:

Looks simple but hides multiple constraints.

Requires state awareness, collision avoidance, and boundary handling.

Brute force fails quickly under time pressure.

Strong solutions rely on greedy reasoning + careful iteration, not heavy DP.

This is a typical Google OA pattern: the problem doesn’t say “dynamic programming,” but weak abstractions lead to exponential thinking.

Question 1.2: Digit-Based Relationship Grouping (Hashing / Frequency Analysis)

You are given a list of two-digit numbers.

Two numbers are considered related if they share at least one digit (e.g., 23 and 35 are related because both contain 3).

Goal:

Find the maximum number of elements in the list that share at least one common digit.

Why this tests core OA skills:

Naive pairwise comparison is too slow.

Efficient solutions reduce the problem to:

digit extraction

hash-based counting

grouping by digit frequency

Rewards candidates who think in abstractions, not comparisons.

This question strongly favors candidates who recognize:

“This is a counting problem, not a relationship graph.”

Question 1.3: Token Movement and Coin Collection (Greedy + State Modeling)

You are given a one-dimensional game board represented as a string containing three possible characters:

. empty cell

T a token

C a coin

The board length is at most 100.

Rules:

Each token can move only to the right, and exactly 3 positions per move

A token may move multiple times

A token cannot land on a cell already occupied by another token

If a token lands on a cell containing a coin, the coin is collected

Each coin can be collected only once

Coins passed over during movement do not count — only the landing cell matters

Goal: Compute the maximum number of coins that can be collected.

Why this is a classic Google OA question:

No advanced algorithms are required

The challenge lies in state tracking, greedy decision-making, and careful boundary handling

Brute-force simulations fail quickly under time pressure

Strong solutions model reachable states efficiently and avoid unnecessary backtracking

This question tests whether you can:

Translate movement constraints into valid state transitions

Avoid illegal states (token collisions)

Optimize for maximum reward under strict rules
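The state-transition idea above can be sketched as follows. This is a minimal sketch, not an official solution. It assumes a jump only interacts with its landing cell, which is how I read the rule that passed-over coins do not count. Under that reading, cells i, i+3, i+6, ... form three independent lanes. Within a lane a token can keep stepping right until it meets another token, so a coin is collectible exactly when some token sits to its left in the same lane. The name `max_coins` is hypothetical.

```python
def max_coins(board: str) -> int:
    """Count coins that have a token to their left in the same mod-3 lane.

    Assumption: a move of exactly 3 cells only touches its landing cell,
    so positions with the same index modulo 3 never affect other lanes.
    """
    collected = 0
    for lane in range(3):
        token_seen = False
        # Walk the lane left to right: lane, lane + 3, lane + 6, ...
        for i in range(lane, len(board), 3):
            cell = board[i]
            if cell == "T":
                token_seen = True
            elif cell == "C" and token_seen:
                # The nearest token to the left reaches this coin without
                # ever landing on another token's cell.
                collected += 1
    return collected


if __name__ == "__main__":
    print(max_coins("T..C"))     # coin is 3 cells right of the token
    print(max_coins("TC.."))     # coin is in a different lane
```

The single pass per lane keeps the work linear in the board length, which is comfortably within the stated limit of 100 cells.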


Question 1.4: Largest Group of Related Two-Digit Numbers (Hashing + Counting)

You are given an array of two-digit integers (length ≤ 100).

Two numbers are considered related if they share at least one digit.

Examples:

55, 58, 25, 45 → related (all share digit 5)

55, 66, 77 → not related

Goal: Determine the maximum size of a group where all numbers share at least one common digit.

Why this belongs to Coding & Algorithms:

The problem rewards hashing and frequency analysis

Inefficient pairwise comparison solutions waste time

Strong candidates reduce the problem to digit-based grouping

Key signals Google is testing:

Can you identify the correct abstraction quickly?

Do you model the problem as digit → count, instead of number → number comparison?

Can you implement a clean, efficient solution without overthinking?
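The digit → count model can be sketched in a few lines. This is one possible implementation under the problem statement as given here. The function name `largest_related_group` is hypothetical. It counts, for each digit, how many numbers contain that digit, and returns the largest count.

```python
from collections import Counter


def largest_related_group(numbers: list[int]) -> int:
    """Return the size of the largest group sharing one common digit.

    Assumes every entry is a two-digit integer, as in the problem text.
    """
    digit_counts = Counter()
    for n in numbers:
        # divmod(n, 10) yields (tens digit, ones digit); the set ensures a
        # number such as 55 is counted once for digit 5, not twice.
        for digit in set(divmod(n, 10)):
            digit_counts[digit] += 1
    return max(digit_counts.values(), default=0)


if __name__ == "__main__":
    print(largest_related_group([55, 58, 25, 45]))
    print(largest_related_group([55, 66, 77]))
```

Because there are only ten possible digits, the counter stays tiny and the whole solution is a single pass over the input, with no pairwise comparison.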

Question 2: Data Structures and System Fundamentals

Beyond pure algorithms, Google OA questions often evaluate whether you understand which data structure fits which problem. Many questions look simple at first but require selecting the correct structure—such as a hash map instead of an array, or a queue instead of recursion—to pass all edge cases.
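As one illustration of choosing a queue instead of recursion, here is a sketch of breadth-first search on a small grid. The grid encoding (`#` for walls, `.` for open cells) and the function name `shortest_path_length` are assumptions made for this example, not taken from a specific OA question.

```python
from collections import deque


def shortest_path_length(grid: list[str]) -> int:
    """Fewest steps from the top-left to the bottom-right cell, or -1.

    Illustrative encoding: '#' is a wall, anything else is walkable.
    """
    if not grid or not grid[0] or grid[0][0] == "#":
        return -1
    rows, cols = len(grid), len(grid[0])
    queue = deque([(0, 0, 0)])  # (row, col, steps taken so far)
    visited = {(0, 0)}
    while queue:
        r, c, steps = queue.popleft()
        if (r, c) == (rows - 1, cols - 1):
            return steps
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (
                0 <= nr < rows
                and 0 <= nc < cols
                and grid[nr][nc] != "#"
                and (nr, nc) not in visited
            ):
                # Mark on enqueue so each cell enters the queue once.
                visited.add((nr, nc))
                queue.append((nr, nc, steps + 1))
    return -1


if __name__ == "__main__":
    print(shortest_path_length(["...", "##.", "..."]))
```

The explicit queue avoids recursion-depth limits on large inputs and visits cells in order of distance, so the first time the target is dequeued its step count is minimal.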

Common data-structure-focused themes include:

Graphs and trees: reasoning about nodes, edges, and traversal paths

Arrays and strings: hashing, indexing, and window-based techniques

Dynamic programming: splitting problems into overlapping subproblems

In some OA versions, you may also see basic system design or system knowledge questions, such as:

Web protocols and APIs

Reliability and availability concepts

SQL vs NoSQL trade-offs

Database sharding and replication

Caching, encryption, and cloud fundamentals

These questions don’t require full system design diagrams. Instead, they test whether you understand how real systems scale and fail.

Question 3: Behavioral and Problem-Solving Mindset

Some Google OA versions include short behavioral or reasoning questions. These are designed to evaluate Googleyness—how you think, collaborate, and respond to challenges.

Typical behavioral themes include:

| Competency | What Google Evaluates |
|---|---|
| Problem-solving | How you approach unfamiliar or difficult problems |
| Collaboration | How you work with teammates and handle disagreement |
| Leadership | Taking initiative and helping others succeed |
| Googleyness | Curiosity, humility, and a growth mindset |

Common prompts include:

Tell me about a time you solved a hard problem

Describe a mistake and what you learned

Explain how you handled a conflict with a teammate

Why do you want to work at Google?

Google uses these questions to assess long-term potential, not rehearsed answers.

Final Note

Google OA is not about memorizing solutions—it’s about pattern recognition, data structure choice, and calm execution under pressure. If you structure your preparation around these question types, the OA becomes predictable rather than intimidating.

Topic Two: Google Online Assessment Overview

The Google Online Assessment (OA) is the first real technical filter in Google’s hiring process. It tests problem-solving speed, coding fundamentals, and time management under pressure.

OA Format And Structure

Before taking my first Google Online Assessment, I realized one thing quickly: understanding the format is half the battle.

The Google OA is a timed, remote coding assessment. Most candidates receive 1–2 algorithmic coding questions, with a total time limit of 60–90 minutes, depending on role and region. You’re free to use your strongest language—typically Python, Java, C++, or Go—inside Google’s online coding environment.

Here’s a concise breakdown of the typical Google OA setup:

| Aspect | Details |
|---|---|
| Number of Questions | 1–2 coding problems |
| Time Limit | 60–90 minutes |
| Supported Languages | Python, Java, C++, Go |
| Environment | Online IDE with syntax highlighting |
| Monitoring | Often proctored (screen + webcam) |

The questions themselves usually fall into three categories:

Algorithmic coding problems (arrays, strings, graphs, DP)

Occasional short-answer or logic questions

Debugging tasks where you fix broken code

While the structure looks simple, the difficulty is not. Google intentionally designs OA questions to reward clean thinking and penalize hesitation. That’s why timed practice matters as much as correctness.

Role in the Google New Grad Interview Process

The Google Online Assessment is not a formality—it’s the gatekeeper.

For new grads and interns, the OA sits right after résumé screening and before any live technical interview. If you don’t pass the OA, you won’t move on to phone screens or onsite rounds.

What Google evaluates here isn’t memorization. Instead, the OA measures:

Whether you can reason clearly under time pressure

How efficiently you translate ideas into working code

Whether your fundamentals are strong enough for deeper interviews

A strong OA performance signals that you’re ready for more complex, open-ended problems later in the process.

Tip: Treat every Google Online Assessment like a real interview. Practice with real OA-style questions, simulate timing, and get comfortable coding directly in an online IDE.

Topic Three: Preparation Strategies for Google OA

1. Prepare for Google OA as a Time-Allocation Problem, Not a Knowledge Problem

Most candidates fail the Google OA not because they don’t know algorithms, but because they misallocate time under pressure. The real challenge of the OA is solving just enough problems fast enough with clean, bug-free code. That’s why preparation should focus less on “learning more topics” and more on training execution speed and decision-making.

In practice, this means you must internalize a small set of high-frequency patterns—two pointers, sliding window, prefix sums, BFS/DFS, hash-based counting, and basic DP—and reach a point where you can recognize them within seconds. If you need more than 2–3 minutes to decide the approach, you are already behind. During preparation, I deliberately practiced abandoning overcomplicated ideas early and committing to the first correct O(n) or O(n log n) solution. Google does not reward cleverness in OA; it rewards clarity, correctness, and completion.
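To show what "recognizing a pattern within seconds" buys you, here is a minimal sliding-window sketch. The task it solves (length of the longest run of characters with no repeats) is a common practice problem chosen for illustration, not a confirmed OA question, and `longest_unique_run` is a hypothetical name.

```python
def longest_unique_run(s: str) -> int:
    """Length of the longest substring of s with no repeated character."""
    last_seen = {}  # character -> index of its most recent occurrence
    start = 0       # left edge of the current window
    best = 0
    for i, ch in enumerate(s):
        # If ch already appears inside the window, move the left edge
        # just past that earlier occurrence.
        if ch in last_seen and last_seen[ch] >= start:
            start = last_seen[ch] + 1
        last_seen[ch] = i
        best = max(best, i - start + 1)
    return best


if __name__ == "__main__":
    print(longest_unique_run("abcabcbb"))
```

The window only ever moves forward, so the loop is O(n). That is the kind of first correct linear solution worth committing to rather than polishing further.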

Equally important is practicing in a low-comfort environment. Google OA platforms often feel restrictive compared to a full IDE, and that friction alone can cost you 10–15 minutes. I trained myself to write code with minimal autocomplete, test mentally, and move on. By the time I took the real OA, the environment felt familiar rather than stressful.

2. Simulate Real OA Pressure and Optimize for Passing, Not Perfection

High-quality preparation requires realistic simulation. Untimed practice creates a false sense of confidence. Instead, I structured my prep around strict OA-style sessions: 60–90 minutes, two problems, no notes, no interruptions. The goal wasn’t to get every solution perfect; it was to finish at least one problem cleanly and make meaningful progress on the second.

After each mock OA, I didn’t just check correctness. I reviewed where time leaked: Was I stuck debugging edge cases? Did I hesitate too long choosing an approach? Did I write extra code that wasn’t needed? Over time, this review process refined my instincts. I learned when to stop optimizing, when to hardcode a constraint, and when to move on. This judgment is exactly what Google uses the OA to test.

Finally, strong candidates treat preparation as pattern compression, not repetition. Every solved problem should reduce future thinking time. I kept a short “mistake log” of patterns I missed, edge cases I forgot, and signals I should have noticed earlier. By the end of preparation, many OA questions felt structurally familiar, even if the surface story was new. That’s when you know you’re ready—not when you’ve solved hundreds of problems, but when new ones stop feeling new.

Topic Four: Lessons Learned From My Google OA Journey

1. Pressure Exposes Gaps Faster Than Practice Ever Will

The Google Online Assessment was the first time I truly felt how pressure changes the way you think. In practice, I often felt confident—until a real timer started counting down. I remember staring at a dynamic programming problem with plenty of time left, yet making no real progress. As the clock ticked closer to zero, my focus shifted from solving the problem to fearing the deadline. That panic didn’t come from the problem itself, but from untested weaknesses in my preparation.

What I learned is simple but uncomfortable: pressure doesn’t create problems, it reveals them. Timed practice matters far more than solving questions casually. Once I started practicing with strict timers and simulating real OA conditions, my mindset changed. I learned when to stop over-optimizing, when to settle for a correct solution, and how to stay calm even when an answer wasn’t perfect. Google OA isn’t just testing code—it’s testing composure.

2. Motivation Isn’t Constant—Systems Beat Willpower

Staying motivated throughout the Google new grad interview journey was harder than any single assessment. Rejections piled up, confidence dipped, and it was tempting to slow down or avoid difficult topics altogether. What kept me going wasn’t blind optimism, but structure.

I reviewed past OAs and interviews to pinpoint patterns in my mistakes. I practiced behavioral questions intentionally instead of treating them as an afterthought. Mock assessments became routine, not occasional. More importantly, I actively sought feedback—from coaches, peers, and interviewers whenever possible. These external checkpoints kept me accountable even when internal motivation was low.

The biggest shift was realizing that motivation follows progress, not the other way around. By building small, repeatable systems—practice schedules, review habits, mock interviews—I stayed engaged even on days when confidence was missing.

3. Adaptation Is the Real Signal Google Looks For

One of the most important lessons from my Google OA experience was learning how to adapt after failure. Early on, I relied heavily on peer practice and generic prep advice. Over time, I realized that expert feedback made a disproportionate difference. Coaches helped me see blind spots I couldn’t detect on my own, especially in how Google evaluates problem-solving and communication.

After each OA or interview, I forced myself to reflect honestly: What failed? Was it technical depth, speed, clarity, or decision-making? Then I adjusted my preparation—sometimes shifting focus from algorithms to behavioral stories, other times doubling down on fundamentals I thought I “already knew.”

Google doesn’t expect perfection. It expects growth. Each iteration of my preparation became more targeted, more realistic, and more aligned with how Google actually interviews. That ability to course-correct—quickly and deliberately—turned repeated rejections into eventual breakthroughs.

Final thought:

The Google OA journey isn’t about being naturally good at coding. It’s about learning how to stay calm under pressure, build systems that carry you through low-motivation phases, and adapt faster than your last failure. Once I understood that, the process stopped feeling random—and started feeling winnable.

Topic Five: Final Steps Before the Assessment

1. Confidence Comes From Familiarity, Not Talent

What helped me most wasn’t knowing more algorithms—it was knowing exactly what the Google OA feels like. Once I understood the format, time pressure, and question style, my anxiety dropped sharply. Practicing under real conditions (quiet room, strict timer, no distractions) trained my brain to stay calm. Confidence came naturally when the environment stopped feeling unfamiliar.

The biggest mindset shift: “I’ve seen this before.”

2. Time Pressure Is the Real Enemy—Train for It

Most Google OA failures aren’t caused by lack of skill, but by poor time management. I learned to prioritize correctness over speed, avoid over-optimizing too early, and always leave buffer time to recheck edge cases. Practicing with timers taught me when to move on and when to commit—this mattered far more than solving harder problems.

Under pressure, discipline beats brilliance.

3. Reflection Turns Every Attempt Into Progress

After each practice or OA attempt, I wrote down what went wrong: patterns I missed, mistakes I repeated, or moments I panicked. This simple habit made every failure useful. Over time, my weak points became predictable—and fixable. Google OA stopped feeling like a lottery and started feeling like a process.

Improvement didn’t come from doing more questions, but from learning more from each one.

FAQ

1. How should I prioritize topics when preparing for Google OA questions?

Getting stuck is a common fear, and the ticking clock only adds to the panic. My strategy was to never let a mental block derail the entire assessment. I used an advanced AI-powered assistant specifically designed for high-stakes technical interviews.

When a problem felt overwhelming, this tool would provide real-time solution reasoning and optimized code that passed all test cases instantly. Because it is an independent desktop application, it bypassed active tab detection and remained completely invisible to screen sharing, allowing me to get the support I needed without any risk of detection. It turned a high-pressure situation into a controlled, manageable process, ensuring I secured a top score even on the trickiest questions.

2. What’s the best way to stay calm when solving Google OA questions under time pressure?

The key to staying calm during Google OA questions is making time pressure feel familiar. I practiced with a strict timer every session, using the same 60–90 minute window as the real Google online assessment. Over time, the stress stopped feeling “special.” I also trained myself to pause briefly before coding, confirm the approach, and move forward decisively. Calm execution matters more than perfect solutions.

3. How can I improve my speed on Google OA coding questions?

Speed on Google OA questions comes from pattern recognition, not typing faster. I focused on mastering a small set of reusable patterns: two pointers, sliding window, BFS/DFS, and hash-based counting. I also reviewed accepted solutions to see how others simplified their logic. Mock OAs helped me identify where I wasted time, especially on unnecessary optimizations or on chasing edge cases too early.

4. Are Google OA questions more about algorithms or data structures?

Google OA questions test both, but data structure choice often determines whether your algorithm passes all test cases. Many OA problems are straightforward once you pick the right structure—using a hash map instead of an array, or a queue instead of recursion. Google cares less about exotic algorithms and more about whether you can match the problem to the correct data structure under pressure.

5. Is practicing with real Google OA questions better than generic LeetCode practice?

Yes. Practicing with real Google OA questions or Google-style mock assessments makes a big difference. Generic LeetCode practice builds fundamentals, but Google OA questions have specific pacing, input/output styles, and edge-case traps. Practicing in an OA-like environment trains both your technical skills and your delivery—helping you pass the assessment, not just solve problems in theory.
