How I Cracked the Microsoft AI Engineer Interview (2026)

Just signed my offer for the Microsoft AI Engineer role. The interview loop was heavy on complex algo implementation. To manage the complexity, I actually used a real-time coding interview assistant, Linkjob AI, to help me prep and unblock during live coding. Because this approach was so effective, I was invited to share my experience here.

Here’s a full breakdown of the process and my strategy.

MS AI Engineer Interview: Application and recruiter screen

The first step began with a recruiter reaching out after I applied online. I learned early on that Microsoft uses AI systems to filter resumes, so I kept my formatting simple and keyword-rich (e.g., LLM, RAG, Azure ML, PyTorch). The recruiter asked about my background and why I wanted the role. I kept my answers focused and specific.

Common pitfalls: Listing duties instead of results. Make sure to quantify your achievements.

MS AI Engineer Interview: Online assessment (OA)

After the screen, I received a link to an online assessment. It tested practical cloud and AI knowledge.

The questions covered a wide range of topics. Here’s a quick look at what I faced:

| Topic | Description |
|---|---|
| Generative AI | Implementing generative AI solutions using Azure services. |
| Computer Vision | Applying computer vision technologies in real-world AI applications. |
| Natural Language Processing | Using NLP techniques for AI projects. |
| Responsible AI Practices | Designing solutions with ethics and responsibility in mind. |
| Azure Machine Learning | Integrating Azure Machine Learning into AI solutions. |
| Cognitive Services | Building intelligent apps with Azure Cognitive Services. |
| Azure OpenAI | Using Azure OpenAI in projects. |
| Azure Bot Service | Creating conversational AI with Azure Bot Service. |

My Strategy: I picked the right answer and used the "comment box" to explain why I chose a specific Azure service over another.

Microsoft wants clean, production-ready code.

A hard one really tested my math skills:

The "Digit Sum" Combinatorics Problem: The interviewer gave me an array of integers, e.g., [30, 31, 31]. I had to return the total number of arrays possible where every element has the same digit sum as the corresponding element in the input array. (e.g., for input 30, 4998 is valid because 4+9+9+8=30. Now calculate the combinations for the whole array).

How I used Linkjob AI here: This was a tricky Digit DP (Dynamic Programming) problem that wasn't on standard study lists. I used Linkjob AI to quickly verify the combinatorics logic. It suggested a recursive approach with memoization to count the digit sums efficiently. Having that logic check in real-time stopped me from going down the wrong rabbit hole and helped me write the clean DP solution within 15 minutes.
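To make the recursive-with-memoization idea concrete, here is a minimal sketch under two loudly stated assumptions. First, following the 4998 example, each input value is treated as the target digit sum. Second, without a size bound the count is infinite (4998, 49980, 499800, ... all sum to 30), so the sketch takes a hypothetical `max_digits` parameter that caps how many digits each element may have. The function names are illustrative, not from the original problem statement.

```python
from functools import lru_cache


def count_with_digit_sum(target: int, max_digits: int) -> int:
    """Count integers in [0, 10**max_digits) whose digits sum to `target`.

    Numbers are treated as fixed-width digit strings with leading zeros,
    so every integer below 10**max_digits is counted exactly once.
    """

    @lru_cache(maxsize=None)
    def ways(positions_left: int, remaining: int) -> int:
        if remaining < 0:
            return 0  # overshot the target
        if positions_left == 0:
            return 1 if remaining == 0 else 0
        # Try every digit 0-9 in the current position.
        return sum(ways(positions_left - 1, remaining - d) for d in range(10))

    return ways(max_digits, target)


def count_arrays(targets, max_digits: int) -> int:
    """Elements are independent, so the per-element counts multiply."""
    total = 1
    for t in targets:
        total *= count_with_digit_sum(t, max_digits)
    return total


print(count_arrays([30, 31, 31], max_digits=4))  # 84 * 56 * 56 = 263424
```

The memo table has at most `max_digits * (target + 1)` reachable states, each doing ten lookups, so the per-element cost stays small even for long numbers. If the real problem bounds elements by value rather than by digit count, the recursion would need an extra "tight" flag, which this sketch leaves out.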

MS AI Engineer Interview: Technical interviews

Here is the exact breakdown of my onsite loop. It was a mix of standard coding, deep system design, and a very intense behavioral round.

Round 1: Coding

The Question: LeetCode 56 - Merge Intervals (Original).

Task: Given an array of intervals, merge all overlapping intervals.

My Experience:

Even though I knew the logic (sort by start time), handling the lambda sort syntax and the result array in Python can sometimes lead to typos.
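For reference, a minimal sketch of the usual sort-then-sweep approach, including the lambda sort key mentioned above. The function name `merge_intervals` is illustrative, and the sketch assumes each interval is a two-element `[start, end]` list with `start <= end`.

```python
def merge_intervals(intervals):
    """Merge overlapping [start, end] intervals; returns a new list."""
    merged = []
    # Sorting by start time means each interval can only overlap
    # the most recently merged one.
    for start, end in sorted(intervals, key=lambda iv: iv[0]):
        if merged and start <= merged[-1][1]:
            # Overlap (or touching endpoints): extend the last interval.
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([start, end])
    return merged


print(merge_intervals([[1, 3], [2, 6], [8, 10], [15, 18]]))
# [[1, 6], [8, 10], [15, 18]]
```

Building fresh `[start, end]` lists in the result keeps the caller's input untouched, which is an easy thing to get wrong when appending the original interval objects directly.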

Round 2: Coding + Follow-up

The Question: LeetCode 235 - Lowest Common Ancestor (LCA) of a Binary Search Tree.

Task: Find the LCA of two nodes in a BST.

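Solution: Since it's a BST, you can just use the property $(val - p.val) * (val - q.val) > 0$ to traverse. While the product is positive, both targets lie on the same side of the current node, so you move to that child. As soon as it is zero or negative, the current node is the LCA.

The Follow-up: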
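A minimal sketch of that iterative traversal, assuming both nodes are present in the tree. The `TreeNode` class and the name `lca_bst` are illustrative.

```python
class TreeNode:
    def __init__(self, val, left=None, right=None):
        self.val = val
        self.left = left
        self.right = right


def lca_bst(root, p, q):
    """Iteratively find the lowest common ancestor of p and q in a BST."""
    node = root
    while node:
        if (node.val - p.val) * (node.val - q.val) > 0:
            # Positive product: p and q are on the same side of node.
            node = node.left if p.val < node.val else node.right
        else:
            # Zero or negative: node splits p and q, or equals one of them.
            return node
    return None
```

This runs in O(h) time for a tree of height h and uses no extra stack, which is the main advantage over a recursive version.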

"What if the tree is NOT a BST? Just a regular Binary Tree?"

Context: This effectively turns it into LeetCode 236.

The jump from Iterative (BST) to Recursive (General Tree) requires a quick mental switch. Linkjob AI's real-time hint feature confirmed the recursive structure for the general tree immediately, preventing me from getting stuck in the previous logic. I implemented the recursive solution smoothly.
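For comparison, a minimal sketch of the standard recursive solution for a general binary tree (the LeetCode 236 variant), again assuming both nodes exist in the tree. `TreeNode` and `lca_binary_tree` are illustrative names.

```python
class TreeNode:
    def __init__(self, val, left=None, right=None):
        self.val = val
        self.left = left
        self.right = right


def lca_binary_tree(root, p, q):
    """Lowest common ancestor in a binary tree with no ordering property."""
    # Base case: ran off the tree, or found one of the targets.
    if root is None or root is p or root is q:
        return root
    left = lca_binary_tree(root.left, p, q)
    right = lca_binary_tree(root.right, p, q)
    if left is not None and right is not None:
        # One target in each subtree, so root is where the paths split.
        return root
    # Otherwise pass up whichever side found something (or None).
    return left if left is not None else right
```

Without the BST ordering there is no way to pick a direction, so every node may be visited once: O(n) time and O(h) recursion depth.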

Round 3: System Design

This stage tested my ability to design large AI systems and solve real-world problems.

The Prompt: Design a Recommendation System for User's Local Sports Teams.

Key Discussions:

Architecture Decisions: We debated RAG vs. Fine-tuning for specific retrieval sub-tasks. I argued that for user history, lightweight fine-tuning of a ranking model is better than RAG.

Scaling: How to serve billion-parameter models? I discussed quantization and model distillation to optimize for low latency.

Evaluation: A huge topic was Hallucinations. I proposed a "Red Teaming" layer in the pipeline to evaluate LLM outputs before they reach the user.
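To illustrate the quantization idea from the scaling discussion, here is a toy sketch of symmetric int8 quantization in plain Python. It is only meant to show the mechanics (one shared scale, values rounded into [-127, 127]); production systems use per-channel scales and framework tooling, and the function names here are illustrative.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


weights = [0.8, -1.0, 0.03, 0.41]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each restored weight is within half a quantization step of the original.
print(max(abs(w - r) for w, r in zip(weights, restored)) <= scale / 2 + 1e-12)
```

Storing one byte per weight instead of four is where the memory and bandwidth savings come from; the price is the rounding error bounded above.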

My Strategy: I explicitly discussed the trade-offs between "Strong Consistency" vs. "Eventual Consistency" in the context of a feed. I argued that for a RecSys, we don't need strong consistency (it's okay if a new video appears 5 minutes late), which allows for better availability.

Here’s a snapshot of the topics:

| Topic/Area | Description |
|---|---|
| Architecture decisions | RAG, fine-tuning, multi-modal systems |
| Scaling strategies | Handling billion-parameter models |
| Optimization techniques | Improving latency, cost, and performance |
| Evaluation frameworks | LLM output evaluation and hallucination fixes |
| Deployment patterns | Industry-specific deployment strategies |

Round 4: The Hiring Manager & Behavioral

This round was split into two parts: deep philosophical discussions about AI and standard behavioral questions.

They wanted to see if I could handle pressure and work in a team. I stuck strictly to the SAR (Situation, Action, Result) format.

The Question: "Tell me about a time you prioritized the customer over technical perfection."

My Story: I talked about a time I shipped a 'good enough' feature to unblock a client, rather than waiting 2 weeks for a perfect refactor.

The Question: "Describe a time you failed."

My Story: I shared a real story about a failed deployment that caused downtime. I focused on how I led the rollback and the subsequent post-mortem analysis to prevent it from happening again.

The Question: "How do you evaluate the current state of LLM applications? Where do you see the real value vs. the hype?"

My Story: I argued that we are moving away from generic "Chatbots" toward specialized, context-aware agents.

Here’s what they looked for:

| Competency | Description |
|---|---|
| Problem-solving and Decision-Making | Tackling complex problems and making smart choices. |
| Teamwork and Collaboration | Working with diverse teams and resolving conflicts. |
| Leadership and Influence | Inspiring others and driving innovation. |
| Adaptability and Resilience | Handling change and bouncing back from setbacks. |
| Customer Obsession and Impact | Focusing on customer needs and delivering results. |

I made sure to share real stories from my experience. I showed how I learned from mistakes and grew as an engineer.

Tip: The Microsoft AI engineer interview process checks both your technical skills and your ability to think strategically. Be ready to explain your choices and reflect on your experiences.

MS AI Engineer Interview: Preparation Strategies

Study Resources:

LeetCode/HackerRank: For the basics.

Microsoft Learn: For Azure specific terminology.

Exponent (YouTube): Great for system design frameworks.

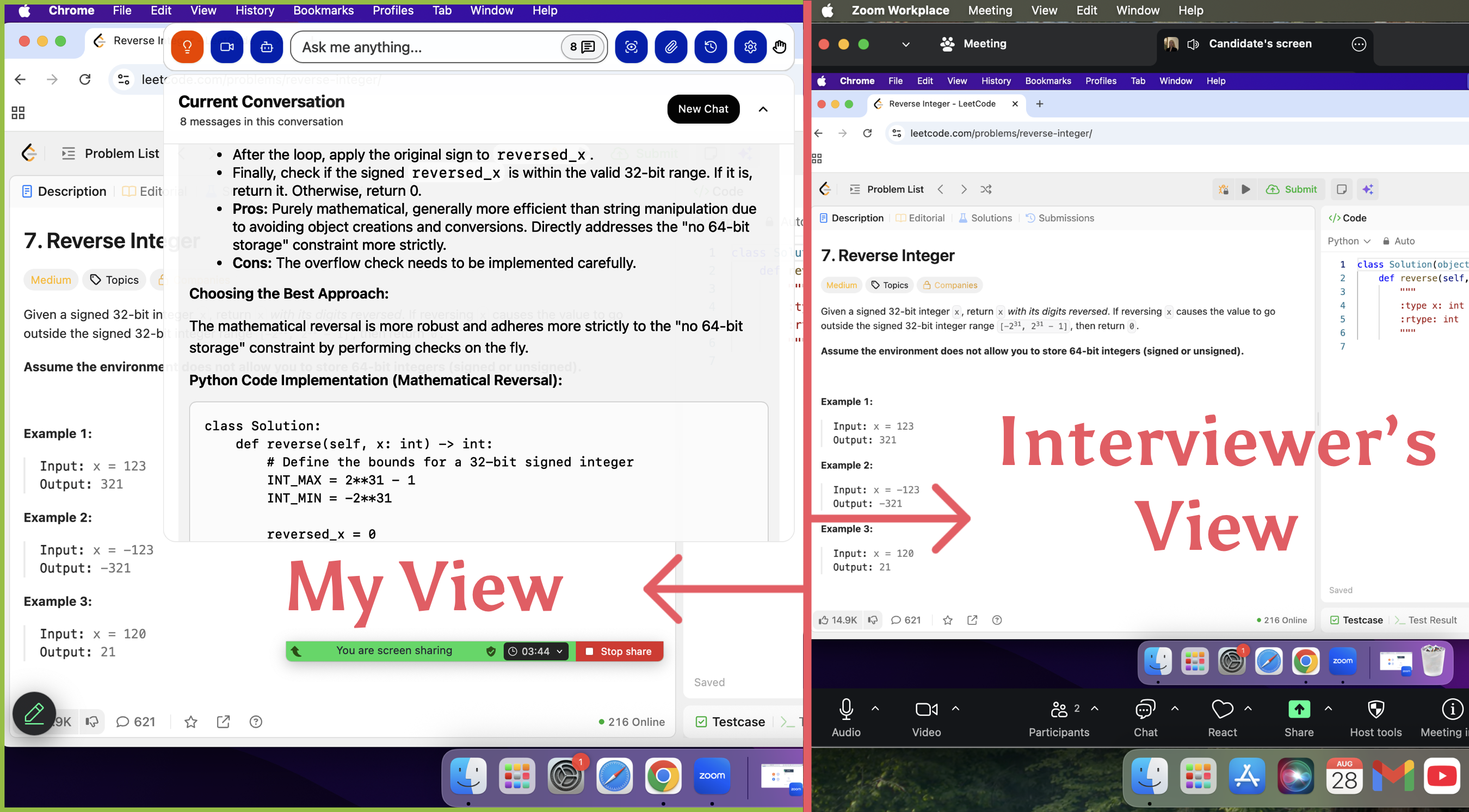

Linkjob AI: I needed live support. I used Linkjob AI as my interview "copilot": it listened to the context in real time and suggested code snippets or logic paths the moment I got stuck.

Below, I’m sharing the preparation I did beforehand, distilled into general strategies that can help anyone, no matter what role you are targeting.

General strategies

Interview questions and topics

When I prepared for the Microsoft AI engineer interview, I noticed that the questions fell into a few main categories. Let me break them down and share what I actually faced.

Coding and algorithms

Coding and algorithms questions tested my core computer science skills. The interviewers wanted to see if I could solve problems efficiently and explain my thought process. Here are some common types of questions I encountered:

Check if a Binary Tree is BST or not

Remove duplicates from a string, do it in-place

Given a rotated array which is sorted, search for an element in it

Write a function that adds two big numbers represented by linked lists

Print last 10 lines of a big file or big string

Clone a linked list with next and arbitrary pointer

Connect nodes at the same level

Find the least common ancestor of a binary tree or a binary search tree

Detect cycle in a linked list

Validate a given IP address

Two of the nodes of a BST are swapped. Correct the BST

I always explained my choices out loud. For example, when I solved the "detect cycle in a linked list" problem, I described why I picked Floyd’s Cycle Detection algorithm and what trade-offs it had.
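A minimal sketch of Floyd's cycle detection for a singly linked list, to make the trade-off concrete. The `ListNode` class and the name `has_cycle` are illustrative.

```python
class ListNode:
    def __init__(self, val, next=None):
        self.val = val
        self.next = next


def has_cycle(head):
    """Return True if the list starting at head loops back on itself."""
    slow = fast = head
    while fast is not None and fast.next is not None:
        slow = slow.next          # advances one step
        fast = fast.next.next     # advances two steps
        if slow is fast:
            # The fast pointer can only catch the slow one inside a cycle.
            return True
    return False  # fast reached the end, so the list terminates
```

The trade-off worth saying out loud: a visited-set approach is simpler to reason about but costs O(n) extra memory, while the two-pointer version stays at O(1) space with the same O(n) time.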

If the interviewer questioned my decision, I never blamed the tools. I made sure to show that I understood the fundamentals. The team wanted people who could explain their code, not just prompt an AI model.

Machine learning fundamentals

The Microsoft AI engineer interview also focused on machine learning basics. I had to show that I understood the difference between machine learning, artificial intelligence, and data science. Here are some topics that came up:

Understanding the differences between Machine Learning, Artificial Intelligence, and Data Science

Concepts of overfitting and how to avoid it

Regularization techniques to prevent overfitting

Familiarity with various machine learning algorithms such as regression, decision trees, and neural networks

One interviewer asked me to explain overfitting and how I would prevent it in a real project. I talked about using cross-validation and regularization, and I gave examples from my past work.
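To show how regularization and cross-validation fit together, here is a toy sketch: a one-feature ridge regression (no intercept) scored with k-fold cross-validation. It is a deliberately tiny illustration in plain Python, not a description of any real project, and the function names are illustrative.

```python
def fit_ridge_1d(xs, ys, lam):
    """Closed-form ridge slope for y ~ w * x; larger lam shrinks w toward 0."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def kfold_mse(xs, ys, lam, k=3):
    """Average squared error on held-out points across k folds."""
    n = len(xs)
    total = 0.0
    for fold in range(k):
        held_out = set(range(fold, n, k))  # every k-th point goes to this fold
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        w = fit_ridge_1d(train_x, train_y, lam)
        total += sum((ys[i] - w * xs[i]) ** 2 for i in held_out)
    return total / n


xs, ys = [1, 2, 3, 4, 5, 6], [2.1, 3.9, 6.2, 7.8, 10.1, 12.2]
# Pick the penalty that generalizes best to the held-out points.
best_lam = min([0.0, 0.1, 1.0, 10.0], key=lambda lam: kfold_mse(xs, ys, lam))
print(best_lam)
```

The point to make in an interview is the division of labor: regularization limits how far the model can bend to fit noise, and cross-validation tells you how much of that limit the data actually needs.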

Azure and cloud applications

Cloud knowledge played a big role in my interviews. I got questions about:

Foundational cloud concepts

Platform services

Networking

Security

DevOps

Scenario-based behavioral questions

I had to describe how I would deploy a machine learning model using Azure Machine Learning. Sometimes, the interviewer gave me a scenario and asked how I would secure the data or automate the deployment. I made sure to mention best practices for networking and access control.

Tip: For behavioral questions, I used the Situation, Action, Result (SAR) format. I explained the technical decisions I made and how I worked with other teams.

System design

System design questions tested how I would build and scale AI solutions. The interviewer wanted to see if I could think about the big picture, not just write code. Here’s a table of the main principles they cared about:

| Principle | Description |
|---|---|
| Scalability | Ensures systems can handle increasing workloads without performance degradation. |
| Availability | Minimizes downtime and enables smooth recovery from failures. |
| Performance Optimization | Techniques to reduce latency and enhance system responsiveness. |
| Data Consistency | Balances strong and eventual consistency based on system needs and trade-offs. |
| Security | Protects user data through encryption, access control, and secure authentication mechanisms. |
| Compliance | Adheres to regulatory standards to ensure data integrity and user privacy. |
| Efficient Storage | Strategies for managing large datasets while maintaining fault tolerance and scalability. |

One question asked me to design a system that could serve AI predictions to millions of users with low latency. I had to talk about caching, load balancing, and how to keep the system secure.
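The caching part of that answer can be sketched as a small time-to-live (TTL) cache in front of the model. This is an in-process toy under stated assumptions: a real deployment would more likely use a shared cache service, and the `TTLCache` class, its `get_or_compute` method, and the injectable `clock` are all hypothetical names for illustration.

```python
import time


class TTLCache:
    """Serve cached predictions until they are older than ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock   # injectable so tests can control time
        self._store = {}      # key -> (value, expiry timestamp)

    def get_or_compute(self, key, compute):
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]  # fresh hit: skip the expensive model call
        value = compute(key)  # miss or stale entry: recompute and store
        self._store[key] = (value, now + self._ttl)
        return value


cache = TTLCache(ttl_seconds=300)
print(cache.get_or_compute("user-42", lambda key: f"recs for {key}"))
# recs for user-42
```

The TTL is the knob that trades freshness for latency and cost: a longer TTL means fewer model calls but staler predictions, which is acceptable only when the product tolerates slightly out-of-date results.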

AI ethics and scenarios

Ethics came up in almost every round. The Microsoft AI engineer interview included scenarios about responsible AI, especially in areas like finance, healthcare, and hiring. I got questions like:

How would you detect and reduce bias in your model?

What steps would you take to ensure demographic fairness?

How would you explain a model’s decision to a non-technical user?

What would you do if your model’s predictions could impact someone’s job or health?

We also discussed regulations like GDPR and the AI Act. The interviewer wanted to see if I understood the importance of transparency, privacy, and accountability.
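For the bias-detection question above, one simple starting point is to compare positive-prediction rates across groups (often called the demographic parity gap). The sketch below is a minimal illustration with made-up data; it is one fairness metric among several, and the function names are illustrative.

```python
from collections import defaultdict


def positive_rates(predictions, groups):
    """Fraction of positive (1) predictions within each group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [positives, total]
    for pred, group in zip(predictions, groups):
        counts[group][0] += int(pred == 1)
        counts[group][1] += 1
    return {g: pos / total for g, (pos, total) in counts.items()}


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive rate between any two groups."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Made-up example: group "a" is approved twice as often as group "b".
preds = [1, 1, 0, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(demographic_parity_gap(preds, groups))  # 0.25
```

A gap near zero does not prove a model is fair, but a large gap is a cheap, explainable signal that the model deserves a closer look before it affects real decisions.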

Behavioral questions

Behavioral questions helped the team understand how I work with others and handle tough situations. Here are some themes I noticed:

Collaboration and communication in fast-paced teams

Handling challenges and setbacks in AI projects

Balancing technical performance with ethical considerations

I always tried to give real examples from my experience. For instance, I described a time when a project failed and how I helped the team recover and learn from it.

In every part of the Microsoft AI engineer interview, I made sure to explain my reasoning. The team wanted to see that I could communicate clearly, make smart decisions, and understand both the technical and ethical sides of AI.

Insights from other candidates

When I prepared for my Microsoft AI engineer interview, I didn’t just rely on my own experience. I spent hours reading forums, talking to recent candidates, and checking out interview reports. I noticed some clear patterns and a few surprises that I want to share with you.

Common technical questions

Many candidates told me that Microsoft loves to test the basics. I saw these trends pop up again and again:

Interviewers want you to talk through your problem-solving process. They care about how you think, not just what you code.

Data structures and algorithms come up in almost every round. You need to know how to use them and explain why you chose them.

Communication matters as much as technical skill. If you can’t explain your solution, you won’t stand out.

System design and design patterns are important, especially in advanced rounds. Interviewers ask about trade-offs and want you to discuss your decisions in detail.

Mastering fundamentals is key. You should feel comfortable with system design and operating system concepts.

Behavioral trends

I noticed that Microsoft interviewers pay close attention to how you interact and communicate. Here’s what other candidates shared:

Interviewers look for clear, confident communication. They want to see if you can work well with others.

You should be ready to explain your choices and reflect on your experiences.

Many candidates said that interviews felt like conversations, not interrogations. The team wants to know how you think and solve problems with others.

Tip: Practice explaining your reasoning out loud. It helps you sound confident and makes your answers more memorable.

Unique or surprising questions

Some candidates faced questions that caught them off guard. Here are a few examples:

Interviewers asked about how models behave in production, not just during training.

They wanted details about tokenization, embeddings, and designing prompts for consistent user experiences.

Handling context updates and memory persistence came up, along with retrieval-augmented generation (RAG) strategies.

I heard about questions where you sketch out full pipelines, from data ingestion to feedback loops.

Interviewers sometimes asked about balancing cost and latency in real-world systems.

Debugging and migration scenarios involving embedding models appeared in some interviews.

Philosophical questions about system robustness and design popped up too.
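Since embeddings and RAG come up in several of these questions, here is a toy sketch of the retrieval step: rank stored document vectors by cosine similarity to a query vector and keep the top k. The vectors are hand-written stand-ins for real embeddings, and `cosine` and `top_k` are illustrative names, not any library's API.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec, doc_vecs, k=2):
    """Return the ids of the k documents most similar to the query."""
    ranked = sorted(
        doc_vecs.items(),
        key=lambda item: cosine(query_vec, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:k]]


docs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
print(top_k([1.0, 0.1], docs, k=2))  # ['a', 'c']
```

In a full pipeline the retrieved documents would be inserted into the prompt, and at scale the linear scan would be replaced by an approximate nearest-neighbor index.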

Here are some advanced questions that recent candidates reported:

What are effective architectural and data-centric strategies for LLMs to reason over large unstructured data?

How would you create layered indexes for better semantic retrieval using Multi-Granular Hierarchical RAG?

Can you describe Recursive Self-Refinement with Scratchpad for internal working memory?

How would you use knowledge graphs to enhance reasoning and prevent hallucinations in AI systems?

I found that Microsoft likes to challenge candidates with questions that go beyond the basics. If you prepare for these, you’ll feel much more confident on interview day.

Lessons and reflections

Key takeaways

Looking back, I learned so much from the Microsoft AI engineer interview. The process taught me more than just technical skills. Here are the biggest lessons I took away:

Think beyond test cases. I focused on writing clean code and handling edge cases, not just passing the obvious tests.

My projects told my story. I prepared to explain my design choices and the reasons behind them.

System design matters at every level. I showed my thought process and how I understood the bigger picture.

I did not rush to the best solution. I walked through my reasoning, starting with simple ideas and moving to better ones.

Staying calm helped me. Long interview days can feel overwhelming, but clarity always mattered more than speed.

I realized that practicing clarity, not just correctness, made a huge difference. Communicating my thoughts while coding helped me stand out.

Advice for future candidates

If you want to succeed in the Microsoft AI engineer interview, here’s what I would tell you:

Find your real navigators. Look for people who are great at documentation and quick learning. These skills help you move through AI projects smoothly.

Win the war, then count the spoils. Prove your AI solutions work before you try to change strategies. Focus on building a safe and supportive team culture.

Practice real-world thinking. Not every question will be a simple coding problem. Be ready to talk through your approach.

Keep calm when things get tough. The way you handle challenges matters more than getting every answer perfect.

How the process changed me

This interview changed how I see myself as an engineer. I learned to value my own story and trust my problem-solving skills. I became more confident in explaining my ideas and choices. I also realized that teamwork and communication matter just as much as technical knowledge. Now, I approach every project with a bigger-picture mindset and a lot more patience.

If you’re preparing for this journey, remember: You can do it. Stay curious, keep practicing, and don’t be afraid to show who you are.

Here’s what helped me most:

Practice clear communication and problem-solving.

Focus on real-world projects and system design.

Stay calm and explain your thinking.

You can pass the Microsoft AI engineer interview if you keep learning and believe in yourself. Got questions or want to share your story? Drop a comment—I’d love to hear from you!

FAQ

How long did the Microsoft AI engineer interview process take?

For me, the whole process took about four weeks. I moved from the application to the final offer in that time. Some friends told me their process took a bit longer.

What was the hardest part of the interview?

I found the system design round the toughest. I had to think fast and explain my ideas clearly. The questions pushed me to show both technical and strategic thinking.

Do I need deep Azure experience to pass?

I didn’t know everything about Azure before my interview. I focused on learning the basics and how to use Azure for AI projects. Showing that I could learn quickly helped me a lot.

How should I prepare for behavioral questions?

I practiced real stories from my past.

I used the Situation, Action, Result (SAR) format.

I stayed honest and showed what I learned from mistakes.
