Breaking Down My 2026 OpenAI Machine Learning Interview

It is widely recognized that ML coding forms a core component of the technical assessment for many programming-related roles. Based on my recent interview journey at OpenAI, I've compiled the actual machine learning questions I encountered. Below, I'll share my notes on each stage, along with the specific questions asked, my solution approaches, and the key takeaways I gathered. Whether you're preparing for your own OpenAI ML interview or just curious about their process, I hope this detailed recap proves helpful.

I’m really grateful to Linkjob.ai for helping me pass my interview, which is why I’m sharing my interview questions and experience here. Having an undetectable AI coding interview copilot during the interview indeed provides a significant edge.

OpenAI ML Interview Questions

The interview process was structured to assess machine learning competency from multiple dimensions, evaluating a breadth of expertise from foundational theory and system design to applied research and execution. Below are the key questions I encountered, along with the expected areas of evaluation.

1. Foundational ML Concepts

These questions test your grasp of core principles and your ability to communicate them clearly.

Q1: Explain what overfitting is.

What they’re assessing: Your ability to define a fundamental concept, differentiate between bias and variance, and discuss common mitigation strategies (e.g., regularization, cross-validation, dropout, early stopping). They expect a concise yet comprehensive answer that connects theory to practice.

Q2: In a binary classification problem, how would you evaluate model performance?

What they’re assessing: Knowledge beyond accuracy. They want you to discuss metrics like precision, recall, F1-score, ROC-AUC, PR-AUC, and confusion matrix interpretation, especially in cases of class imbalance. The choice of metric should be justified based on the business or research objective.
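To make the Q2 point about class imbalance concrete, here is a minimal sketch of how I would compute the basic metrics from a confusion matrix. The `BinaryMetrics` class and `confusion_counts` helper are names I made up for illustration, and the toy data is invented; in practice a library such as scikit-learn would normally do this.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class BinaryMetrics:
    """Confusion-matrix counts for a binary classifier (hypothetical helper)."""
    tp: int
    fp: int
    fn: int
    tn: int

    @property
    def accuracy(self) -> float:
        total = self.tp + self.fp + self.fn + self.tn
        return (self.tp + self.tn) / total if total else 0.0

    @property
    def precision(self) -> float:
        denom = self.tp + self.fp
        # Convention chosen here: report 0.0 when nothing was predicted positive.
        return self.tp / denom if denom else 0.0

    @property
    def recall(self) -> float:
        denom = self.tp + self.fn
        return self.tp / denom if denom else 0.0

    @property
    def f1(self) -> float:
        p, r = self.precision, self.recall
        return 2 * p * r / (p + r) if (p + r) else 0.0


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> BinaryMetrics:
    """Count TP/FP/FN/TN, treating label 1 as the positive class."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == 1 and pred == 1:
            tp += 1
        elif truth == 0 and pred == 1:
            fp += 1
        elif truth == 1 and pred == 0:
            fn += 1
        else:
            tn += 1
    return BinaryMetrics(tp, fp, fn, tn)


# Invented imbalanced data: 95 negatives, 5 positives.
# A degenerate model that always predicts the negative class.
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100
metrics = confusion_counts(y_true, y_pred)
print(f"accuracy={metrics.accuracy:.2f} recall={metrics.recall:.2f} f1={metrics.f1:.2f}")
```

The always-negative model scores high on accuracy while its recall and F1 are zero, which is the kind of gap the interviewers seemed to want candidates to point out.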

2. System Design & Engineering Rigor

This segment focuses on building robust, production-grade training pipelines. The emphasis is not just on “making code run,” but on ensuring reliability under extreme conditions.

Q3: Design a fault-tolerant training pipeline.

This was broken down into sub-questions:

Concurrency Control: In a multi-worker scenario (e.g., distributed training), how do you avoid race conditions during checkpointing or data sharding?

State Management: After an interruption, how do you guarantee accurate recovery? What metadata must be included in the saved state (e.g., optimizer state, random seeds, epoch count, global step)?

Graceful Degradation: How do you handle different failure modes—file corruption, network timeouts, out-of-memory errors—with appropriate fallback strategies?
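The three sub-questions above can be sketched together in a small checkpoint helper. This is only an illustration under simplifying assumptions, not what I wrote in the interview: the state is a plain JSON-serializable dict (real optimizer state would need a tensor-aware format), a single designated worker is assumed to be the only writer, and the function names and file layout are hypothetical.

```python
import hashlib
import json
import os
import tempfile
from pathlib import Path
from typing import Optional


def save_checkpoint(directory: Path, state: dict) -> Path:
    """Write a checkpoint atomically: temp file, fsync, then rename.

    Assumes `state` is JSON-serializable and contains "global_step".
    Assumes only one worker (e.g. rank 0) calls this, which sidesteps
    write races between workers.
    """
    directory.mkdir(parents=True, exist_ok=True)
    payload = json.dumps(state, sort_keys=True)
    record = {
        "sha256": hashlib.sha256(payload.encode()).hexdigest(),
        "payload": payload,
    }
    final_path = directory / f"step_{state['global_step']:08d}.json"
    fd, tmp_name = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(record, f)
        f.flush()
        os.fsync(f.fileno())
    # Atomic on POSIX when source and destination share a filesystem,
    # so readers never observe a half-written checkpoint.
    os.replace(tmp_name, final_path)
    return final_path


def load_latest_checkpoint(directory: Path) -> Optional[dict]:
    """Return the newest checkpoint that passes its checksum.

    Corrupted or unreadable files are skipped, falling back to the
    next-newest checkpoint. Returns None if nothing usable exists.
    """
    for path in sorted(directory.glob("step_*.json"), reverse=True):
        try:
            record = json.loads(path.read_text())
            payload = record["payload"]
            digest = hashlib.sha256(payload.encode()).hexdigest()
            if digest != record["sha256"]:
                continue  # checksum mismatch: treat as corrupted
            return json.loads(payload)
        except (OSError, ValueError, KeyError, TypeError, AttributeError):
            continue  # unreadable or malformed: try an older checkpoint
    return None
```

A state dict here might carry `global_step`, `epoch`, and the random seed. The temp-file-plus-rename step addresses partial writes, the checksum addresses file corruption, and the newest-first scan is the fallback strategy.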

3. Project Deep Dive

You’re asked to present a past project in detail, followed by incisive follow-ups. I discussed a distributed training framework, which led to:

Q4: How did you prove your sharding strategy was optimal?

What they’re assessing: Your approach to performance evaluation (benchmarking, profiling bottlenecks), consideration of trade-offs (computation vs. communication overhead), and whether you validated efficiency gains empirically.

Q5: If gradient explosion occurred, how quickly would your monitoring system alert you?

What they’re assessing: Your understanding of real-time monitoring, observability (metrics, logs, alerts), and system responsiveness. This probes the operational maturity of your project.

Q6: If you could redesign the system now, what architectural improvements would you prioritize?

What they’re assessing: Your ability to reflect critically, identify past limitations, and apply newer best practices (e.g., better orchestration, adaptive fault tolerance, or integration of newer distributed computing paradigms).
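For the gradient explosion question (Q5), one simple way to get an alert within a single training step is to compare each step's global gradient norm against a running baseline. The sketch below is an assumption-laden illustration rather than the system I described in the interview: the class name, the window size, and the 10x spike threshold are all arbitrary choices, and gradients are represented as plain lists of floats to keep it dependency-free.

```python
import math
from collections import deque
from typing import Iterable, Optional


def global_grad_norm(grads: Iterable[Iterable[float]]) -> float:
    """L2 norm over all gradient values, flattened across parameters."""
    return math.sqrt(sum(g * g for tensor in grads for g in tensor))


class GradientSpikeMonitor:
    """Flags non-finite norms and norms far above a running mean (hypothetical)."""

    def __init__(self, window: int = 50, spike_factor: float = 10.0,
                 min_history: int = 5) -> None:
        self.history = deque(maxlen=window)
        self.spike_factor = spike_factor
        self.min_history = min_history

    def check(self, step: int, norm: float) -> Optional[str]:
        """Return an alert message for this step, or None if it looks healthy."""
        if not math.isfinite(norm):
            return f"step {step}: non-finite gradient norm"
        if len(self.history) >= self.min_history:
            baseline = sum(self.history) / len(self.history)
            if norm > self.spike_factor * baseline:
                # Spikes are not added to history, so one bad step
                # does not inflate the baseline for later checks.
                return (f"step {step}: grad norm {norm:.3g} exceeds "
                        f"{self.spike_factor}x running mean {baseline:.3g}")
        self.history.append(norm)
        return None


monitor = GradientSpikeMonitor(window=10, spike_factor=10.0, min_history=3)
for step, norm in enumerate([1.0, 1.1, 0.9, 1.0, 50.0, float("nan"), 1.2]):
    alert = monitor.check(step, norm)
    if alert:
        print("ALERT:", alert)
```

Because the check runs inline with the training loop, detection latency is one step; how fast a human is notified then depends on the alerting channel, which is outside this sketch.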

4. Research Sensitivity & Vision

This portion evaluates your forward-thinking mindset and awareness of open challenges at the frontier of ML.

Q7: If you were to design the next-generation multimodal reasoning system, what challenge would you prioritize solving?

What they’re assessing: Your ability to identify meaningful, unsolved problems (e.g., cross-modal alignment under low data regimes, compositional reasoning, efficient fusion of heterogeneous modalities, or robustness to adversarial inputs). They want to see structured thinking and a well-reasoned prioritization framework.

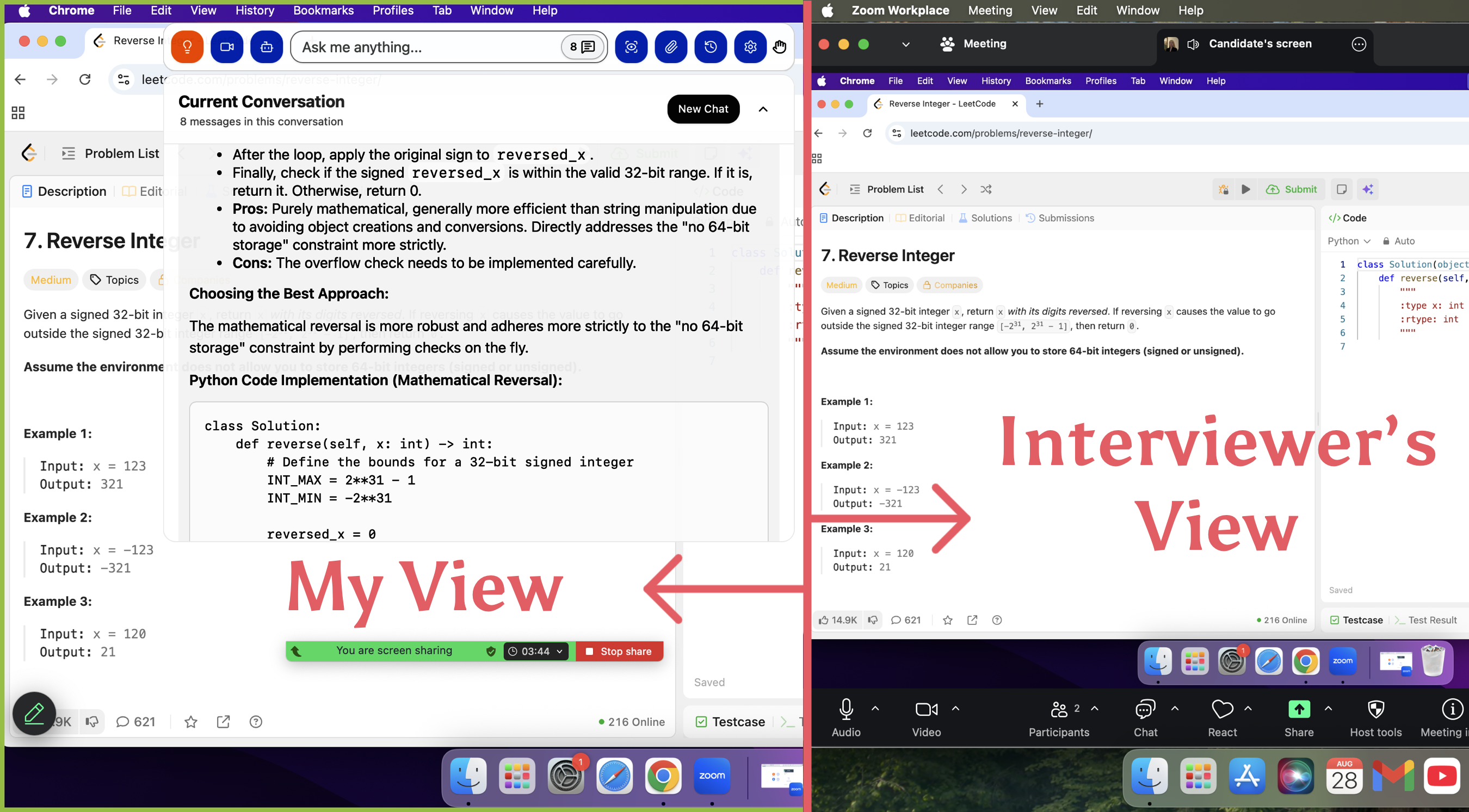

Overall Impression: The interview was highly holistic. It seamlessly wove together theory, hands-on system design, deep project scrutiny, and research foresight. The underlying theme was building reliable, scalable, and intelligent models, not just in ideal scenarios but under real-world constraints and failures.

Just in case, I used linkjob.ai during the interview process. This software is very useful, as it allowed me to use its AI tool during the online interview without being detected by the interviewer. Even when sharing the screen, the interviewer cannot see the AI tool on my screen. Thanks to this tool, I successfully passed the online interview stage of OpenAI.

This is completely invisible from the interviewer's perspective! I tested it with my friend before the interview, and she couldn't see the AI tool on my shared screen at all!

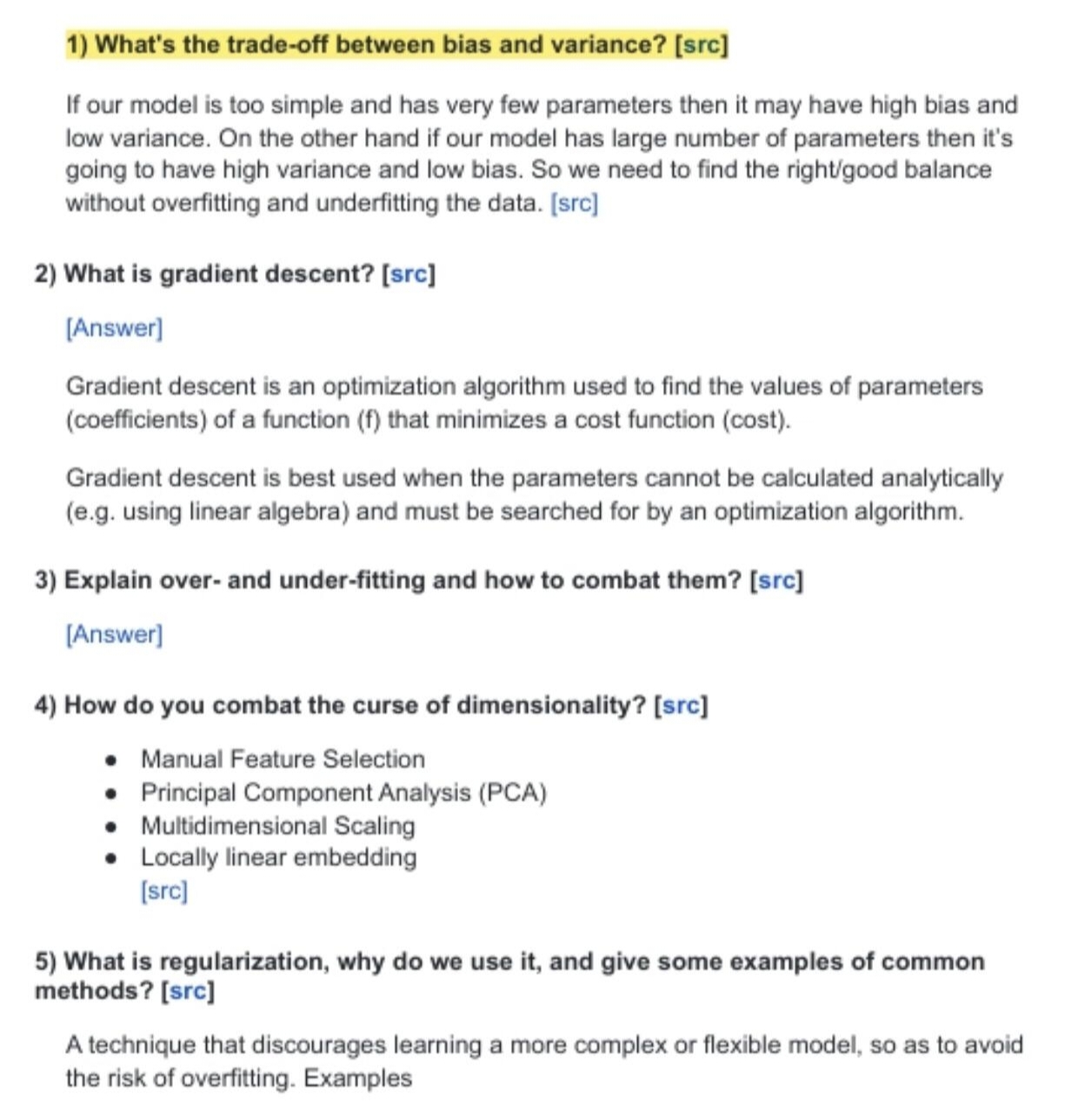

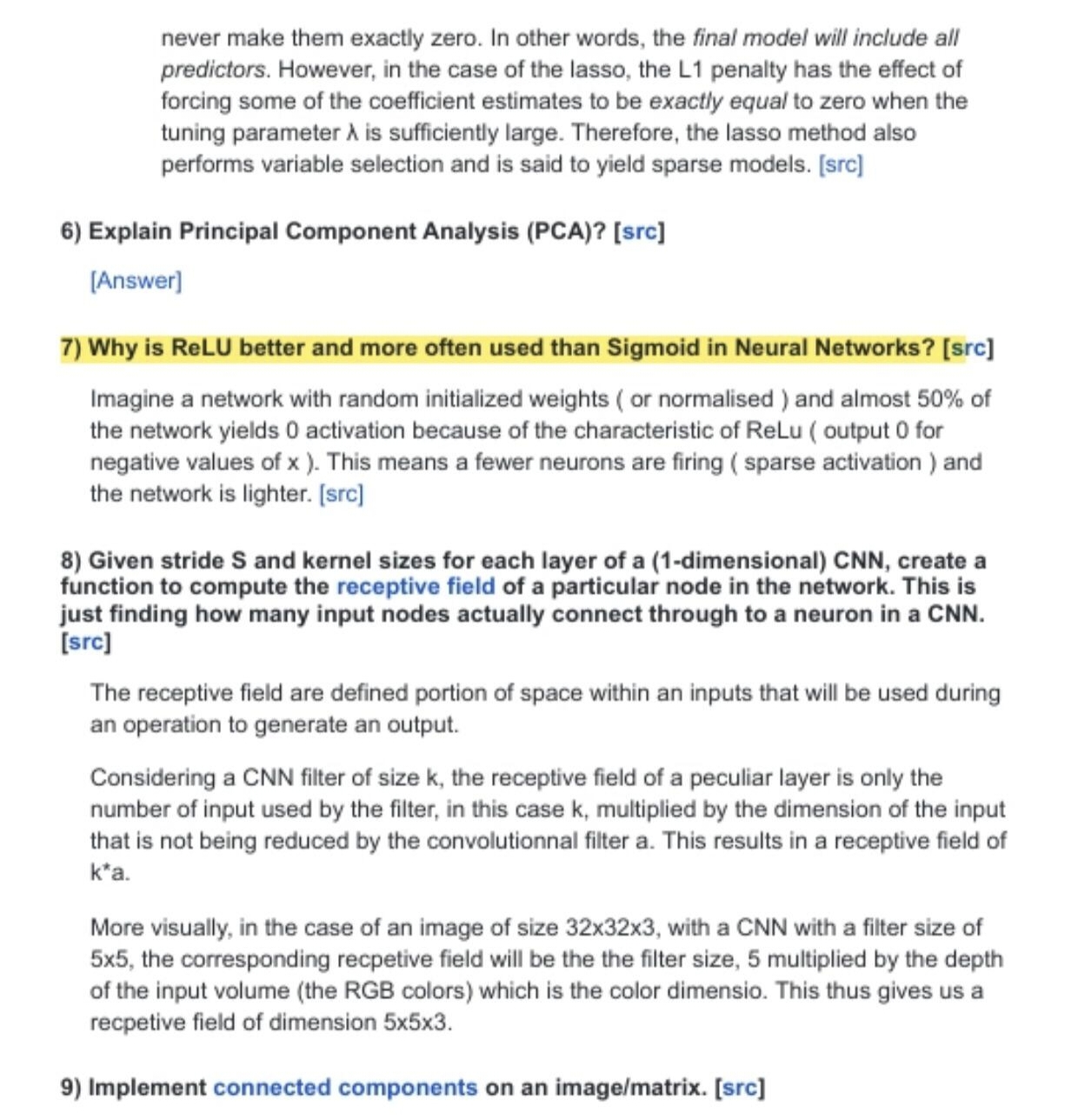

Additional Real Questions Found Online

I didn’t just rely on my own experience. I searched for more interview questions from other candidates. Here are some that stood out:

Some questions felt familiar, but others surprised me. I saw a lot of focus on real-world scenarios and edge cases.

Comparing My Experience

Looking back, my interview matched a lot of these themes. I got questions about model evaluation, overfitting mitigation, and the scalability of training pipelines. The interviewers wanted to know how I’d handle ethical challenges. My process felt fast, but I heard from others that timelines can shift. I think my background in backend and machine learning helped, but I still had to show I could communicate and solve problems with a team.

If you’re preparing, don’t just study algorithms. Practice explaining your ideas and think about how your work affects people. OpenAI wants engineers who care about impact, not just code.

OpenAI ML Interview Process

My Approach and Lessons Learned

I learned a lot from the OpenAI ML interview process. Here’s what worked for me and what I would do differently next time:

Go deep, not wide. I focused on solving each problem thoroughly. I didn’t rush to finish as many as possible. That helped me avoid careless mistakes.

Recognize patterns. I practiced common data structure and algorithm patterns. That made it easier to spot the right approach during the interview.

Communicate clearly. I explained my thought process out loud. That helped interviewers follow my logic and gave me time to think.

Practice fundamentals. I reviewed basic coding problems regularly. Even senior candidates need a strong foundation.

Use real interview data. I checked recent interview reports online. That helped me focus my prep on the most relevant topics.

Make full use of AI tools. During the interview process, I utilized an effective AI interview tool to assist with problem-solving. Linkjob.ai offers real-time Q&A support during interviews and remains invisible to the interviewer even when screen sharing, making it highly practical.

After the interviews, I realized OpenAI cares more about conceptual understanding and engineering skills than memorizing algorithms. They want people who can think about systems, reliability, and ethical trade-offs. I also learned the importance of preparing for questions about OpenAI’s mission and how I fit into it.

Lesson: Don’t just study algorithms. Practice explaining your ideas, thinking about systems, and connecting your experience to OpenAI’s goals.

Insights From Other Candidates

When I started prepping for my OpenAI interview, I wanted to know what others had gone through. I read a bunch of stories online and noticed some patterns. Here’s what kept popping up:

Strong technical skills matter, but interviewers also care about how you think about ethics in AI.

The process can feel flexible or even a bit chaotic. Some people get different rounds or questions depending on the team.

Time-based data structures, versioned data stores, and concurrency come up a lot.

OpenAI looks for practical problem-solving, teamwork, and clear communication.

I realized that OpenAI doesn’t just want coders. They want people who can work together and think about the bigger picture.
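Since time-based data structures came up repeatedly in other candidates' reports, here is a minimal sketch of the kind of structure I practiced: a key-value store where each write carries a timestamp and a read returns the latest value at or before a given time. The `TimeMap` name and its interface are my own illustration, not a question quoted from any interview.

```python
import bisect
from collections import defaultdict
from typing import Optional


class TimeMap:
    """Versioned key-value store with timestamped writes (illustrative sketch)."""

    def __init__(self) -> None:
        # Parallel lists per key, kept sorted by timestamp.
        self._times = defaultdict(list)
        self._values = defaultdict(list)

    def set(self, key: str, value: str, timestamp: int) -> None:
        """Record `value` for `key` at `timestamp`; out-of-order writes are allowed."""
        idx = bisect.bisect_right(self._times[key], timestamp)
        self._times[key].insert(idx, timestamp)
        self._values[key].insert(idx, value)

    def get(self, key: str, timestamp: int) -> Optional[str]:
        """Return the latest value written at or before `timestamp`, else None."""
        times = self._times.get(key)
        if not times:
            return None
        idx = bisect.bisect_right(times, timestamp)
        return self._values[key][idx - 1] if idx else None


store = TimeMap()
store.set("model", "v1", 1)
store.set("model", "v2", 5)
print(store.get("model", 3))  # latest write at or before t=3
```

Reads are a binary search per key. Writes that arrive in timestamp order append at the end, while out-of-order writes pay a list insertion cost. This version is not thread-safe; a lock around `set` and `get` would be one straightforward way to handle the concurrency follow-ups.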

Interview Preparation Tips and Strategies

Recommended Resources and Materials

I found a few resources that made a big difference in my prep:

OpenAI Interview Guide: This guide helped me understand what “systems fluency” means and why clarity matters.

Technical Interview Insights: I read a 62-page guide that covered interview processes at top tech companies.

How to Ace Interviews at OpenAI: I took the recruiter’s advice seriously and scheduled interviews when I felt ready.

Mindset and Time Management

Staying positive during interview prep isn’t easy, but I learned a few tricks. I treated every interview as a learning opportunity. This helped me stay curious and relaxed. I saw each session as practice, not just a test. I created a personal knowledge base to reflect on my experiences and prepare better for the next round.

I broke my study sessions into short, focused blocks.

I set small goals for each day and celebrated progress.

I reminded myself that every interview, even the tough ones, helped me grow.

Pro Tip: Keep your mindset flexible. If you stumble on a question, treat it as a chance to learn, not a failure.

Mistakes To Avoid

I made some mistakes along the way. I also saw others trip up on the same things:

Not adapting to new interview formats that use AI.

Forgetting to explain my thought process clearly during machine learning questions.

Tip: Always show your reasoning, not just your final answer.

Actionable Advice

Here’s what helped me the most:

Study OpenAI’s blog, research, and products to understand their work.

Practice pair-programming and mock interviews to get comfortable thinking out loud.

Write a one-pager about a recent project to prepare for technical discussions.

Practice answering questions about AI safety and ethics.

Prepare STAR stories that show how your work fits OpenAI’s mission.

I kept these steps in mind and felt more confident at every stage. You can do the same!

Using AI For Interview Success

Using AI to prepare for technical interviews is no longer just a nice-to-have; it’s becoming an essential tool for efficient preparation. Its greatest value lies in structuring the open-ended exploration process. When you’re faced with a complex, open-ended question like “design a distributed inference API,” traditional prep often leads to fragmented searching and passive reading. With AI, you can quickly build an interactive, iterative thinking sandbox.

It can dynamically play different roles:

As a Mock Interviewer: It can generate follow-up questions, poke at edge cases, and challenge your assumptions in real-time, simulating the pressure and unpredictability of a real interview.

As a Collaborative Architect: You can iterate on a system diagram by having a dialogue—explaining your design, getting instant feedback on trade-offs, and exploring alternative approaches without having to schedule another person.

As a Knowledge Synthesizer: Need to connect the dots between Kubernetes autoscaling, GPU resource pooling, and inference latency SLOs? AI can instantly provide concise explanations and relevant concepts, saving hours of manual research.

This transforms preparation from a solitary study session into an active, dialog-driven drill. You’re not just consuming static information; you’re stress-testing your reasoning, filling knowledge gaps on-demand, and building the muscle memory to think aloud and adapt under questioning—which is precisely the core skill being assessed. In short, AI doesn’t replace deep understanding, but it dramatically accelerates the path to achieving it, making your preparation more focused, adaptive, and ultimately, more complete.

Personal Reflections and Growth

What I Gained From The Process

This interview became a mirror of my growth. Beyond technical skills, it reshaped how I approach engineering: not just how to build, but why, including how to articulate trade-offs, design for resilience, and communicate reasoning clearly under pressure. I realized that meaningful problem-solving isn’t about quick answers, but about intentional thinking. Each round taught me to slow down, structure my logic, and stay calm even when the path wasn’t obvious. It was a lesson in clarity, composure, and craft. Ultimately, it wasn’t just a test; it was a deepening of my mindset as an engineer and collaborator.

How It Changed My Interview Approach

After that experience, I reshaped how I prepare for interviews. It became less about memorizing solutions and more about strengthening my ability to think, communicate, and reason from first principles.

I started focusing on three key shifts:

Thinking aloud. I now practice explaining my reasoning before writing a single line of code, treating interviews as collaborative design sessions rather than solo tasks.

Deep problem framing. I spend more time understanding constraints, trade-offs, and edge cases before moving to implementation.

Structured problem-solving under pressure. I simulate real interview environments to practice staying calm, breaking problems down, and communicating my process clearly.

Ultimately, the process taught me that great engineering isn’t just about writing correct code—it’s about thinking clearly, communicating intentionally, and staying grounded in fundamentals, especially when the pressure is on.

Encouragement For Future Candidates

If you are thinking about applying to OpenAI, I want you to know that you can do it. The process feels tough, but you learn a lot along the way. Here are some tips that helped me:

Be proactive in your job search.

Stay informed about the roles you want.

Make sure the role matches your skills and interests.

Use referrals if you can.

Keep practicing your fundamentals. Don’t let AI tools replace your own problem-solving skills. Stay curious and keep learning. You might surprise yourself with what you can achieve! 🚀

Looking back, I learned that OpenAI values more than just technical skills. Here are my biggest takeaways:

AI product sense matters as much as coding. I had to show I understood AI’s impact, safety, and unique challenges.

Sharing my passion for AI helped me stand out. I talked about projects that excited me and matched OpenAI’s mission.

Building a real-world portfolio and connecting with others in the AI community made a difference.

If you’re preparing, stay curious and keep practicing. You’ll grow with every attempt!

FAQ

How long did the OpenAI ML interview process take for me?

It took about three weeks from my first recruiter call to the final onsite. I moved quickly because I scheduled each round as soon as possible. Some friends waited longer, so your timeline might vary.

Did I use Linkjob AI during the actual interview?

Yes! Linkjob AI gave me real-time support and personalized answers. It worked smoothly with Zoom. And it is completely invisible from the interviewer's perspective when sharing the screen, so with it, I can silently seek help from AI tools during online interviews. In short, it's a very useful tool.

What programming languages did I use during the interviews?

I used Python for all my coding rounds. The interviewers let me pick my preferred language. I chose Python because I felt most comfortable with it.

Did I get feedback after each round?

I received brief feedback after the technical screen. The recruiter shared general impressions and next steps. After the onsite, I got a more detailed summary about my strengths and areas to improve.

What surprised me most about the OpenAI interview?

I expected tough coding, but the real-world focus and ethical questions stood out.
