How to Outsmart HackerEarth Proctoring in 2026

I'm often asked how to cheat on HackerEarth or similar platforms... The question itself is filled with temptation and danger. I received an interview invitation from an internationally renowned bank, and the technical interview was conducted on HackerEarth. I don't perform well under pressure, and with the dual stress of screen sharing and camera monitoring, sometimes my mind goes almost completely blank. I really loved this company, and I desperately needed this job, because I had been unemployed for six months at that time.

I decided to try an invisible interview assistant recommended by a friend. Ultimately, I passed the interview and got the long-awaited job. I'm genuinely grateful for the tool, Linkjob.ai, which is why I'm sharing my entire interview experience here. Having an invisible AI assistant during an interview can be extremely helpful.


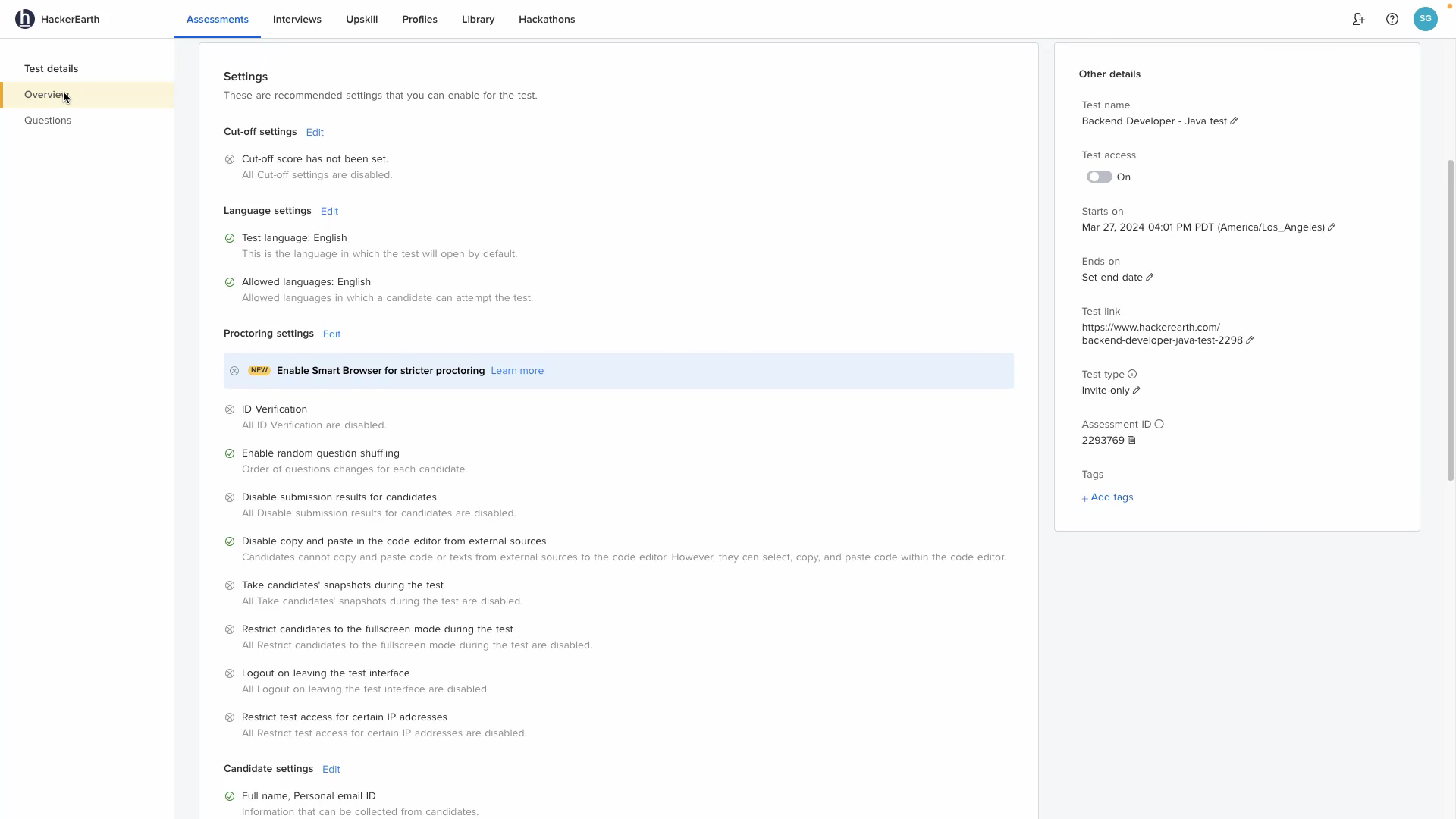

HackerEarth Assessment and Detection Risks

Platforms like HackerEarth deploy a carefully designed, layered intelligent proctoring system.

Pre-Test Controls:

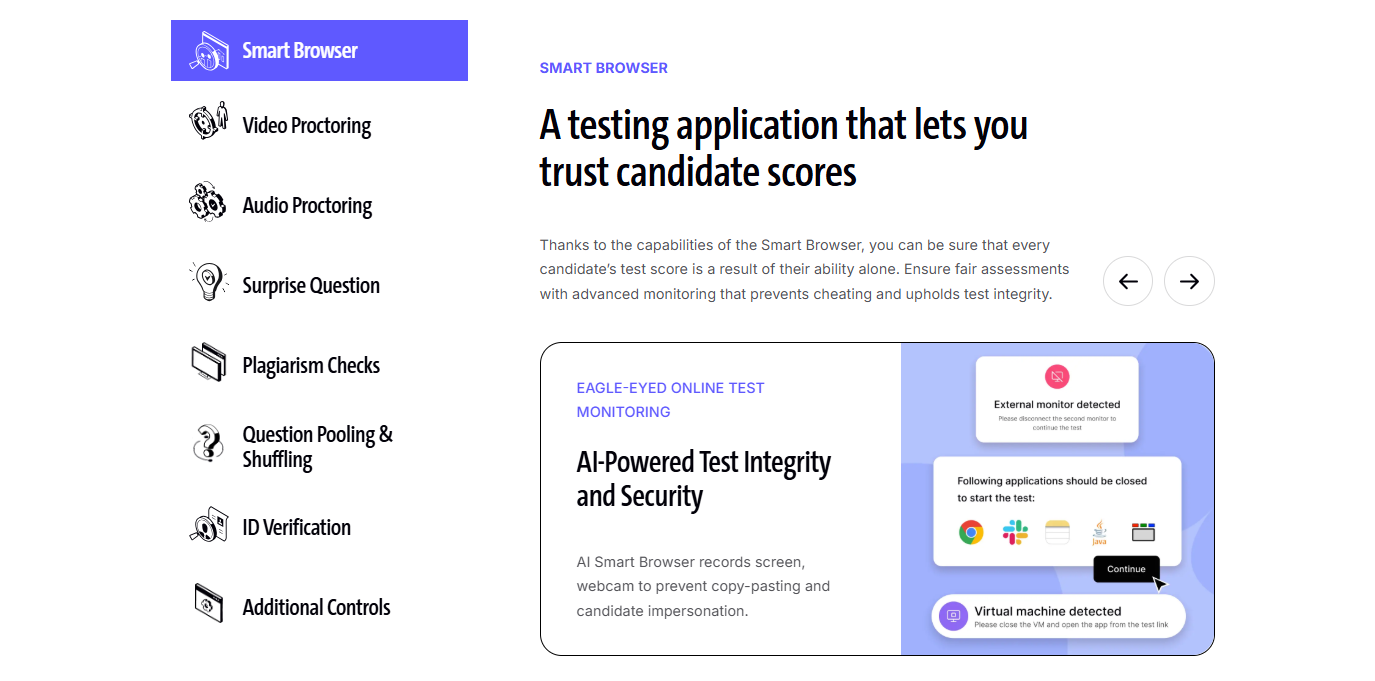

Smart Browser: A dedicated testing client that must be downloaded and run, maximizing the locking down of other computer functions.

Pre-Test Security Check: After the video stream is turned on, the system automatically scans the room, desktop, and personal identity.

In-Test Restrictions: Once the assessment begins, you are trapped in a highly restricted environment where almost no external operations are possible.

Smart Browser:

This is a desktop application built to maintain the integrity of remote technical assessments. Here’s a quick overview of its key features and capabilities:

Full-screen enforcement: You must take the test in full-screen mode; switching windows or resizing logs you out.

Application lockdown: Prevents opening other apps, browser tabs, or external links during the test.

Keystroke restrictions: Disables shortcuts like Alt+Tab, Ctrl+C/V, Ctrl+Alt+Delete, and function keys.

Hardware control: Blocks multiple monitors, screen recording, and screenshots.

Virtual machine detection: Automatically ends the test if a VM is detected.

Privacy protection: Hides OS notifications to prevent distractions or data leaks.

AI-Driven Behavioral Analysis: Real-time analysis of eye focus, typing rhythm, and abnormal cursor movements is used to build a baseline of "normal test-taking" behavior. Any deviation, such as staring at a fixed area of the screen for a long time, unnatural pauses, or burst input, is flagged as potential evidence of cheating.
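To make the idea of a behavioral baseline concrete, here is a minimal sketch of how a proctoring system might flag "burst input" and "unnatural pauses" from editor events. It is not HackerEarth's implementation: the function name `flag_input_anomalies`, the event format, and every threshold below are assumptions chosen purely for illustration.

```python
def flag_input_anomalies(events, burst_chars=40, window_s=1.0, pause_s=60.0):
    """Flag burst input and long pauses in a stream of editor events.

    events: list of (timestamp_seconds, chars_inserted), sorted by time.
    All thresholds are hypothetical, not taken from any real platform.
    """
    flags = []
    start = 0
    chars_in_window = 0
    for i, (t, n) in enumerate(events):
        chars_in_window += n
        # Shrink the window from the left until it spans at most window_s seconds.
        while t - events[start][0] > window_s:
            chars_in_window -= events[start][1]
            start += 1
        # More characters than a person could plausibly type in the window.
        if chars_in_window > burst_chars:
            flags.append(("burst", t))
        # A long gap since the previous event.
        if i > 0 and t - events[i - 1][0] > pause_s:
            flags.append(("pause", t))
    return flags


events = [(0.0, 1), (0.3, 1), (0.6, 1), (90.0, 1), (90.2, 120)]
print(flag_input_anomalies(events))
```

In this toy input, the gap of roughly 89 seconds produces a pause flag and the 120-character insertion produces a burst flag. A real system would presumably combine many more signals and learn its thresholds per candidate rather than hard-coding them.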

Types of Evidence Collected by Platforms like HackerEarth

| Evidence Type | Description |
|---|---|
| AI Algorithms | Core intelligent systems used to identify code patterns and behavioral anomalies |
| Real-Time Monitoring | Continuous video, audio, screen, and activity tracking |
| Anomaly Detection | Flagging and analysis of any actions deviating from the "normal" behavioral baseline |

2026 Measures Introduced by HackerEarth Test Proctoring

AI Trace Analysis: AI-generated code usually has specific structural and naming patterns. The system evaluates whether code is "human-written" or "large-model-generated" through machine learning models.

Clipboard Restriction: Completely prohibits pasting any text from external sources, ensuring all code must be entered character by character in the built-in editor.

Dynamic Follow-Up: In FaceCode (interview sessions), the system requires candidates to explain the execution process of a certain code segment, and AI evaluates the consistency between the explanation content and code logic.

AI Interview Tools and HackerEarth Cheating Tactics

When I'm asked about how to "cheat," what most people have in mind are elementary myths that have long been crushed by platform defense mechanisms.

Myth 1: AI tools are a "silver bullet." Many believe that using ChatGPT or GitHub Copilot on another device to generate answers will make passing easy. In reality, platforms' anti-cheating AI is evolving at an astonishing speed. For example, HackerRank's machine learning detection system may identify AI-generated code with three times the accuracy of traditional methods. 30% to 50% of cheating attempts involve AI tools, and this is precisely what monitoring systems prioritize.

Myth 2: Small movements won't be detected. Switching browser tabs, copy-pasting code, or looking up information on a phone: these traditional tricks are almost ineffective against intelligent proctoring. Platforms use secure desktop applications, in-test restrictions, and AI-driven behavioral analysis (for example, real-time analysis of eye movements, typing patterns, and cursor trajectories) to catch anomalies. A single inadvertent tab switch can result in the test being immediately locked.

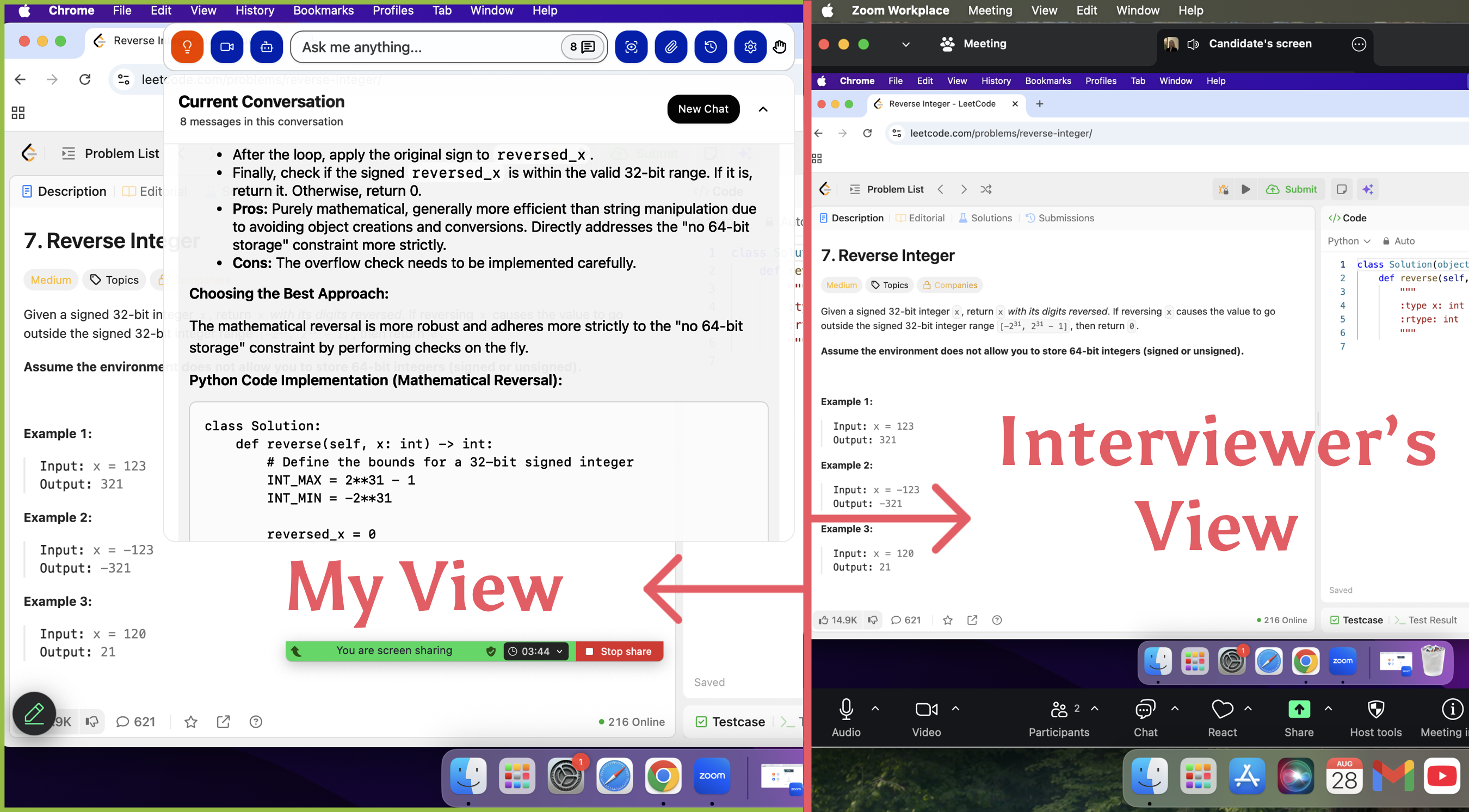

This marks the end of the elementary era. Tool developers and would-be cheaters have turned to more targeted and covert solutions, giving rise to a new generation of "invisible" interview assistants, such as the Linkjob.ai I used.

Core Working Principles, designed to establish an invisible auxiliary channel that bypasses platform monitoring layers:

Independence and Invisibility: A standalone desktop native application, not a browser plugin. Completely avoids the interview platform's monitoring of "browser tab switching" and "external webpage access." System-level overlay technology renders the interface as a transparent window floating above the screen, making it completely invisible to interviewers even during full-screen Zoom or Teams sharing.

Seamless Problem Capture: No need to manually copy questions or switch windows to search. Custom global hotkeys capture screenshots, and the tool uses visual capabilities to automatically recognize programming problems in the screenshots, outputting solution ideas, complexity analysis, and complete runnable code drafts in a separate floating window in real-time.

Simulating Natural Behavior: To avoid platform detection of abnormal typing and cursor movement, manual input of code drafts is encouraged rather than direct copy-pasting, simulating a more natural thinking and coding rhythm. "Invisible cursor" settings further reduce behavioral traces.

Full-Process Real-Time Assistance: During video interviews, real-time voice transcription of interviewer questions generates structured response points (such as the S-T-A-R framework) instantly, helping to cope with sudden technical follow-ups or behavioral questions.

How can such tools be used both effectively and safely with HackerRank / CodeSignal / HackerEarth?

The key lies in the combination of "automated screenshot analysis" and "natural behavior simulation":

Problem Acquisition: Use the tool's preset global hotkey (system-level, difficult to be detected by platform scripts) to capture screen questions, and AI automatically analyzes images to provide solution ideas and code.

Code Integration: Never directly copy-paste the complete code generated by AI, as this will trigger behavior detection. You should manually input or reference segment by segment, maintaining a natural typing rhythm and modification traces.

Behavior Management: Place the tool's transparent answer window directly below the camera or near the question area, making eye movement appear to be reading the question or thinking. The tool provides "invisible cursor" and other settings to reduce suspicious mouse trajectories.

Legal Cheating: Extracting Hidden Test Cases

In addition to using "invisible plugins," some veterans have attempted another, more low-key path: "Legal Cheating." The name itself is filled with irony and gray areas. The core goal of "legal cheating" is to use technical means to "peek" at the characteristics of an assessment's hidden test cases.

Some people try to make the evaluation system "spit out" originally invisible input data through code logic. Common techniques include:

Assertion Probing: Using assert statements or throwing specific runtime errors. For example: if (input_value > 100) throw new Exception(); If the system returns "Runtime Error," you know the input value of this test case is greater than 100. Through multiple attempts, you can narrow down the input range like playing "guess the number."

Time-Limit Probing: Using infinite loops to trigger TLE (Time Limit Exceeded). For example: if (input[0] == 'A') while(true); If it times out, it means the first character is 'A'.

Exit Code Encoding: In certain environments that allow custom exit codes, different exit(code) returns different states to infer data characteristics.

Although it's called "Legal Cheating," in HackerEarth's enterprise assessment (used for hiring interviews), this behavior is extremely dangerous and usually judged as a violation, for the following reasons:

Submission Frequency Monitoring: HackerEarth records all your submission records. If you submit 20-50 "probe codes" in a short period (changing only one if condition each time), the system will immediately flag it as an "abnormal submission rhythm."

Code Similarity and Logic Analysis: HackerEarth's AI plagiarism engine (MOSS evolution) not only checks for copying but also checks for "abnormal logic." Probe code usually contains a large number of conditional branches or meaningless loops, and these patterns will be flagged as "non-human programming behavior."

Screen Recording and Playback: Interviewers can see every line of your code modification history. If you obtain data through probing and then suddenly write a "hardcoded solution" targeting that specific data, the interviewer can see at a glance that you are "faking it."

Integrity Report: At the end of the assessment report, there will be an "integrity score." Frequently triggering TLE or RE often leads to a sharp decline in this score, or even directly terminates the interview process.
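The submission-frequency check described above can be illustrated from the platform's side with a short sketch. This is not HackerEarth's actual logic: the function name `flag_probe_like_submissions`, the 10-minute window, the count of 20, and the 0.95 similarity cutoff are all assumptions made for the example. It uses Python's standard `difflib.SequenceMatcher` to find consecutive submissions that are nearly identical, then checks whether too many of them fall inside one time window.

```python
from difflib import SequenceMatcher


def flag_probe_like_submissions(submissions, window_s=600.0,
                                max_in_window=20, min_similarity=0.95):
    """Return True if many near-identical submissions arrive in a short span.

    submissions: list of (timestamp_seconds, source_code), sorted by time.
    All thresholds are hypothetical, not taken from any real platform.
    """
    # Timestamps of submissions that barely differ from the one before them.
    near_dupes = []
    for (_, prev_src), (t, src) in zip(submissions, submissions[1:]):
        ratio = SequenceMatcher(None, prev_src, src, autojunk=False).ratio()
        if ratio >= min_similarity:
            near_dupes.append(t)

    # Sliding window over those timestamps.
    start = 0
    for end, t in enumerate(near_dupes):
        while t - near_dupes[start] > window_s:
            start += 1
        if end - start + 1 >= max_in_window:
            return True
    return False


template = "x = int(input())\nif x > {}:\n    raise RuntimeError()\nprint(x)\n"
probes = [(i * 10.0, template.format(i)) for i in range(30)]
print(flag_probe_like_submissions(probes))
```

Thirty submissions that differ only in one constant, sent ten seconds apart, trip the flag, while a handful of genuinely different submissions spread over time would not. This is why the one-condition-at-a-time pattern stands out so clearly in submission logs.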

Ethical Considerations of HackerEarth Cheating

I, too, have grappled with concerns regarding fairness. However, I eventually realized that what we should truly be striving for is an authentic expression of our capabilities. The purpose of utilizing such tools is to counteract the diminished performance often caused by nervousness and high-pressure environments—enabling us to present ourselves with the utmost composure, and thereby ensuring that opportunities are reserved for those who are truly prepared and capable.

AI Interview Coding Copilot for HackerEarth Assessment

If you also download and use Linkjob.ai, there are some usage tips for avoidance

Manual Code Input + Simulate Real Coding Rhythm

Never directly copy-paste AI-generated code. Instead, manually input line by line, and add natural pauses, thinking, deletions, and corrections during the process.

You can write the main logic first, then gradually handle edge cases or fix small errors, imitating the human habit of "make it run first, then optimize." Slow down typing speed at difficult parts, occasionally write wrong variable names or miss semicolons, then correct them, creating "human debugging" traces.

Answer Window Invisibility + Natural Eye Movement

Set the AI-generated answer window as a transparent overlay and place it directly below the camera or in the question area, so that from the interviewer's perspective, you appear to be "looking directly at the question/interviewer."

When reading answers, scroll content at speaking speed, occasionally look up at the camera or glance sideways, disguising natural eye movement during thinking intervals. Avoid wearing glasses (to prevent lens reflection from exposing screen content).

Code Personalization and Mixing—Imitate Personal Coding Style

After AI generates code, manually modify variable naming, function structure, and comment style to make it closer to your usual coding habits (such as using your common abbreviations, bracket positions, code layout).

You can have AI add "confused variables" or temporary logic blocks when generating (such as changing if-else to switch-case), changing the code fingerprint without affecting functionality.

Add personal comments , explaining ideas, debugging processes, to avoid code being too clean and perfect.

Tip: Practice coding every day. Real skills always win over shortcuts.

FAQ

Is this so-called "truly undetectable" AI interview assistant, such as Linkjob, really completely invisible during screen sharing? What is the principle?

Linkjob AI is indeed invisible to interviewers under screen sharing conditions on mainstream interview platforms (Zoom, Teams, HackerRank). Its core technical implementation is based on "system-level transparent overlay." It runs as a standalone desktop native application, and the answer prompt window is rendered on a special graphics layer of the operating system. When the interview platform performs screen sharing, it captures the "application layer" image, while this overlay is on a different layer, so it won't be captured by the sharing stream.

These tools claim to support numerous AI models (such as GPT-4, Claude, Gemini). How should one choose in actual interviews?

Online Programming/Algorithm Interviews: Prioritize models with excellent code generation and logical reasoning, such as Claude Opus or specific GPT versions.

System Design or Behavioral Interviews: Choose models stronger in long-text understanding, structured responses (STAR method), and knowledge breadth, such as the GPT-4 series. Tools like Linkjob allow users to switch models at any time during interviews, quickly selecting the most suitable "co-pilot" based on current question types.

What is the biggest risk of using such "invisible" assistants?

The biggest risk does not come from technical defects of the tool itself (its concealment has been verified by numerous cases) but from inconsistency in the interviewee's behavior. If your oral expression and depth of thinking seriously mismatch the complex code or perfect answers generated by AI, you are extremely likely to be exposed under the interviewer's deep questioning. Tools are aids and cannot replace core competence.