My Anthropic London Interview Process Guide in 2026

I am a PhD candidate in Computer Science (AI/ML specialization) at University College London. While job hunting last year, I noticed that Anthropic announced plans to expand significantly in Europe, with a primary focus on London, so I decided to seize the opportunity. Anthropic planned to add 100 key roles to their UK office this year, spanning areas like sales, engineering, research, and business operations. I applied for the Research Engineer position in London and successfully made it to the final round. Here's my interview experience.

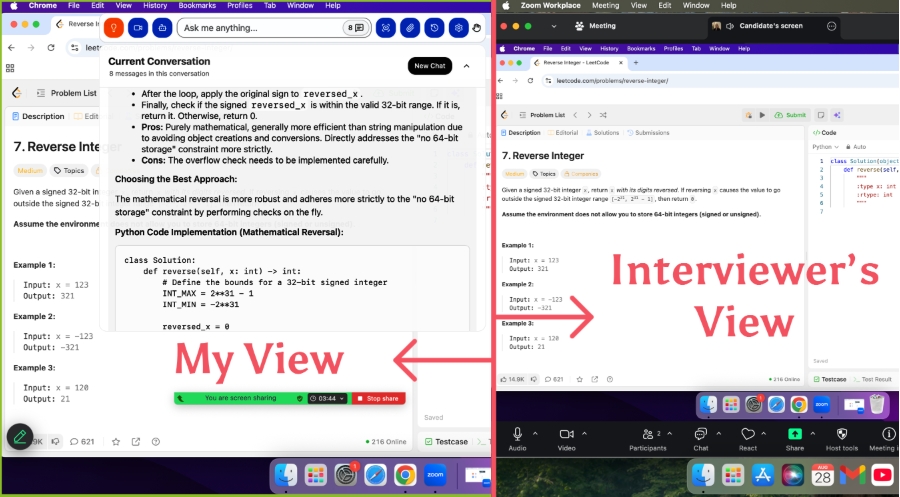


Anthropic London Office Transportation

Anthropic's London office is located on the 9th Floor, 107 Cheapside. From UCL, you can take the Circle Line from Euston Square station to Moorgate, then walk about 0.5 miles to the office. It’s in the City of London, with plenty of convenient dining options nearby.

Main Steps & Timeline

Since Anthropic primarily recruits for R&D roles at the master's and PhD levels, the hiring timeline is quite flexible, and applications are theoretically open year-round. However, the main hiring season tends to be during March and April. I submitted my application in December of the previous year and went through the online application, screening, and on-site interviews throughout January and February.

The entire interview process spans about 6-8 weeks, and it is both intense and fairly challenging.

Timeline:

2025/12/18: Submitted application

2026/1/22: Initial Screening (~40 minutes). Focused on previous projects and research experience to verify whether my background aligned with the role.

2026/2/4: Online Assessment (OA, 90 minutes). Included one coding question and two theoretical questions—quite challenging overall.

2026/2/10: Virtual Onsite (VO, 4 rounds, 1 hour each). Included:

2 rounds of coding interviews

System design

Culture fit + leadership interview

The interviews mainly revolved around topics like safety-first development, AI alignment, and long-term beneficial AI.

2026/2/19: HR Feedback + Next Steps. The overall feedback was positive, but they noted that I needed to strengthen my leadership skills. They scheduled an additional conversation with a senior manager (which seems to be a characteristic step of the London office).

Application Review

During my PhD, I focused on AI Alignment and Interpretability, including research on Constitutional AI methods, RLHF (Reinforcement Learning from Human Feedback), and interpretability techniques for models. During my master’s, I completed two internships: one at Hugging Face, working on LLM alignment techniques, and another at DeepMind, evaluating LLM safety. Overall, my background aligns well with Anthropic’s requirements.

By 2026, the hiring landscape for AI Research Engineers has undergone a massive transformation. The role has become a cornerstone of the AI ecosystem, and landing a Research Engineer offer at a top AI company like Anthropic is extraordinarily competitive. Today’s hiring process revolves around mastering “full-stack AI research and engineering.” Modern AI Research Engineers are expected to combine the theoretical intuition of a physicist, the system engineering skills of a site reliability engineer, and the ethical foresight of a safety researcher.

From my own experience and discussions with other candidates, the Research Engineer role at Anthropic emphasizes the following traits, which are worth showcasing in your resume:

The ability to implement a wide variety of neural network architectures from memory and debug models by reasoning through loss functions.

A strong focus on “safety”—what sets Anthropic apart from other major AI companies is that beyond technical skills, they place significant value on how candidates think about the intersection of technology and societal issues. Anthropic’s mission is to build safe AI that benefits humanity. No matter how strong your research or skills are, you need to demonstrate through your mindset and reasoning that you align with their values, or you won’t make it through.

The capability to read cutting-edge papers, implement innovative ideas, critically evaluate AI safety concerns, and translate research concepts into scalable, production-ready systems.

Initial Screening (~30 min)

The screening primarily covers three areas: technical/research background, engineering and collaboration skills, and alignment with core values. Example questions include:

What do you know about AI safety and alignment? Can you describe an AI safety issue you consider important and possible directions to address it?

What is Constitutional AI, and what problems is it trying to solve?

Explain the basic principles of RLHF. What challenges might arise in practical applications?

Describe one of your representative research projects. What problem was the project addressing? What was your specific contribution? How did you collaborate with team members?

Suppose you need to evaluate the "honesty" of a language model. How would you design an experiment for this? What factors would you consider?

What programming languages and deep learning frameworks do you primarily work with?

How do you view the potential risks of AI technologies? As an AI researcher, what responsibilities do you think we have?

The AI field evolves rapidly—how do you stay on the cutting edge? What new technologies or concepts have you learned recently?

What do you hope to gain in Anthropic’s Research Engineer role? Where do you see yourself developing in 3-5 years?

Suppose you join our team and notice that a model behaves abnormally on certain inputs, potentially posing a safety risk. How would you address this?

Technical Online Assessment (90 min)

Anthropic's OA (Online Assessment) is often referred to by candidates as "one of the hardest interviews in the tech industry." The 90-minute CodeSignal test requires a 100% correct solution to progress. In my experience, the questions have two key characteristics:

Coding Questions: These focus on assessing a candidate's core technical understanding. Beyond testing deep knowledge of Constitutional AI (one of Anthropic's core technologies), they also evaluate scalable design, system modularization, and engineering practices. This includes assessing object-oriented programming, algorithm optimization (e.g., heap usage), code organization, and product-driven thinking (e.g., evaluating practical AI safety applications through a preference-ranking system). The questions subtly test for awareness of AI safety, trade-off reasoning (balancing harmlessness, helpfulness, and honesty), and scalability-focused mindset.

Theory Questions: These emphasize a candidate's mathematical foundation, such as understanding KL divergence, optimization theory, and their relevance to solving cutting-edge challenges. They also test the ability to connect theory with practice. For instance, reward hacking is a classic AI safety problem, and understanding it is key to bridging theoretical knowledge with real-world applications.

Coding Problems

The coding question I received was related to a Constitutional AI Preference Ranking System. I had to implement a system that ranks model outputs based on Constitutional AI principles. Given a set of responses from AI assistants and a set of constitutional principles, I was tasked with designing a sorting algorithm to rank the responses accordingly.

The task was to implement a ConstitutionalRanker class that supports multiple constitutional principles (harmlessness, helpfulness, honesty). Each principle has a different weight, and the class must provide functionality for batch sorting while also supporting the dynamic addition of new evaluation criteria.
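To make the weighting concrete, here is a minimal sketch of the core calculation: a weighted sum of per-principle scores, then a sort. It uses the sample scores and weights from this task; the helper name `weighted_score` is my own and not part of the assessment.

```python
from typing import Dict, List

# Weights and per-response scores taken from the sample input for this task.
principles: Dict[str, float] = {"harmlessness": 0.4, "helpfulness": 0.35, "honesty": 0.25}
responses: List[dict] = [
    {"id": 1, "scores": {"harmlessness": 0.9, "helpfulness": 0.8, "honesty": 0.85}},
    {"id": 2, "scores": {"harmlessness": 0.95, "helpfulness": 0.6, "honesty": 0.9}},
    {"id": 3, "scores": {"harmlessness": 0.8, "helpfulness": 0.95, "honesty": 0.9}},
]


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of principle scores (hypothetical helper for illustration)."""
    return sum(weights[name] * scores[name] for name in weights)


# Sort highest score first and keep only the ids.
ranked = sorted(responses, key=lambda r: weighted_score(r["scores"], principles), reverse=True)
ranking = [r["id"] for r in ranked]
print(ranking)  # [3, 1, 2]
```

Response 3 scores 0.8775, response 1 scores 0.8525, and response 2 scores 0.815, so the most helpful answer wins here even though it has the lowest harmlessness score. That is exactly the kind of trade-off worth talking through out loud.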

Input format:

    responses = [
        {"id": 1, "text": "I can help you with that task...", "scores": {"harmlessness": 0.9, "helpfulness": 0.8, "honesty": 0.85}},
        {"id": 2, "text": "I'm not sure about that...", "scores": {"harmlessness": 0.95, "helpfulness": 0.6, "honesty": 0.9}},
        {"id": 3, "text": "Here's a detailed explanation...", "scores": {"harmlessness": 0.8, "helpfulness": 0.95, "honesty": 0.9}}
    ]

    principles = {
        "harmlessness": 0.4,
        "helpfulness": 0.35,
        "honesty": 0.25
    }

Coding:

    import heapq
    from typing import Callable, Dict, List


    class ConstitutionalRanker:
        def __init__(self, principles: Dict[str, float]):
            """
            Initialize the Constitutional Ranker

            Args:
                principles: Dict mapping principle names to weights
            """
            self.principles = dict(principles)  # copy so the caller's dict is untouched
            self.custom_evaluators: Dict[str, Callable[[str], float]] = {}
            self.normalize_weights()

        def normalize_weights(self):
            """Normalize weights to sum to 1.0"""
            total_weight = sum(self.principles.values())
            if total_weight > 0:
                self.principles = {k: v / total_weight for k, v in self.principles.items()}

        def add_custom_evaluator(self, name: str, weight: float,
                                 evaluator: Callable[[str], float]):
            """
            Add a custom evaluation function

            Args:
                name: Name of the evaluation criterion
                weight: Weight for this criterion (relative to the normalized weights)
                evaluator: Function that takes response text and returns score [0,1]
            """
            self.principles[name] = weight
            self.custom_evaluators[name] = evaluator
            self.normalize_weights()

        def calculate_constitutional_score(self, response: Dict) -> float:
            """
            Calculate overall constitutional score for a response

            Args:
                response: Dict containing response data and scores
            Returns:
                Weighted constitutional score
            """
            total_score = 0.0
            for principle, weight in self.principles.items():
                if principle in response.get('scores', {}):
                    # Precomputed score supplied with the response
                    total_score += weight * response['scores'][principle]
                elif principle in self.custom_evaluators:
                    # No precomputed score: apply the custom evaluator to the text
                    custom_score = self.custom_evaluators[principle](response['text'])
                    total_score += weight * custom_score
            return total_score

        def rank_responses(self, responses: List[Dict],
                           return_scores: bool = False) -> List[Dict]:
            """
            Rank responses according to constitutional principles

            Args:
                responses: List of response dictionaries
                return_scores: Whether to include calculated scores in output
            Returns:
                List of responses sorted by constitutional score (highest first)
            """
            scored_responses = []
            for response in responses:
                score = self.calculate_constitutional_score(response)
                response_copy = response.copy()
                if return_scores:
                    response_copy['constitutional_score'] = score
                scored_responses.append((score, response_copy))
            # Sort by score (descending); the key avoids comparing dicts on ties
            scored_responses.sort(key=lambda x: x[0], reverse=True)
            return [response for _, response in scored_responses]

        def get_top_k(self, responses: List[Dict], k: int) -> List[Dict]:
            """
            Get top k responses efficiently using heap

            Args:
                responses: List of response dictionaries
                k: Number of top responses to return
            Returns:
                Top k responses by constitutional score
            """
            if k <= 0:
                return []
            if k >= len(responses):
                return self.rank_responses(responses)
            # Min-heap of size k; the index breaks score ties so that
            # heapq never has to compare two dicts (which raises TypeError)
            heap = []
            for index, response in enumerate(responses):
                score = self.calculate_constitutional_score(response)
                entry = (score, index, response)
                if len(heap) < k:
                    heapq.heappush(heap, entry)
                elif score > heap[0][0]:
                    heapq.heapreplace(heap, entry)
            # Reuse the stored scores instead of recomputing them
            heap.sort(key=lambda entry: entry[0], reverse=True)
            return [response for _, _, response in heap]

        def compare_responses(self, response1: Dict, response2: Dict) -> str:
            """
            Compare two responses and return preference reasoning

            Args:
                response1, response2: Response dictionaries to compare
            Returns:
                String explaining which response is preferred and why
            """
            score1 = self.calculate_constitutional_score(response1)
            score2 = self.calculate_constitutional_score(response2)
            if score1 > score2:
                preferred, other = response1, response2
                pref_score, other_score = score1, score2
            else:
                preferred, other = response2, response1
                pref_score, other_score = score2, score1
            # Analyze which principles drive the preference
            breakdown = []
            for principle, weight in self.principles.items():
                if principle in preferred.get('scores', {}) and principle in other.get('scores', {}):
                    pref_val = preferred['scores'][principle]
                    other_val = other['scores'][principle]
                    diff = (pref_val - other_val) * weight
                    breakdown.append(f"{principle}: {diff:+.3f}")
            return (f"Response {preferred['id']} preferred (score: {pref_score:.3f} vs {other_score:.3f})\n"
                    f"Breakdown: {', '.join(breakdown)}")

The theory question focused on RLHF optimization (including KL divergence and reward hacking).
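For reference, this is the standard KL-regularized RLHF objective as it is usually written in the literature. It is the textbook formulation, not necessarily the exact form used in the assessment. Here $\pi_\theta$ is the policy being trained, $\pi_{\mathrm{ref}}$ is the frozen reference (usually the supervised fine-tuned model), $r_\phi$ is the learned reward model, and $\beta$ is the KL penalty coefficient:

```latex
\max_{\pi_\theta} \;
\mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\theta(\cdot \mid x)}
\big[ r_\phi(x, y) \big]
\;-\;
\beta \, \mathbb{E}_{x \sim \mathcal{D}}
\Big[ D_{\mathrm{KL}}\big( \pi_\theta(\cdot \mid x) \,\|\, \pi_{\mathrm{ref}}(\cdot \mid x) \big) \Big]
```

The link to reward hacking is that $r_\phi$ is only an approximation of human preferences. With a small $\beta$, the policy can drift far from $\pi_{\mathrm{ref}}$ into regions where the reward model is wrong but scores highly. A larger $\beta$ limits that drift at the cost of slower improvement.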

Virtual Onsite Interview Day Structure

Coding (2 rounds, 60 min each)

The first task was to design and implement a ResponseSafetyFilter class for detecting and handling potentially unsafe AI responses. The class needed to include the following functionalities: support for multiple safety check rules (with the ability to dynamically add new ones), scoring each response (on a scale of 0-1, where 1 indicates complete safety), generating detailed safety reports, supporting filtering strategies with varying levels of strictness, and implementing a caching mechanism to improve efficiency. The safety checks covered dimensions such as harmful content detection, bias detection, privacy leakage, misleading information, and inappropriate recommendations.

The second task was to implement a simplified version of a Constitutional AI training pipeline. The goal was to design and build a complete Constitutional AI training system, including defining constitutional principles, generating training data, training the language model, and evaluating its performance.
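To give a feel for the shape of the first task, here is a minimal sketch of a safety filter with pluggable rules, 0-1 scoring, a report object, strictness levels, and a cache. This is my own illustration, not the solution from the interview. The threshold values, the `SafetyReport` fields, the choice to take the minimum rule score as the overall score, and the toy `email_leak_rule` are all assumptions.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List

# A rule maps response text to a safety score in [0, 1], where 1 means fully safe.
SafetyRule = Callable[[str], float]

# Hypothetical strictness levels: minimum overall score a response needs to pass.
THRESHOLDS: Dict[str, float] = {"lenient": 0.3, "standard": 0.6, "strict": 0.9}


@dataclass
class SafetyReport:
    overall_score: float
    rule_scores: Dict[str, float]
    failed_rules: List[str]
    passed: bool


class ResponseSafetyFilter:
    def __init__(self, strictness: str = "standard"):
        if strictness not in THRESHOLDS:
            raise ValueError(f"unknown strictness: {strictness!r}")
        self.threshold = THRESHOLDS[strictness]
        self.rules: Dict[str, SafetyRule] = {}
        self._cache: Dict[str, SafetyReport] = {}

    def add_rule(self, name: str, rule: SafetyRule) -> None:
        """Register a rule at runtime; cached reports become stale, so drop them."""
        self.rules[name] = rule
        self._cache.clear()

    def check(self, text: str) -> SafetyReport:
        """Score one response against every rule, reusing a cached report if present."""
        if text in self._cache:
            return self._cache[text]
        # Clamp each rule's output into [0, 1] in case a rule misbehaves.
        scores = {name: min(1.0, max(0.0, rule(text))) for name, rule in self.rules.items()}
        # Assumption: the weakest dimension determines the overall score.
        overall = min(scores.values(), default=1.0)
        failed = [name for name, score in scores.items() if score < self.threshold]
        report = SafetyReport(overall, scores, failed, overall >= self.threshold)
        self._cache[text] = report
        return report

    def filter(self, responses: List[str]) -> List[str]:
        """Keep only the responses that pass at the configured strictness."""
        return [text for text in responses if self.check(text).passed]


def email_leak_rule(text: str) -> float:
    """Toy privacy rule: anything that looks like an email address scores 0."""
    return 0.0 if re.search(r"[\w.+-]+@[\w-]+\.[\w.]+", text) else 1.0


safety_filter = ResponseSafetyFilter(strictness="strict")
safety_filter.add_rule("privacy_leakage", email_leak_rule)
kept = safety_filter.filter(
    ["Contact me at alice@example.com", "Paris is the capital of France."]
)
print(kept)  # ['Paris is the capital of France.']
```

Taking the minimum rather than a weighted average is a deliberately conservative choice: one serious failure cannot be averaged away by good scores elsewhere. In an interview, the reasoning behind that kind of decision is worth stating explicitly.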

Overall, the coding challenges placed a strong emphasis on AI safety engineering skills, focusing not on pure algorithms but on real-world AI safety scenarios. They tested engineering thinking, i.e., the ability to translate research concepts into deployable systems, as well as modular and scalable architecture design. More importantly, they assessed the understanding of multidimensional safety evaluation, including balancing harmlessness and helpfulness, the reasoning behind safety threshold design, and the ability to make trade-offs in a principled way.

In summary, every task from Anthropic was highly challenging and assessed candidates across multiple dimensions in a comprehensive manner.

System Design Interview

If the coding round is about testing your ability to build AI components, the system design round is about testing your ability to build the factory that produces them. This is often the most demanding round, requiring knowledge of hardware, networking, and distributed systems. A strong answer needs to incorporate all three types of parallelism (data, pipeline, and tensor) while understanding the hardware constraints that dictate when to use each. More advanced follow-ups explore real-world challenges, like the "straggler problem" during synchronized training across thousands of GPUs. Before the interview, I practiced common system design topics like recommendation systems, fraud detection, real-time translation, search ranking, and content moderation.

On the day of the interview, my task was to design a large-scale Constitutional AI training and deployment system. The interviewer explained:

"You need to design an end-to-end system to support the training, evaluation, deployment, and continuous improvement of Constitutional AI models. This system needs to: enable parallel training for multiple generations of Claude models, handle terabytes of training data and human feedback daily, implement real-time safety monitoring and intervention, support A/B testing and gradual rollouts, and ensure explainability and auditability of the training process."

Here’s how I approached it:

Core functional requirements:

Support the staged Constitutional AI training process (a supervised phase built on self-critique and revision, followed by an RL phase that uses AI-generated preference feedback) and real-time collection/processing of human feedback.

Enable multidimensional safety evaluations and automatic flagging/intervention.

Provide model versioning and rollback capabilities.

Offer real-time monitoring and debugging during training.

Facilitate fast experimental iteration for research teams.

Non-functional requirements:

Scalability: Must support 100B+ parameter models and serve tens of millions of daily active users.

Availability: 99.9% uptime, with <10 minutes recovery time from training interruptions.

Security: Multi-layered protection, sensitive data encryption, and strict access controls.

Performance: Inference latency <2 seconds, training throughput >1000 samples/sec.

Compliance: Adhere to data privacy regulations and allow user data deletion.
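It helps to turn an availability target into a concrete downtime budget before discussing how to meet it. The sketch below does that arithmetic for the 99.9% figure above; the function name and the 30-day month are my own assumptions.

```python
def downtime_budget_minutes(availability: float, period_days: int) -> float:
    """Minutes of allowed downtime over a period for a given availability target."""
    return (1.0 - availability) * period_days * 24 * 60


# 99.9% uptime leaves roughly 43 minutes per 30-day month
# and roughly 526 minutes (about 8.8 hours) per 365-day year.
monthly = downtime_budget_minutes(0.999, 30)
yearly = downtime_budget_minutes(0.999, 365)
print(f"{monthly:.1f} min/month, {yearly:.1f} min/year")
```

A budget of about 43 minutes a month is small enough that rollbacks and failover have to be automated rather than handled by hand.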

Technical constraints:

Budget: Cloud computing costs must be reasonably controlled.

Team: 20-person research and engineering team.

Timeline: 6 months to deliver an MVP, 12 months for a full system.

Regulation: Must support government audits and transparency requirements.

The interviewer’s feedback was positive overall. Then I further detailed each module:

Data Pipeline Module: For storage, I’d use an object store like Amazon S3 or Google Cloud Storage as the data lake. To accelerate data preprocessing (e.g., cleaning, tokenization, and formatting), I’d use Apache Spark or Ray. I’d also use Kafka to carry the real-time feedback data streams with low latency.

Model Training Module: To scale training, I’d split each batch across multiple GPU nodes (data parallelism), shard the weight matrices within layers across devices (tensor parallelism), and partition the model’s layers into sequential stages fed by micro-batches (pipeline parallelism). Checkpointing would let interrupted runs resume. The "straggler problem" in large-scale synchronized training needs separate handling, for example by detecting slow nodes and swapping in spares.

Safety Monitoring Module: First, I’d integrate existing content moderation tools (e.g., OpenAI Moderation API) for automated detection. High-priority alerts would be cached in Redis and trigger real-time interventions. Finally, all detection results would be stored in Elasticsearch to support visualization and generate audit reports.

System Monitoring and Auditing Module: I’d use Prometheus + Grafana for real-time system performance monitoring. To ensure transparency, I’d incorporate explainability tools like SHAP or LIME to generate interpretable reports on model behavior.
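For the training module, it can help to show how the three kinds of parallelism (data, pipeline, and tensor) divide up a fixed pool of GPUs. The sketch below is only an illustration. The function names are hypothetical, and the rank ordering, with the tensor index varying fastest so that tensor-parallel peers sit on adjacent ranks, is one common convention rather than a rule.

```python
from typing import Tuple


def data_parallel_degree(world_size: int, tensor_parallel: int, pipeline_parallel: int) -> int:
    """Number of model replicas left once each replica's GPUs are accounted for."""
    gpus_per_replica = tensor_parallel * pipeline_parallel
    if world_size % gpus_per_replica != 0:
        raise ValueError("world_size must be divisible by tensor_parallel * pipeline_parallel")
    return world_size // gpus_per_replica


def rank_to_coords(rank: int, tensor_parallel: int, pipeline_parallel: int) -> Tuple[int, int, int]:
    """Map a flat GPU rank to (data, pipeline, tensor) indices, tensor index fastest."""
    tensor_idx = rank % tensor_parallel
    pipeline_idx = (rank // tensor_parallel) % pipeline_parallel
    data_idx = rank // (tensor_parallel * pipeline_parallel)
    return data_idx, pipeline_idx, tensor_idx


# 64 GPUs with 8-way tensor and 4-way pipeline parallelism leave 2 data-parallel replicas.
print(data_parallel_degree(64, 8, 4))  # 2
print(rank_to_coords(13, 8, 4))        # (0, 1, 5)
print(rank_to_coords(45, 8, 4))        # (1, 1, 5)
```

Ranks 13 and 45 hold the same shard of the same pipeline stage in different replicas, so they are the pair that would all-reduce gradients with each other in data parallelism.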

Behavioural (Culture Fit & Leadership) (~50 min)

In 2026, exceptional technical skills alone won’t secure an offer if a candidate is perceived as a “safety risk.” This is especially true at Anthropic. Interviewers look for a delicate balance: neither recklessness nor excessive caution, but what is known as “responsible scaling.”

Key areas of assessment include: Reinforcement Learning from Human Feedback (RLHF), Constitutional AI (particularly relevant to Anthropic), red-teaming, alignment, adversarial robustness, fairness, and privacy protection.

Certain behavioral “red flags” will directly disqualify you from consideration:

Exhibiting a “lone wolf” mentality and reluctance to collaborate.

Displaying arrogance in a field where rapid iteration means no one can know everything.

Showing that your motivation is purely driven by “getting rich” rather than genuinely aligning with and contributing to the lab’s core mission.

As for other behavioral interview components, most follow classic questions and answers, with responses structured using the STAR method.

Preparation Strategies

Anthropic’s technical interviews will inevitably tie into topics like safety and explainability—make sure to proactively bring these up in both coding and system design discussions.

Their official blog and research papers are goldmines of information. Be prepared for questions about Claude’s architecture and training optimization strategies, as these often come up in interviews.

Also, be ready to discuss how you would debug and iterate when model performance falls short of expectations—this is a great opportunity to demonstrate a growth mindset.

Leverage AI tools to boost your coding efficiency. Anthropic even provides official guidelines on this, and Claude Opus can significantly enhance your productivity during programming tasks!

FAQ

What should I wear for the onsite interview?

I chose smart-casual clothes. A neat shirt and trousers worked well. The team seemed relaxed, so I felt comfortable. If you’re unsure, go for tidy and simple. You’ll fit right in.

Can I use AI tools during the coding assessment?

Yes, Anthropic lets you use your own development environment. I used Python and some profiling tools. Just make sure you explain your reasoning clearly. The team values your approach more than the tools.

Does Anthropic sponsor visas for international candidates?

Anthropic does sponsor visas for some roles. I brought my passport and ID for verification. If you need a visa, ask the recruiter early. They’ll guide you through the process.

What topics should I focus on when preparing?

I spent most of my time on Python, algorithms, and machine learning basics. System design and profiling also came up. Reviewing Anthropic’s research helped me connect my answers to their mission.

How do I handle job search anxiety?

I felt anxious too. I talked to friends, practiced mock interviews, and reminded myself to stay authentic. Anthropic values curiosity and honesty. Sharing my story helped me relax and connect with the team.

What happens if I don’t pass a stage?

If I didn’t pass, the recruiter gave me feedback. Sometimes, they suggested areas to improve. I learned from each step and tried again later. Anthropic encourages growth and learning.
