My Anthropic Research Engineer Interview Process in 2026

I'm a CS master's student at UCLA's Samueli School of Engineering, focusing on algorithms with concentrations in Deep Learning, RL, Trustworthy AI, and Distributed Systems. During my program I worked on an Agent Safety project, and during my internship I got hands-on experience with large-scale RLHF deployment (both PPO and DPO).

This year I applied for the Research Engineer position at Anthropic's SF headquarters. Most of their headcount is in SF, with a few spots in NY and Seattle. This round they're mainly hiring for junior-level roles, targeting CS/Stats/EE grads from the classes of 2025 through 2028 (both undergrad and grad).

Honestly, the interview process was pretty brutal: the bar is insanely high. They really drill down on your understanding of math fundamentals, your technical chops, and your past research work (which makes sense for a research role). I figured I'd share my experience in case it helps others going through the same process.

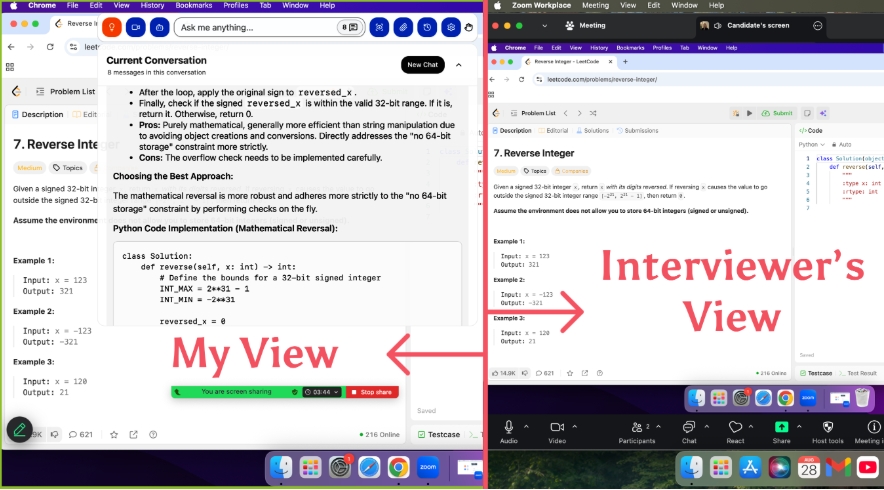

For CS students, most people are looking at a grueling 5-6 round interview process. To save your energy and focus on prepping for the tough on-site rounds, I'd recommend using a real-time AI interview assistant to help you get through the OA and recruiter screening stages.

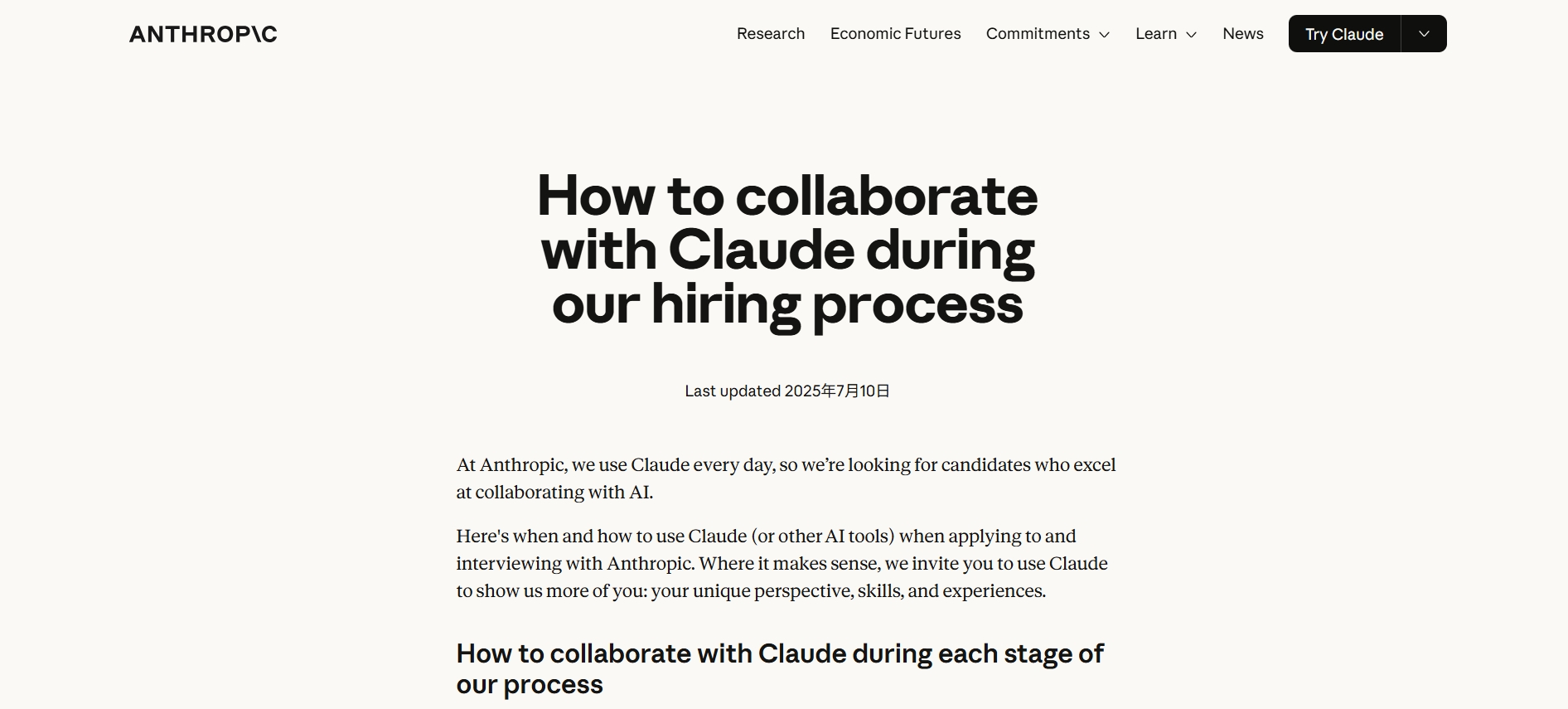

This AI interview assistant can generate answers in real-time based on what's on your screen and the interviewer's voice, and it's completely undetectable. It's honestly a solid investment for your job search! Don't feel guilty or weird about using AI tools - Anthropic themselves actually encourage new grads to use Claude to prep efficiently for their interviews:

Overview of the Anthropic Research Engineer Interview Process

Interview Timeline and Stages

This role is all about deeply integrating research and engineering, with a heavy emphasis on deploying cutting-edge tech in real systems. The focus is on the safety of LLM agents in real production environments: how agents experience intent drift in complex task chains, how model behavior shifts during multi-step reasoning and tool use, and how to detect and intervene at the system level.

The core research revolves around modeling and detecting Agent intent drift: understanding how goal shifts happen in multi-step reasoning, catching permission overreach in tool-calling chains, analyzing parameter tampering and injection attacks, attributing failure modes in complex decision chains (tracing from execution logs back to model mechanisms), and running anomaly detection on model behavior using internal signals like attention patterns and activations.
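To make "anomaly detection on internal signals" a bit more concrete, here is a minimal sketch of one common, generic approach (not a description of Anthropic's tooling): fit a Gaussian to activation vectors collected on known-good runs, then flag new activations whose Mahalanobis distance exceeds a threshold calibrated on held-out benign data. The activations below are synthetic NumPy arrays standing in for, say, a layer's hidden states captured with a forward hook; `fit_detector` and `mahalanobis_scores` are hypothetical helper names.

```python
import numpy as np


def fit_detector(benign_acts: np.ndarray, ridge: float = 1e-3):
    """Fit a Gaussian (mean, inverse covariance) to benign activations of shape (n, d)."""
    mean = benign_acts.mean(axis=0)
    cov = np.cov(benign_acts, rowvar=False)
    # A small ridge term keeps the covariance invertible when n is not much larger than d.
    cov += ridge * np.eye(cov.shape[0])
    return mean, np.linalg.inv(cov)


def mahalanobis_scores(acts: np.ndarray, mean: np.ndarray, cov_inv: np.ndarray) -> np.ndarray:
    """Mahalanobis distance of each row of `acts` from the benign distribution."""
    diff = acts - mean
    return np.sqrt(np.einsum("nd,de,ne->n", diff, cov_inv, diff))


rng = np.random.default_rng(0)
benign = rng.normal(size=(2000, 16))  # stand-in for activations on normal traces
mean, cov_inv = fit_detector(benign)

# Calibrate the threshold at the 99th percentile of held-out benign scores.
held_out = rng.normal(size=(500, 16))
threshold = np.quantile(mahalanobis_scores(held_out, mean, cov_inv), 0.99)

shifted = rng.normal(loc=3.0, size=(5, 16))  # stand-in for a strong behavioral shift
flags = mahalanobis_scores(shifted, mean, cov_inv) > threshold
print(flags)  # expected: all True for this strongly shifted synthetic batch
```

The percentile threshold trades false positives for recall; real drift is usually far subtler than this synthetic shift, so the interesting work is in choosing which layer and which signal to monitor.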

The interview process usually consists of 5-6 rounds spanning 4-5 weeks in total.

Recruiter call (30 min): They'll ask about your background, why you're interested in Anthropic, and how well you understand their value proposition, and they'll explain what it means for Anthropic to be a public benefit corporation (PBC). Expect a heavy focus on "Why Anthropic?" and on your thoughts about AI safety and their HHH values (Helpful, Honest, Harmless).

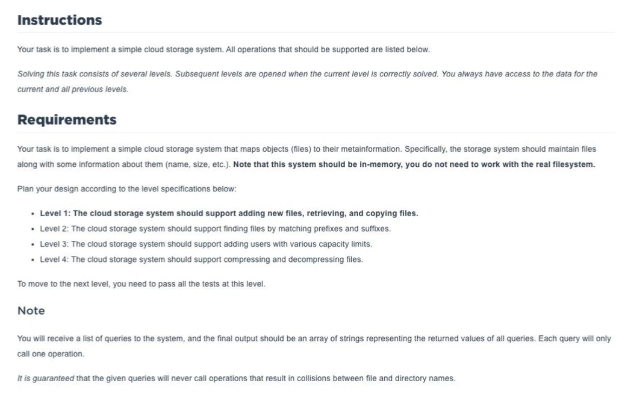

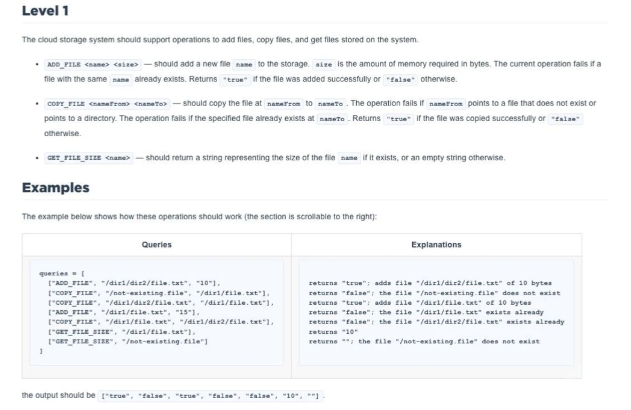

Coding and Specification Compliance

Coding challenge/Take-home Project (60-90 min): Most people get a 90-minute CodeSignal assessment that feels like a standard OA, though some get a 60-minute live assessment depending on the role. Traditional LeetCode algorithm problems are rare; the tasks are much more hands-on. The live versions usually happen on CoderPad or a similar platform in a pair-programming format. Common problems include:

Building a Transformer component from scratch in PyTorch (such as multi-head attention);

Debugging broken model training code;

Implementing core algorithms from classic papers.
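As an illustration of the first problem type, here's a compact sketch of causal multi-head self-attention in PyTorch, written in the minGPT/nanoGPT style. It's one reasonable way to write it rather than a reference solution any interviewer expects; the class name, the fused QKV projection, and the `causal` flag are my own choices.

```python
import math

import torch
import torch.nn as nn
import torch.nn.functional as F


class MultiHeadAttention(nn.Module):
    """Multi-head self-attention with an optional causal mask."""

    def __init__(self, d_model: int, n_heads: int):
        super().__init__()
        assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
        self.n_heads = n_heads
        self.d_head = d_model // n_heads
        # One fused projection produces Q, K and V; a second mixes the heads back.
        self.qkv = nn.Linear(d_model, 3 * d_model)
        self.out = nn.Linear(d_model, d_model)

    def forward(self, x: torch.Tensor, causal: bool = True) -> torch.Tensor:
        B, T, C = x.shape
        q, k, v = self.qkv(x).split(C, dim=-1)
        # (B, T, C) -> (B, n_heads, T, d_head) so one batched matmul covers all heads.
        q, k, v = (t.view(B, T, self.n_heads, self.d_head).transpose(1, 2) for t in (q, k, v))
        scores = q @ k.transpose(-2, -1) / math.sqrt(self.d_head)  # (B, h, T, T)
        if causal:
            # Mask out positions j > i so token i only attends to itself and the past.
            mask = torch.triu(torch.ones(T, T, dtype=torch.bool, device=x.device), diagonal=1)
            scores = scores.masked_fill(mask, float("-inf"))
        attn = F.softmax(scores, dim=-1)
        y = attn @ v  # (B, h, T, d_head)
        # Merge heads: transpose back, make memory contiguous, then flatten to (B, T, C).
        y = y.transpose(1, 2).contiguous().view(B, T, C)
        return self.out(y)


torch.manual_seed(0)
mha = MultiHeadAttention(d_model=32, n_heads=4)
x = torch.randn(2, 5, 32)
print(mha(x).shape)  # torch.Size([2, 5, 32])
```

The head-merge line is a classic spot for the "debug this code" variant: calling `.view` directly after `.transpose` raises an error because the tensor is no longer contiguous, so you need `.contiguous()` first (or `.reshape`).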

Hiring Manager call (1 hour): Mostly a technical discussion, split into two parts. First you talk through projects you've completed; then you review code samples in different languages, spotting issues and explaining what the code does.

Virtual Onsite (4-5 hours): There's a 30-minute break between rounds.

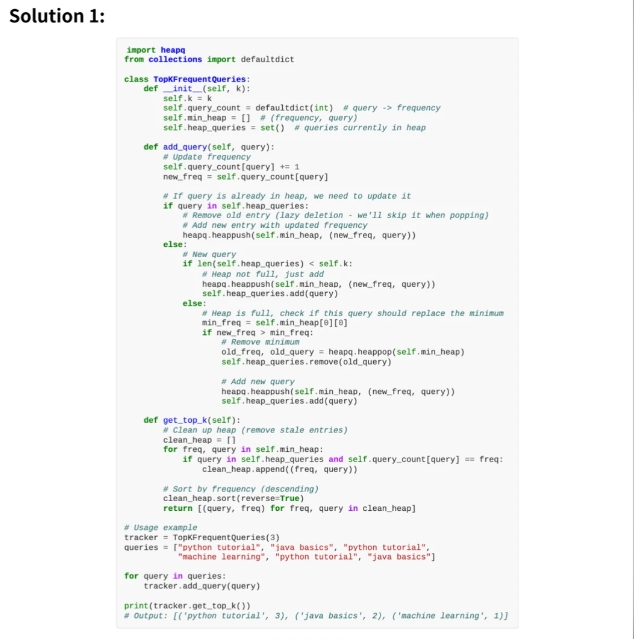

Pair Programming/ML Engineering (1h): Focus on algorithms and data structures, but way more practical than typical LeetCode problems. This is an absolutely hardcore coding round.

System Design Scenarios

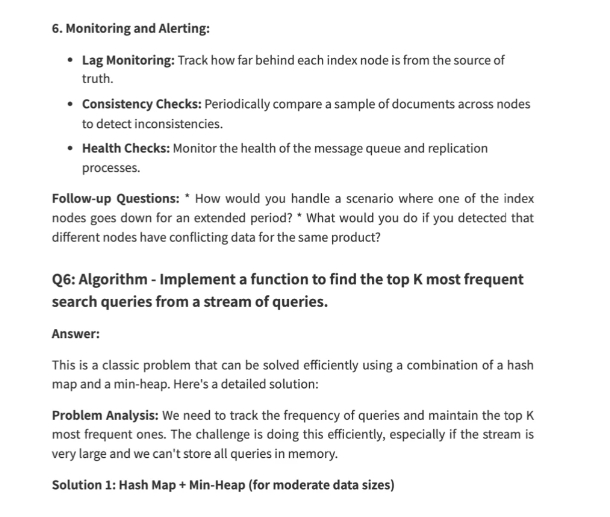

System Design (1h): This mainly tests system design for training large models, usually tied to real problems Anthropic has faced. Example prompts include designing a system that lets a GPT-style model handle multiple queries on a single thread, Claude's chat service, or a banking application. Honestly, it feels like Anthropic's RE roles demand an insanely high level of engineering skill, even more than pure paper-publishing ability. The interviewers couldn't care less whether you can reverse a binary tree; they care whether you can write efficient PyTorch code. They'll throw very practical research problems at you, for example: "During pretraining of a 100B-parameter model, you suddenly see loss spikes. How do you debug this? Is it a data issue, a learning-rate problem, or a hardware failure?"
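The loss-spike question is open-ended, but one concrete first step is to pin down exactly which steps spiked and line them up with gradient norms and the batches seen at those steps. Below is a minimal sketch under some assumptions: per-step losses and global gradient norms are available as plain arrays, and the rolling median/MAD rule, the `k=6` threshold, and the `triage` rules are illustrative heuristics, not a standard recipe or Anthropic's actual process.

```python
import numpy as np


def find_spikes(losses, window: int = 50, k: float = 6.0):
    """Return steps where the loss jumps far above its recent rolling median.

    The median absolute deviation (MAD) is used instead of mean/std so that
    a spike already inside the window does not inflate the baseline.
    """
    losses = np.asarray(losses, dtype=float)
    spikes = []
    for t in range(window, len(losses)):
        recent = losses[t - window:t]
        med = np.median(recent)
        mad = np.median(np.abs(recent - med)) + 1e-8
        if losses[t] > med + k * mad:
            spikes.append(t)
    return spikes


def triage(step, grad_norms, grad_clip: float = 1.0) -> str:
    """Hypothetical first-look heuristic pointing at where to investigate."""
    g = float(grad_norms[step])
    if not np.isfinite(g):
        return "non-finite grad: check low-precision overflow or a faulty device"
    if g > 10 * grad_clip:
        return "grad-norm blowup: check LR schedule/warmup and the batch at this step"
    return "normal grad norm: inspect the data batch at this step"


# Synthetic logs: a gently oscillating loss with one injected spike at step 200.
steps = np.arange(300)
losses = 2.0 + 0.01 * np.sin(steps)
losses[200] = 4.0
grad_norms = np.full(300, 0.5)
grad_norms[200] = 25.0

spikes = find_spikes(losses)
print(spikes)  # [200]
for s in spikes:
    print(s, triage(s, grad_norms))
```

A common next step is to restart from the last checkpoint and replay the same batch: a spike that reproduces deterministically points toward data or optimization rather than a transient hardware fault.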

On the math side, some interviewers dive deep into optimizer math, for example how AdamW's momentum mechanism behaves in specific non-convex optimization scenarios. I'd suggest focusing on these areas when prepping:

Linear Algebra: Knowing how to multiply matrices isn't enough; you need to understand what the multiplication means geometrically. Interview content includes eigenvalues and eigenvectors (and their relationship to the Hessian), rank and singularity (relevant to techniques like LoRA), and matrix decompositions (SVD and PCA, e.g., for model compression).

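Calculus and Optimization: Backprop rarely shows up as "explain backprop" and much more often as "derive the gradient for this specific custom layer." You need a deep understanding of automatic differentiation, including the difference between forward mode and reverse mode and why reverse mode wins for training: for a scalar loss over many parameters, one reverse pass yields the full gradient, while forward mode needs one pass per input direction.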

Probability & Statistics: Maximum likelihood estimation, properties of key distributions (core to VAEs and diffusion models), and Bayesian inference.
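For the "derive the gradient for this custom layer" style of question, here's a small worked example using a layer I picked for illustration, y = x · σ(x) (SiLU/Swish). By the product rule, dy/dx = σ(x) + x · σ(x)(1 − σ(x)). The sketch writes that derivative into a `torch.autograd.Function` and checks it against finite differences with `torch.autograd.gradcheck`. Note that `backward` receives the upstream gradient and returns a vector-Jacobian product, which is exactly the reverse-mode primitive.

```python
import torch


class SiLUFn(torch.autograd.Function):
    """y = x * sigmoid(x) with a hand-derived backward pass."""

    @staticmethod
    def forward(ctx, x):
        ctx.save_for_backward(x)
        return x * torch.sigmoid(x)

    @staticmethod
    def backward(ctx, grad_out):
        (x,) = ctx.saved_tensors
        s = torch.sigmoid(x)
        # dy/dx = s + x * s * (1 - s); multiplying by grad_out gives the VJP.
        return grad_out * (s + x * s * (1 - s))


# gradcheck compares the analytic backward against finite differences;
# it expects double precision inputs for a reliable comparison.
x = torch.randn(8, dtype=torch.double, requires_grad=True)
print(torch.autograd.gradcheck(SiLUFn.apply, (x,)))  # True
```

In an interview, writing the derivative down first and then validating it numerically is a good habit to narrate out loud, since it shows you can catch your own algebra mistakes.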

Second Coding (1h): Some roles have this. I didn't encounter it.

Behavioral and Culture Fit Interviews

Behavioral & Research Deep Dive (1h): This round is conversational and very different from a traditional interview. Questions revolve around AI ethics, data protection, safety, the job market, and knowledge sharing. They'll also deep-dive into your past research projects: you need to not only explain your own papers clearly, but also be able to work through the math of current cutting-edge architectures (like MoE, Mamba, and various attention variants).

Scaling Laws: A deep discussion of your understanding of scaling laws and how to use small-scale experiments to predict large-scale model performance.

Alignment Values: Personally, I feel Anthropic is extremely safety-focused. In the culture round you'll get questions like: "If you found your team wanted to sacrifice some red-teaming test time to ship a model faster, what would you do?" (Remember: at Anthropic, safety always trumps speed.)
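On the scaling-laws discussion: a common way to use small runs to predict large ones is to fit a power law with an irreducible-loss term, L(N) = a · N^(−α) + c, to final losses versus parameter count N, then extrapolate. Here's a minimal NumPy-only sketch. The data are noise-free synthetic values generated from a known law (not real measurements), and the fitting trick (grid-search c, then a log-log linear fit for each candidate) is one simple choice among several.

```python
import numpy as np


def fit_power_law(n_params, losses, n_grid: int = 2000):
    """Fit L(N) = a * N**(-alpha) + c.

    For a fixed irreducible loss c, log(L - c) = log(a) - alpha * log(N) is a
    straight line in log(N), so we grid-search c and do a linear fit for each.
    """
    log_n = np.log(np.asarray(n_params, dtype=float))
    L = np.asarray(losses, dtype=float)
    best = None
    # c must stay strictly below every observed loss for log(L - c) to exist.
    for c in np.linspace(0.0, L.min() - 1e-6, n_grid):
        slope, intercept = np.polyfit(log_n, np.log(L - c), 1)
        resid = np.log(L - c) - (intercept + slope * log_n)
        sse = float(np.sum(resid ** 2))
        if best is None or sse < best[0]:
            best = (sse, float(np.exp(intercept)), float(-slope), float(c))
    _, a, alpha, c = best
    return a, alpha, c


# Synthetic "small-run" losses from a known law (illustrative, not real data).
n_small = np.array([1e6, 3e6, 1e7, 3e7, 1e8])
losses = 1.7 + 400.0 * n_small ** -0.3

a, alpha, c = fit_power_law(n_small, losses)
pred = a * 1e10 ** -alpha + c  # extrapolate 100x beyond the largest run
print(f"alpha={alpha:.3f}, c={c:.3f}, predicted L(1e10)={pred:.3f}")  # true value: 2.1
```

Fitting in log space weights relative rather than absolute errors, which matters when losses span several model sizes. With real, noisy runs, the interesting interview discussion is how sensitive the extrapolation is to c and to the smallest runs you include.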

The recruiter call kicked things off, and I talked about my background and why I wanted to join Anthropic. The online coding challenge came next: I had 90 minutes to solve tough problems, and the solutions had to be fully correct. After that, I met with a hiring manager for a deep dive into my experience. The technical interviews were intense: four or five rounds, each almost an hour, covering coding, system design, and machine learning. The final steps, reference checks and team matching, took a few weeks. The whole process tested my patience and focus.

Interview Process Preparation Strategies

This is my prep advice for candidates:

Go hard on PyTorch, and don't spend too much time grinding LeetCode. Find Andrej Karpathy's minGPT or nanoGPT on GitHub, read through it line by line until you understand it, then try writing it from scratch without looking at the source. Also practice writing distributed training scripts in PyTorch (DDP/FSDP).

Deep-dive into Anthropic's papers and blogs: you absolutely need to read their work on Constitutional AI, scaling laws, and mechanistic interpretability (especially the feature extraction work on Claude 3 Sonnet, which they're really proud of).

If you have research experience, research-round interviewers love asking: "Tell me about an experiment you spent ages on that totally failed. What did you learn?" They value your ability to navigate ambiguity when there's no clear answer.

Do mock pair-programming sessions with friends who have similar backgrounds. You need to not only write correct code but also communicate your thought process clearly while coding (think out loud). In Anthropic interviews, smooth communication (cultural and intellectual fit) matters just as much as getting your code to run.
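For the DDP practice suggested earlier in this list, here's a minimal single-file sketch: a toy linear-regression model wrapped in `DistributedDataParallel`, with a `DistributedSampler` so each rank trains on its own shard. It uses the CPU `gloo` backend so it runs without GPUs; the toy data, the port number, and the hyperparameters are illustrative assumptions, not anything from the interviews.

```python
import torch
import torch.distributed as dist
import torch.nn as nn
from torch.nn.parallel import DistributedDataParallel as DDP
from torch.utils.data import DataLoader, DistributedSampler, TensorDataset


def train(rank: int, world_size: int, port: str = "29512", epochs: int = 20) -> float:
    """One DDP worker: trains a toy linear model on this rank's data shard."""
    dist.init_process_group(
        "gloo", init_method=f"tcp://127.0.0.1:{port}", rank=rank, world_size=world_size
    )
    try:
        torch.manual_seed(0)  # identical synthetic dataset on every rank
        X = torch.randn(512, 4)
        y = X @ torch.tensor([[1.0], [-2.0], [0.5], [3.0]])
        ds = TensorDataset(X, y)
        # Each rank iterates over a disjoint shard of the dataset.
        sampler = DistributedSampler(ds, num_replicas=world_size, rank=rank)
        loader = DataLoader(ds, batch_size=32, sampler=sampler)
        # DDP broadcasts rank 0's initial weights and averages gradients across ranks.
        model = DDP(nn.Linear(4, 1))
        opt = torch.optim.SGD(model.parameters(), lr=0.05)
        for epoch in range(epochs):
            sampler.set_epoch(epoch)  # different shuffle each epoch
            for xb, yb in loader:
                opt.zero_grad()
                loss = nn.functional.mse_loss(model(xb), yb)
                loss.backward()  # gradient all-reduce happens during backward
                opt.step()
        with torch.no_grad():
            final_mse = nn.functional.mse_loss(model(X), y).item()
        if rank == 0:
            print(f"final MSE: {final_mse:.6f}")
        return final_mse
    finally:
        dist.destroy_process_group()


if __name__ == "__main__":
    # Single-process smoke test.
    train(rank=0, world_size=1)
```

To run two workers on one machine, replace the last line with `torch.multiprocessing.spawn(train, args=(2,), nprocs=2)`, which passes each process its rank as the first argument. Under `torchrun` you would instead read `RANK` and `WORLD_SIZE` from the environment and use `init_method="env://"`; on GPUs the usual backend is `nccl`. An FSDP version keeps the same loop structure but wraps the model in `FullyShardedDataParallel`.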

If you want to succeed, start early, stay curious, and never stop improving. You’ve got this!

FAQ

What programming language should I use for the coding rounds?

I used Python for all my interviews. Anthropic prefers Python because it’s clear and readable. If you feel more comfortable with another language, ask your recruiter first. I stuck with what I knew best.

How technical are the behavioral interviews?

The behavioral interviews felt more like conversations. I talked about teamwork, handling conflict, and making decisions. Sometimes, I discussed technical projects, but the focus stayed on how I think and communicate.

What if I get stuck during a coding interview?

I always explained my thought process out loud. If I got stuck, I asked clarifying questions or broke the problem into smaller steps. The interviewer wanted to see how I handled challenges, not just if I finished.

How important is knowing Anthropic’s mission?

It’s super important. I read their blog and research before my interviews. I made sure I could explain why AI safety matters to me. Showing that I cared about their mission helped me stand out.
