How I aced the Instacart CodeSignal assessment in 2026

I first heard about Instacart’s CodeSignal online assessment (OA) when a recruiter emailed me after I applied for a new-grad Software Engineer role. They said I’d be taking a CodeSignal assessment that Instacart uses to screen early-career candidates before interviews.

It is one timed session with four progressively harder problems, scored on CodeSignal’s familiar 300–850 scale. People often talk about “doing 2 out of 4 levels” or “solving all four levels,” and mention that a score somewhere in the high 400s or above is typically needed to move on, though this is anecdotal and can vary by role and year.

In my case, the OA was exactly that: a four-question CodeSignal session that felt like a focused, proctored version of LeetCode — but with stricter rules and higher stakes. After the OA, my process followed the pattern many candidates describe online: a short recruiter call, then a virtual onsite with multiple rounds (coding, data-pivoting / CSV manipulation, system design, and behavioral).

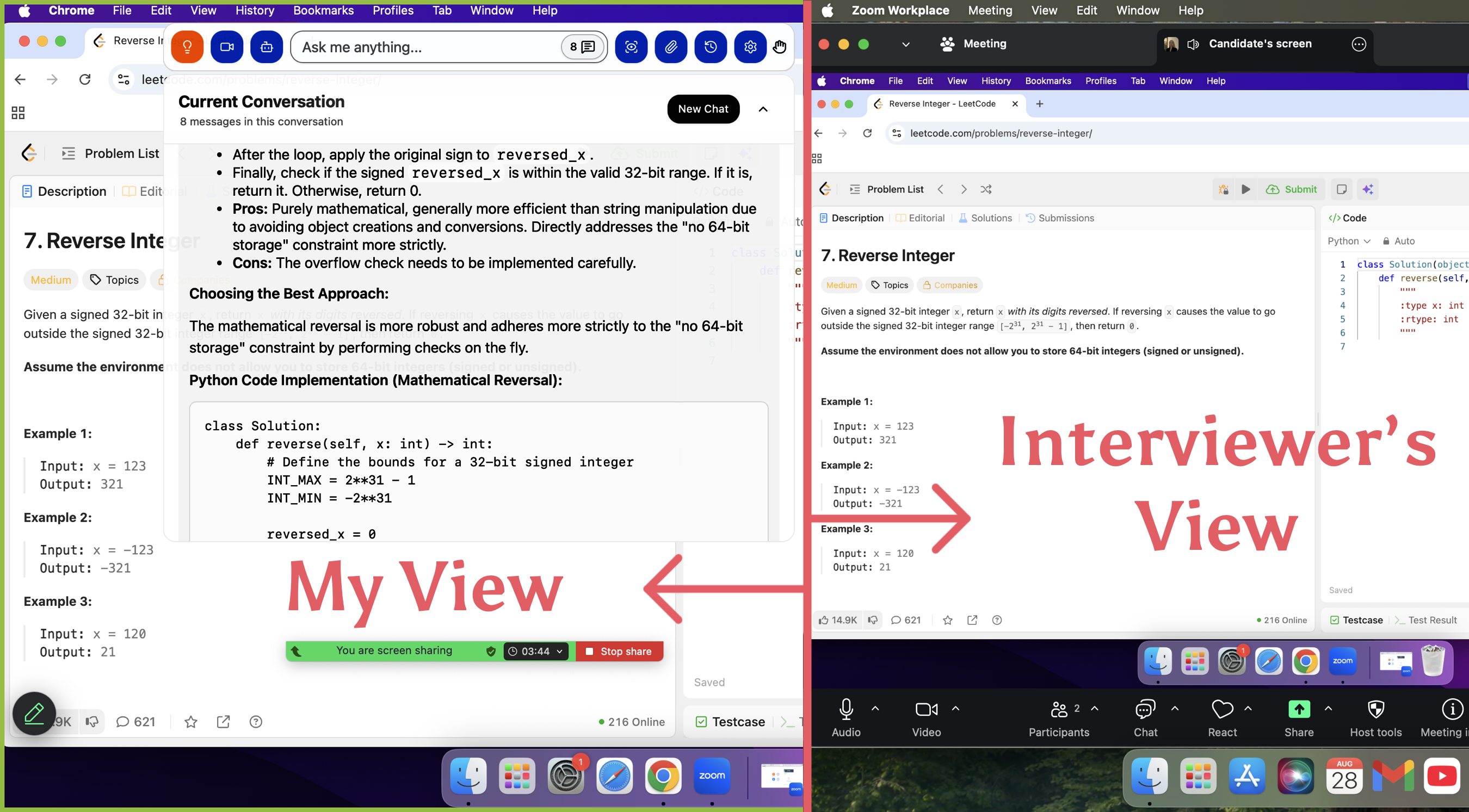

Instacart CodeSignal employs certain detection methods, such as 'suspicious score' detection. Because of this, I needed to find an application that truly provides AI support but is not easily detected. After searching extensively, I saw that the reviews for the LinkJob AI Interview assistant were quite good. After trying it out, I found that its screenshot analysis feature was genuinely helpful for solving CodeSignal problems. I did use this feature in my formal test and successfully passed it, which is why I'm willing to share my interview experience here

Instacart CodeSignal Assessment Structure

Instacart doesn’t publish their OA format publicly, but combining the official CodeSignal GCA docs with candidate reports gives a pretty clear picture of what to expect:

Platform: CodeSignal (Certified / Pre-Screen assessment)

Questions: 4 “levels” of increasing difficulty, all visible from the start

Duration: 70 minutes total (you decide how to split your time)

Scoring: 300–850, based on correctness, efficiency, and coverage of hidden tests

Languages: 40+ supported (Python, Java, C++, JavaScript, etc.) — choose your strongest language

Proctoring: Webcam + screen recording + plagiarism checks (no outside IDEs; behavior is monitored)

A typical high-level pipeline for Software Engineer roles (from Glassdoor and Reddit) looks like this:

1. Online application
2. CodeSignal OA (GCA-style, 4 levels)
3. Recruiter phone screen
4. Virtual onsite (≈4 hours):
   - 1–2 coding rounds (LeetCode medium-ish, sometimes with CSV / “data pivoting” style tasks)
   - 1 system design or API / data-model design round
   - 1 behavioral / background round

Passing the OA doesn’t guarantee an offer, but it’s basically the ticket that lets you even reach the onsite.

What My Instacart CodeSignal Assessment Felt Like

Although the exact questions change, CodeSignal’s GCAs tend to follow a 4-level difficulty curve: easy warm-up, simple medium, more involved implementation, and finally an algorithm-heavy question.

My Instacart OA matched that pattern:

Level 1 – Simple aggregation.

Parse structured input and compute counts / sums with a hash map.

LeetCode difficulty: Easy.

Level 2 – Time-window analytics.

Events with timestamps and “last X seconds” logic; implemented with a queue / sliding window.

LeetCode difficulty: Easy–Medium.

Level 3 – Graph traversal.

Undirected graph with hubs / warehouses; BFS or DFS to compute distances or reachable sets.

LeetCode difficulty: Medium.

Level 4 – Optimization under constraints.

A DP / greedy hybrid: choose tasks / deliveries / promotions to maximize profit subject to capacity/time limits.

LeetCode difficulty: high Medium to Hard.
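The exact Level 4 statement isn’t reproduced here, so the following is only a sketch of one common shape such a problem can take, assuming a 0/1 knapsack formulation: each hypothetical task has a time cost and a profit, and you pick a subset that fits a total time limit. The function name max_profit and its parameters are illustrative, not taken from the OA.

```python
from typing import List


def max_profit(costs: List[int], profits: List[int], limit: int) -> int:
    """0/1 knapsack: best total profit using at most `limit` units of time.

    Hypothetical formulation: task i takes costs[i] time and earns profits[i];
    each task can be chosen at most once.
    """
    # dp[c] = best profit achievable with total cost <= c
    dp = [0] * (limit + 1)
    for cost, profit in zip(costs, profits):
        # iterate capacity downwards so each task is counted at most once
        for c in range(limit, cost - 1, -1):
            dp[c] = max(dp[c], dp[c - cost] + profit)
    return dp[limit]
```

For example, max_profit([1, 3, 4, 5], [1, 4, 5, 7], 7) returns 9 by picking the tasks that cost 3 and 4. A greedy pass by profit per unit of time would take the tasks costing 5 and 1 for only 8, which is why a greedy first draft is worth checking against a DP when time allows.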

Because CodeSignal reuses questions across companies and years, you’ll see the same problem patterns again and again.

Below are two real CodeSignal-style questions that circulated widely in 2024–2025 and are excellent preparation for Instacart-level OAs.

Real Instacart CodeSignal Questions (With Solutions)

These are problems I encountered during the assessment, shared along with the solutions I wrote.

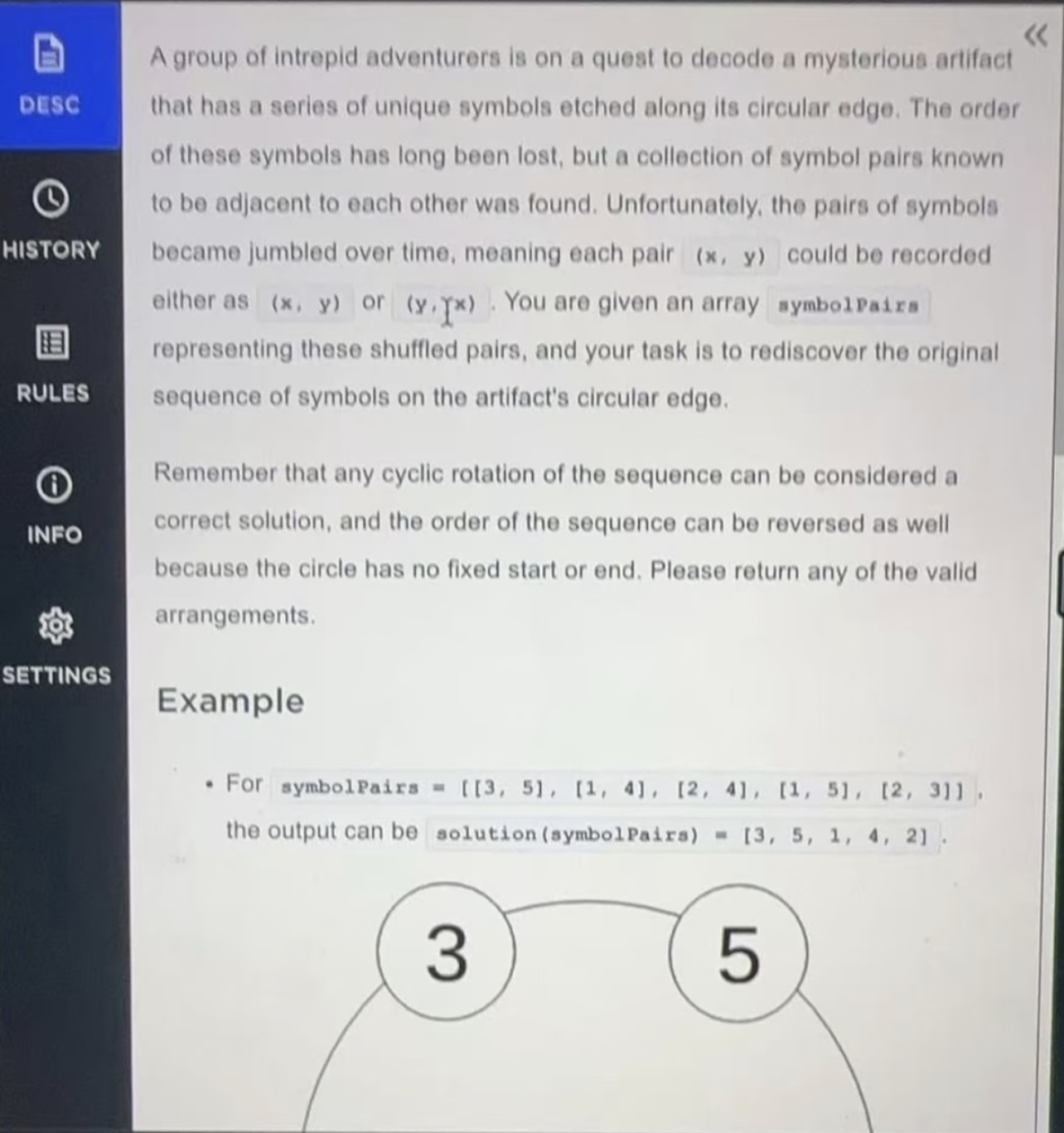

Question 1 – Reconstruct a Circular Symbol Sequence

(Graph / cycle reconstruction)

Story version

A circular artifact has unique symbols engraved around its edge. Archaeologists lost the original order, but they preserved a list of pairs of symbols that were adjacent on the circle. Each pair [x, y] could have been recorded in either order ([x, y] or [y, x]).

You’re given an array symbolPairs of these pairs. Your task is to reconstruct one valid cyclic ordering of the symbols.

Because the arrangement is circular:

Any rotation of the sequence counts as correct

You may also reverse the sequence

Example

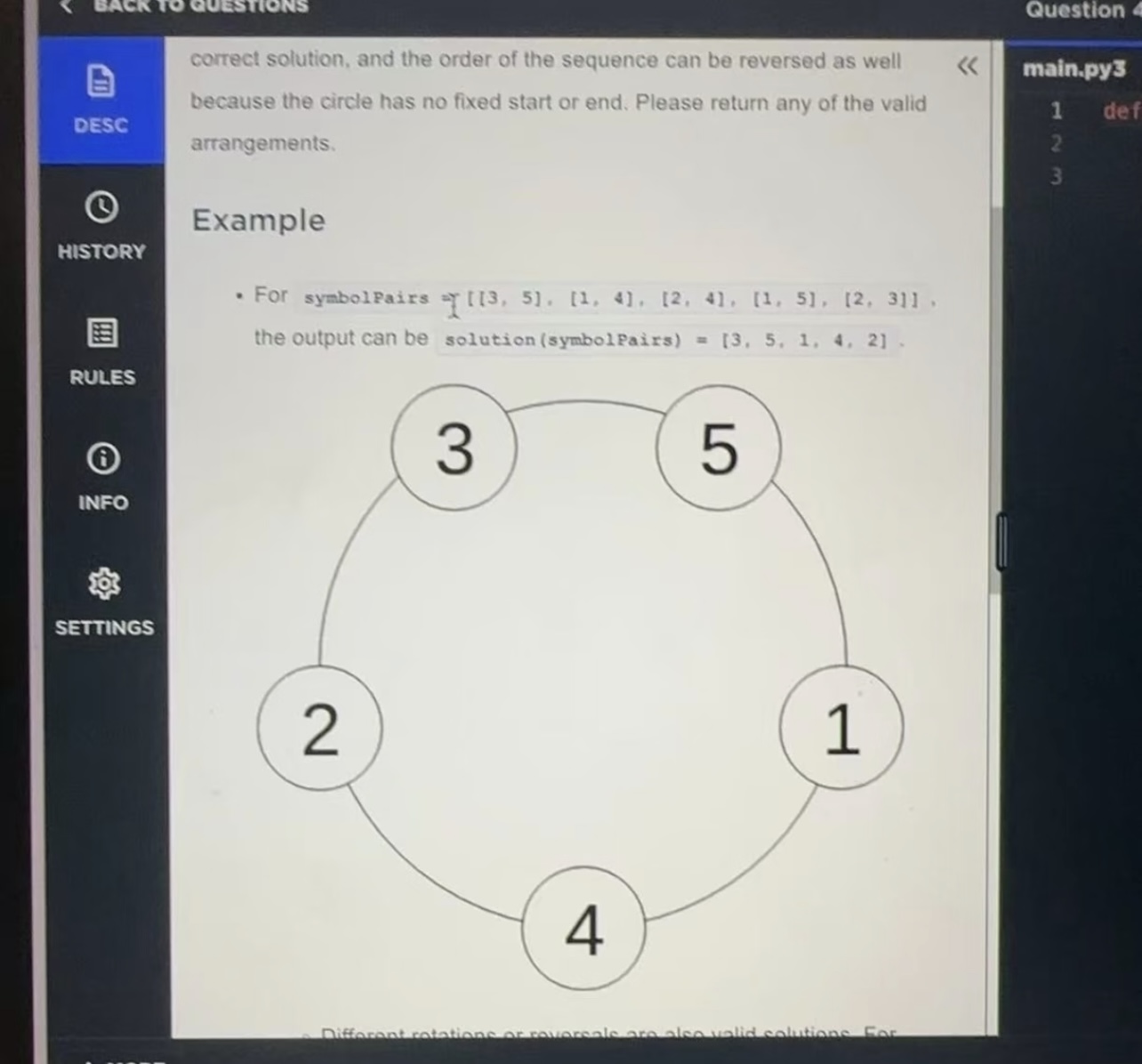

```
symbolPairs = [[3, 5], [1, 4], [2, 4], [1, 5], [2, 3]]

# One valid answer:
solution(symbolPairs) = [3, 5, 1, 4, 2]
```

This corresponds to the circle: 3–5–1–4–2–(back to 3).
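Because any rotation or reversal counts as correct, comparing your output with one expected list is not enough when testing locally. A small checker (hypothetical, not part of the problem) can instead compare the set of adjacent pairs in your answer, wrapping around from the last symbol to the first, with the recorded pairs, ignoring direction:

```python
from collections.abc import Hashable, Sequence
from typing import List


def is_valid_cycle(order: Sequence[Hashable], pairs: List[List[Hashable]]) -> bool:
    """Check that `order` is a valid circular arrangement for `pairs`.

    Each adjacent pair in `order` (including last -> first) must be one of the
    recorded pairs in either direction, and every recorded pair must be used,
    so rotations and reversals of a correct answer also pass.
    """
    n = len(order)
    if n != len(pairs) or len(set(order)) != n:
        return False
    expected = {frozenset(p) for p in pairs}
    actual = {frozenset((order[i], order[(i + 1) % n])) for i in range(n)}
    return actual == expected
```

With the example pairs, [3, 5, 1, 4, 2], its rotation [1, 4, 2, 3, 5] and its reversal [2, 4, 1, 5, 3] all pass, while [3, 1, 5, 4, 2] fails because 3 and 1 were never recorded as neighbours.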

Key idea: it’s just a simple cycle

Model this as an undirected graph:

Each symbol is a node.

Each pair [x, y] is an edge between x and y.

Because the original structure is a single circle:

Every node has degree 2 (two neighbours).

The graph is one simple cycle.

So the real question is:

Given the edges of a cycle, output the nodes in cyclic order.

This question is indeed a bit difficult. So I used linkjob.ai, which remains undetectable and thus evades detection by interview platforms.


Algorithm (adjacency + one walk)

Build an adjacency list.

```python
from collections import defaultdict

adj = defaultdict(list)
for a, b in symbolPairs:
    adj[a].append(b)
    adj[b].append(a)
```

For a valid input, each adj[node] should end up with exactly 2 neighbors.

Pick any starting node.

```python
start = next(iter(adj))  # any key
path = [start]
prev = None
curr = start
```

Walk around the cycle:

```python
while True:
    n1, n2 = adj[curr]  # the two neighbors
    nxt = n1 if n1 != prev else n2  # never step back the way we came
    if nxt == start:
        break  # back to where we started
    path.append(nxt)
    prev, curr = curr, nxt
```

Return path.

This contains each symbol exactly once in valid circular order. Any rotation or reversal of this list is acceptable.
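Putting the three steps together gives one complete function. It is a sketch that assumes the pairs really describe a single simple cycle of at least three symbols; the two ValueError checks are my own additions, not part of the problem statement, and simply make malformed input fail loudly instead of returning a partial path.

```python
from collections import defaultdict
from typing import Dict, List


def solution(symbolPairs: List[List[int]]) -> List[int]:
    """Return one cyclic ordering of the symbols described by symbolPairs."""
    # Step 1: adjacency list; every symbol should get exactly two neighbours.
    adj: Dict[int, List[int]] = defaultdict(list)
    for a, b in symbolPairs:
        adj[a].append(b)
        adj[b].append(a)
    if any(len(nbrs) != 2 for nbrs in adj.values()):
        raise ValueError("every symbol must have exactly two neighbours")

    # Steps 2-3: start anywhere and keep moving to the neighbour we didn't come from.
    start = next(iter(adj))
    path = [start]
    prev, curr = None, start
    while True:
        n1, n2 = adj[curr]
        nxt = n1 if n1 != prev else n2
        if nxt == start:
            break  # closed the loop
        path.append(nxt)
        prev, curr = curr, nxt

    # A walk that closes early means the pairs form several separate cycles.
    if len(path) != len(adj):
        raise ValueError("pairs form more than one cycle")
    return path
```

On the example input this returns [3, 5, 1, 4, 2], because Python dictionaries keep insertion order and symbol 3 is the first key added.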

Complexity

Let n be the number of symbols (and also of pairs):

Building adjacency: O(n)

Walking the cycle: O(n)

Space: O(n) for adjacency and the output list

This is classic CodeSignal: once you strip away the story, it’s just “walk a cycle in a degree-2 graph.”

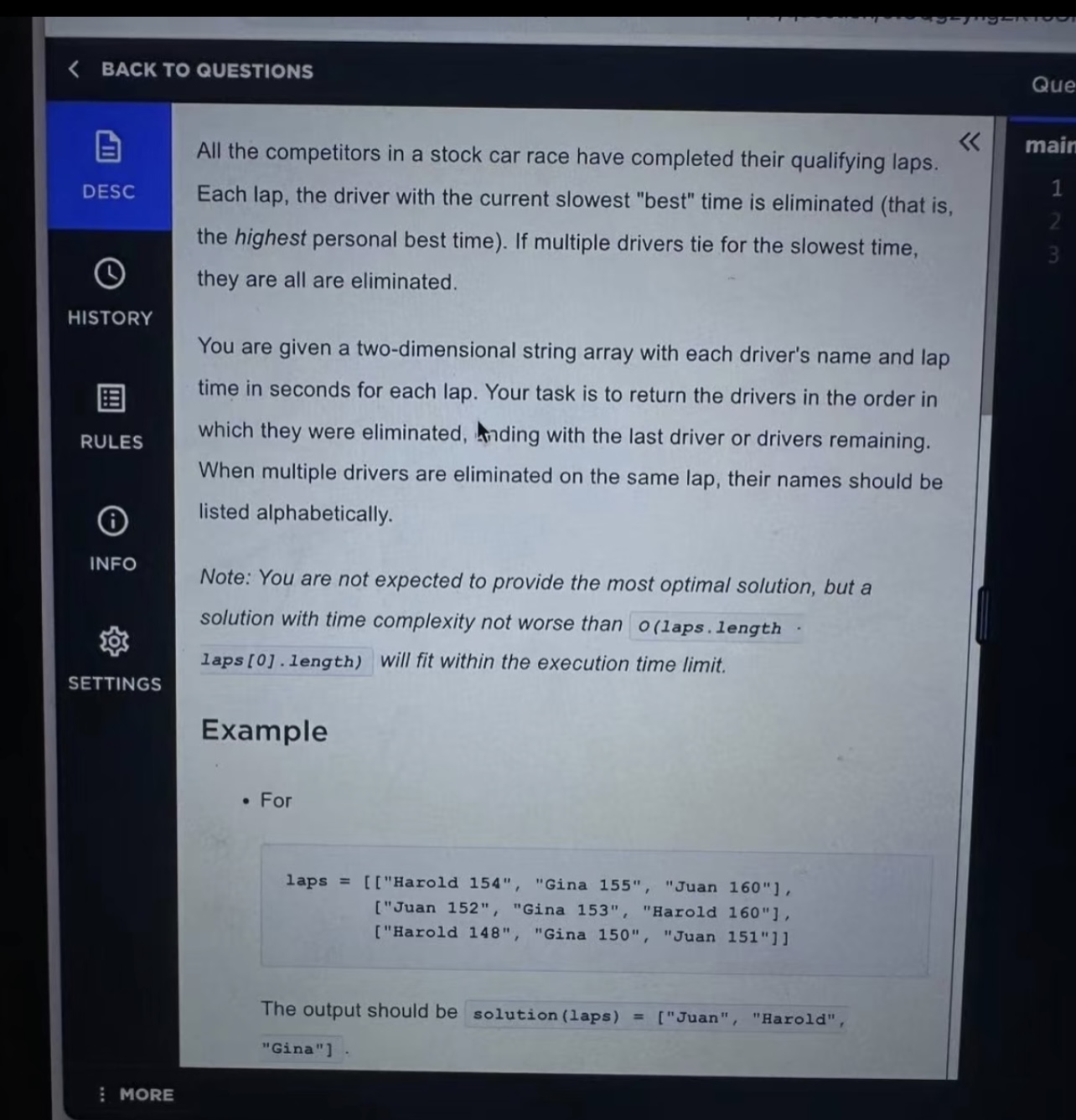

Question 2 – Stock-Car Qualifying Laps Elimination

(Simulation + hash maps)

Story version

All drivers in a stock-car race have completed several qualifying laps. For each lap, you get an array of strings in the format "Name timeInSeconds". The same set of drivers appears on every lap.

We now simulate elimination:

After each lap, for every still-active driver we look at their best lap time so far (minimum time over laps they’ve driven).

The driver(s) whose best time is currently the slowest (numerically largest) are eliminated.

If multiple drivers tie for the slowest best time, they are all eliminated together.

Drivers eliminated on the same lap must be listed alphabetically.

At the end, return an array of names in the order they were eliminated, finishing with the last remaining driver(s).

The problem states that any solution with time complexity not worse than O(laps.length * laps[0].length) is fine.

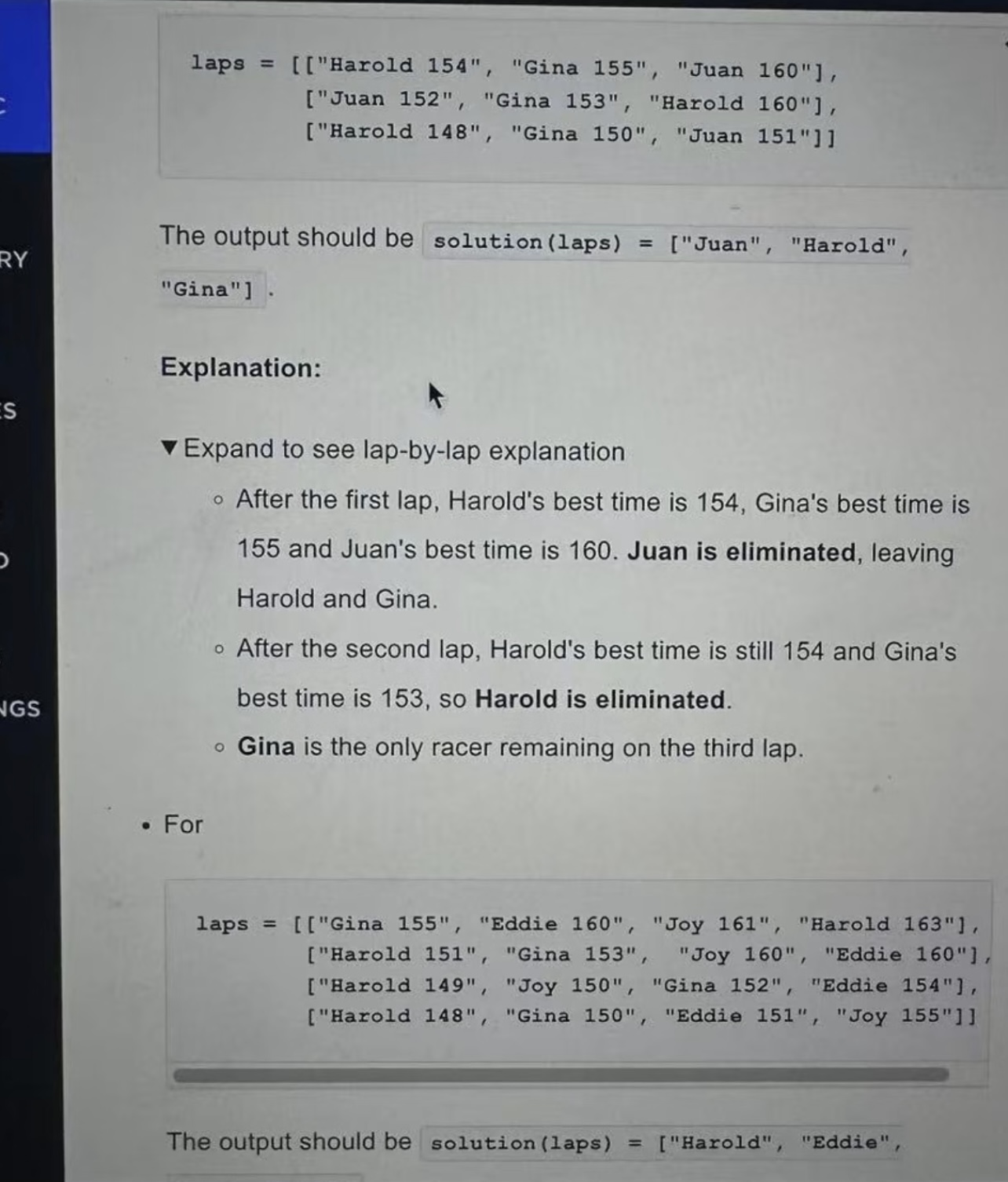

Example 1

```
laps = [
    ["Harold 154", "Gina 155", "Juan 160"],
    ["Juan 152", "Gina 153", "Harold 160"],
    ["Harold 148", "Gina 150", "Juan 151"]
]

solution(laps) = ["Juan", "Harold", "Gina"]
```

Step-by-step:

Lap 1 bests: Harold 154, Gina 155, Juan 160 → Juan (slowest) is eliminated.

Lap 2 (only Harold, Gina): Harold best = min(154, 160) = 154; Gina best = min(155, 153) = 153 → Harold is eliminated.

Lap 3: Gina is the only driver left, so no one else is eliminated and she is appended last.

Example 2

```
laps = [
    ["Gina 155", "Eddie 160", "Joy 161", "Harold 163"],
    ["Harold 151", "Gina 153", "Joy 160", "Eddie 160"],
    ["Harold 149", "Joy 150", "Gina 152", "Eddie 154"],
    ["Harold 148", "Gina 150", "Eddie 151", "Joy 155"]
]

solution(laps) = ["Harold", "Eddie", "Joy", "Gina"]
```

Here Harold goes out after lap 1 with 163. After lap 2, Eddie and Joy both have a best time of 160, the slowest among the remaining drivers, so they are eliminated together and listed alphabetically. Gina is the last driver left.

Algorithm (direct simulation)

Initialise structures.

```python
best = {}       # name -> best time so far
active = set()  # names still in the race

# the first lap tells us who is racing
for rec in laps[0]:
    name, _ = rec.split()
    active.add(name)
    best[name] = float('inf')
```

Process each lap.

```python
result = []
for lap in laps:
    # update best times for active drivers
    for rec in lap:
        name, t = rec.split()
        t = int(t)
        if name in active:
            best[name] = min(best[name], t)

    if len(active) <= 1:
        # nothing to eliminate anymore
        continue

    # find the slowest current best among active drivers
    worst_time = max(best[name] for name in active)

    # all drivers with this worst best time are eliminated
    eliminated = sorted(
        name for name in active if best[name] == worst_time
    )
    result.extend(eliminated)
    active -= set(eliminated)
```

Append any remaining drivers.

```python
if active:
    result.extend(sorted(active))
return result
```
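The same steps assembled into one function, as a sketch: it assumes every record is a single-word name followed by a whole number of seconds, as in both examples.

```python
from typing import Dict, List, Set


def solution(laps: List[List[str]]) -> List[str]:
    """Elimination order for the stock-car qualifying problem."""
    best: Dict[str, float] = {}
    active: Set[str] = set()
    for rec in laps[0]:  # the first lap tells us who is racing
        name, _ = rec.split()
        active.add(name)
        best[name] = float("inf")

    result: List[str] = []
    for lap in laps:
        # update the running best (minimum) time of drivers still racing
        for rec in lap:
            name, t = rec.split()
            if name in active:
                best[name] = min(best[name], int(t))
        if len(active) <= 1:
            continue  # nothing left to eliminate
        # everyone tied for the slowest best time goes out, alphabetically
        worst_time = max(best[name] for name in active)
        eliminated = sorted(name for name in active if best[name] == worst_time)
        result.extend(eliminated)
        active -= set(eliminated)

    result.extend(sorted(active))  # last remaining driver(s), if any
    return result
```

It returns ["Juan", "Harold", "Gina"] for Example 1 and ["Harold", "Eddie", "Joy", "Gina"] for Example 2.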

Complexity

Let L = laps.length and D = laps[0].length:

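We parse each "Name time" string once: O(L × D).

Every lap we scan all active drivers to find the maximum: O(D) per lap, so O(L × D) over all laps.

Sorting the eliminated groups and the survivors alphabetically adds O(D log D) in total, since each driver is sorted exactly once.

So the overall complexity is O(L × D + D log D) with O(D) extra space. The sorting term only dominates when there are fewer than about log D laps, so in practice this meets the bound the problem states.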

This is a very typical CodeSignal “stateful simulation with running stats” problem: lots of string parsing and book-keeping, no fancy data structures.

Practical Strategies That Helped Me Score Well in the Instacart CodeSignal Assessment

1. Step-by-Step Debugging

With only 70 minutes, debugging must be controlled, not chaotic:

Write small helper functions: parsing, updating maps, BFS/DFS, etc.

Add targeted logs:

For DFS/BFS: current node, neighbours, visited set size

For sliding windows: window boundaries and current aggregate

When a test fails, I always check in this order:

Obvious off-by-one errors

Edge cases: empty inputs, one element, maximum sizes

Whether I reset state correctly between test cases
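As an illustration of the targeted logs for BFS, here is a sketch of a breadth-first search over an adjacency list that can print the current node, its neighbours and the visited-set size behind a flag. The function name bfs_distances and the debug parameter are hypothetical; the dist dictionary doubles as the visited set.

```python
from collections import deque
from collections.abc import Hashable
from typing import Dict, List


def bfs_distances(
    adj: Dict[Hashable, List[Hashable]], source: Hashable, debug: bool = False
) -> Dict[Hashable, int]:
    """Unweighted shortest-path distances from `source`; unreachable nodes are omitted."""
    dist = {source: 0}  # also serves as the visited set
    queue = deque([source])
    while queue:
        node = queue.popleft()
        neighbours = adj.get(node, [])
        if debug:
            # targeted log: current node, its neighbours, visited-set size
            print(f"node={node} neighbours={neighbours} visited={len(dist)}")
        for nbr in neighbours:
            if nbr not in dist:  # first discovery is the shortest path in BFS
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist
```

Passing debug=True while a test is failing and flipping it back afterwards keeps the logging in one place instead of scattered print calls.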

2. Strip the Story Down to the Core

Most CodeSignal questions are long stories hiding simple cores:

Arrays + maps (aggregate by key)

Sliding windows / queues (last X minutes / last N events)

Graphs (BFS/DFS) (reachability, connected components, shortest paths)

DP / greedy (best value under constraints)

Train yourself to read a wall of text and say, “Ah, this is just graph + BFS” or “This is fixed-window frequency counting.”

The two real questions above are good examples: one is “walk a simple cycle in a degree-2 graph,” the other is “simulate elimination with running minimums.”
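For the “last X seconds” pattern (the sliding windows / queues item above, and Level 2 in my OA), a deque of timestamps is usually enough. This is a sketch that assumes events arrive with non-decreasing timestamps and a positive window; the class name WindowCounter is hypothetical.

```python
from collections import deque
from typing import Deque


class WindowCounter:
    """Counts events whose timestamps fall in the last `window` seconds."""

    def __init__(self, window: int) -> None:
        self.window = window
        self.events: Deque[int] = deque()

    def record(self, ts: int) -> int:
        """Add an event at time ts; return how many events lie in (ts - window, ts]."""
        self.events.append(ts)
        # drop events that have slid out of the window from the left
        while self.events and self.events[0] <= ts - self.window:
            self.events.popleft()
        return len(self.events)
```

Each timestamp is appended and removed at most once, so a stream of n events costs O(n) in total rather than rescanning the whole history on every call.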

3. Safety-First Optimization

On GCA-style tests, it’s often better to have three fully correct solutions + partial progress on Q4 than an unfinished fancy answer.

My strategy:

Lock in Q1 quickly (≤10 minutes).

Spend ~15–18 minutes on Q2, make it clean and fully correct.

For Q3, aim to get a correct solution with acceptable complexity, even if not optimal.

Treat Q4 as a bonus: sketch a straightforward DP / greedy solution first, then optimise if time remains.

Because CodeSignal uses a scoring model where later questions are weighted more while still crediting earlier ones, this balance tends to work well.

Targeted Practice Plan for Instacart’s CodeSignal OA

Based on my OA and public information about CodeSignal:

Focus on these problem types:

Arrays & Hash Maps

Frequency counts, grouping, prefix sums

LeetCode examples: Two Sum, Group Anagrams, Subarray Sum Equals K

Sliding Windows & Queues

Moving averages, events in the last X seconds, “longest substring without duplicates”

LeetCode: Sliding Window Maximum, Longest Substring Without Repeating Characters

Graphs (BFS/DFS)

Grids and adjacency lists, connected components, shortest unweighted paths

LeetCode: Number of Islands, Shortest Path in Binary Matrix

Dynamic Programming / Greedy

Knapsack-style, interval scheduling, resource allocation

LeetCode: House Robber, Coin Change, Non-overlapping Intervals

Simulation & Data Pivoting

Exactly like the stock-car laps question: parse rows, maintain running best/worst stats, eliminate or classify items.
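For the prefix-sum side of the Arrays & Hash Maps item, Subarray Sum Equals K is a compact example of combining a running sum with a hash map. The sketch below counts contiguous subarrays that sum to k; the function name subarray_sum is my own.

```python
from collections import defaultdict
from typing import List


def subarray_sum(nums: List[int], k: int) -> int:
    """Number of contiguous subarrays of nums whose elements sum to k."""
    seen = defaultdict(int)  # prefix sum -> how many times it has occurred
    seen[0] = 1              # the empty prefix
    prefix = 0
    count = 0
    for x in nums:
        prefix += x
        # a subarray ending here sums to k iff an earlier prefix equals prefix - k
        count += seen[prefix - k]
        seen[prefix] += 1
    return count
```

This runs in O(n) time and, unlike a two-pointer window, still works when the array contains negative numbers.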

Instacart CodeSignal Time Management Template (70-Minute GCA)

Here’s the rough schedule I used:

| Task | Target time |
|---|---|
| Skim all 4 questions | 3–5 min |
| Q1 (easy) | ≤ 10 min |
| Q2 (easy–medium) | 15–18 min |
| Q3 (medium) | 18–20 min |
| Q4 (medium–hard) + review | 15–20 min |
| Final sanity checks | 2–3 min |
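Adding up the ends of each range in the table (with Q1 at its 10-minute cap) shows the plan only fits in 70 minutes if some tasks finish near the low end of their range:

$$
\begin{aligned}
\text{low ends: } & 3 + 10 + 15 + 18 + 15 + 2 = 63 \text{ min} \\
\text{high ends: } & 5 + 10 + 18 + 20 + 20 + 3 = 76 \text{ min} > 70 \text{ min}
\end{aligned}
$$

So at least 6 minutes have to be trimmed from the upper ends somewhere.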

I kept a small timer on the desk (outside the screen) just to make sure I didn’t sink 40 minutes into one problem.

FAQ

What happens after I pass the Instacart OA?

Typically a recruiter call, then a virtual onsite with 1–2 coding rounds, 1 design round, and 1 behavioral round. Details vary by role and office, but this pattern shows up repeatedly in recent candidate reports.

What CodeSignal score do I need?

Instacart doesn’t publish a hard cutoff. Some companies using the new 200–600 Coding Score require around 390–400+ for progression, but that varies a lot by company and year. Treat those numbers as rough context, not guaranteed thresholds.

Which language should I use?

Use the language you debug fastest in. CodeSignal supports most mainstream languages, and switching mid-test just wastes time.

How should I use AI tools like Linkjob.ai?

You can simply use its screenshot analysis feature directly. The AI will provide the coding approach and a reference answer. The app is completely invisible/stealthy, so you can use it without worry.
