How I Mastered NVIDIA Interview Questions for 2026

I recently cleared the NVIDIA Deep Learning Software Engineer interview, and honestly, it wasn't just about coding. It pushed me to really master NVIDIA interview questions for 2026, not by memorizing answers, but by building solid technical fundamentals, thinking through systems, and communicating clearly under pressure. That combination made the biggest difference in helping me feel confident and actually stand out in each round.

I'm really grateful to Linkjob AI for helping me pass my interview, which is why I'm sharing my NVIDIA interview questions and experience here. Having an invisible AI interview assistant during the interview indeed provides a significant edge. If you're preparing, this guide is exactly what I wish I had earlier.

Key Takeaways

I underestimated how deep NVIDIA goes into fundamentals. It’s not enough to "know"—I had to explain why, and sometimes I got stuck when I couldn’t.

Coding wasn't the hardest part, but weak fundamentals (like NumPy or bit ops) slowed me down a lot. Small gaps matter here.

Interviewers often guided me, but only when I spoke my thoughts clearly. Silence = no help.

The scope is wide: ML theory, systems, product thinking, and coding all showed up. I had to switch contexts fast.

Some questions felt simple at first, but follow-ups made them much harder. Depth always came after the surface.

NVIDIA Interview Process Overview

Interview Stages

From what I experienced and cross-checked with other real interview reports, the process is usually structured like this:

| Stage | Duration | Purpose |
|---|---|---|
| ML Fundamentals | 45 mins | Deep learning theory & reasoning |
| Coding Rounds | 45 mins × 1-2 | Algorithms + practical implementation |
| System Design | 45-60 mins | Backend + distributed systems |
| Resume Deep Dive | 45 mins | Project discussion + light design |
| Applied ML & Product Thinking | 45 mins | ML frameworks, model-to-GPU execution, product evaluation |
| Behavioral & Hiring Manager | 45 mins | BQ + team fit + light design |

Most candidates go through three to six rounds of interviews. The whole process usually takes one to four weeks. I found that each round tested a different skill, so I made sure to practice both technical and behavioral questions.

What to Expect

I expected a normal intro → coding → behavioral flow. That didn’t happen.

Some rounds skipped intro completely and jumped straight into technical questions.

Coding rounds sometimes had minimal interaction, almost like a silent evaluation.

Follow-up questions went deeper quickly—especially in ML theory.

Even non-SDE roles still had coding + system thinking.

NVIDIA Interview Question Types

While preparing for my NVIDIA interview, I read through real SDE interview questions and write-ups, including other candidates' NVIDIA deep learning interview experiences, to get familiar with the types of questions asked. Below, I break down the major categories of questions I encountered.

Technical NVIDIA Interview Questions

Based on real interviews I went through:

Machine Learning Fundamentals

Gradient descent, convex optimization

SGD vs full batch vs mini-batch

Generalization gap

AI Systems & Optimization

Model lifecycle: frontend → ONNX → GPU

Kernel fusion, quantization

Coding

Array problems (Three Sum)

Matrix ops (2D convolution)

Hashing (Anagram)

Bit manipulation (custom allocator)

Distributed Systems

Concurrency control

API design

Behavioral Interview Questions

I didn't get heavy Amazon-style BQ, but some still came up:

Handling disagreement with teammates

What kind of team/environment I prefer

Why I want to switch roles

Role-Specific Questions

These depended heavily on the role:

Deep Learning / AI roles

Optimization techniques

Parallelism (tensor vs pipeline)

Technical Marketing / Product-facing roles

How to evaluate usability

How to market a technical feature

Real NVIDIA SDE Interview Questions

Stage 1. ML Fundamentals (45 mins)

The interviewer skipped introductions and went straight into questions.

Gradient Descent

Explain how it works

Does it guarantee global optimum?

What kind of loss surface guarantees convergence?

I started with gradient descent—explaining how it works and whether it guarantees a global optimum. He then asked what kind of loss surface guarantees convergence, pointing me toward convex functions.
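To make the convexity point concrete, here is a minimal sketch (not something from the interview itself). The two functions, the learning rates, and the step counts are illustrative assumptions. On the convex quadratic, gradient descent reaches the single global minimum. On the non-convex quartic, the minimum it reaches depends on the starting point.

```python
def gradient_descent(grad, w0, lr, steps):
    """Plain gradient descent on a scalar parameter."""
    w = w0
    for _ in range(steps):
        w = w - lr * grad(w)
    return w

# Convex case: f(w) = (w - 3)^2 has a single minimum at w = 3.
def convex_grad(w):
    return 2.0 * (w - 3.0)

w_convex = gradient_descent(convex_grad, w0=-10.0, lr=0.1, steps=200)

# Non-convex case: f(w) = w^4 - 3w^2 + w has two separate minima.
def nonconvex_loss(w):
    return w**4 - 3.0 * w**2 + w

def nonconvex_grad(w):
    return 4.0 * w**3 - 6.0 * w + 1.0

w_from_left = gradient_descent(nonconvex_grad, w0=-2.0, lr=0.01, steps=500)
w_from_right = gradient_descent(nonconvex_grad, w0=2.0, lr=0.01, steps=500)

print(w_convex, w_from_left, w_from_right)
```

The run started from the right settles in the shallower minimum, which is why the answer to "does it guarantee a global optimum?" is no unless the loss is convex (and the step size is small enough).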

He moved on to SGD vs full-batch vs mini-batch.

SGD vs Full Batch vs Mini-batch

Trade-offs?

Which one escapes local minima better?

I gave a general answer at first, but he pushed me to be precise about variance. He also asked which method escapes local minima better, and I realized SGD’s randomness helps.
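The variance point can be checked empirically. The sketch below is an illustration under assumptions: a synthetic 1-D linear regression, a scalar weight fixed at 0, and sampling with replacement. It estimates how much the mini-batch gradient fluctuates for different batch sizes.

```python
import numpy as np

def minibatch_gradient_variance(x, y, w, batch_size, trials, rng):
    """Empirical variance of the mini-batch gradient of 0.5 * (w*x - y)^2 w.r.t. w."""
    grads = np.empty(trials)
    for t in range(trials):
        idx = rng.integers(0, len(x), size=batch_size)  # sample with replacement
        residual = w * x[idx] - y[idx]
        grads[t] = np.mean(residual * x[idx])
    return grads.var()

rng = np.random.default_rng(0)
x = rng.normal(size=1000)
y = 3.0 * x + rng.normal(scale=0.5, size=1000)  # synthetic data, true weight 3

variances = {
    b: minibatch_gradient_variance(x, y, w=0.0, batch_size=b, trials=2000, rng=rng)
    for b in (1, 32, 256)
}
for b, v in variances.items():
    print(f"batch_size={b:4d}  gradient variance={v:.4f}")
```

With batch size 1 (pure SGD) the gradient estimate is the noisiest, and the variance shrinks roughly in proportion to 1 / batch_size. Full-batch gradient descent is the limiting case with no sampling noise at all, which is the trade-off between cheap noisy steps and expensive exact ones.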

In the last part, he asked about generalization gap.

Generalization Gap

Given:

g_population vs g_train

Which optimization method would you choose?

I initially chose full-batch, but he guided me toward flat vs sharp minima and why smaller batch sizes tend to generalize better.
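One common intuition for the flat vs sharp argument, sketched here as a toy and not as what the interviewer spelled out: assume the population loss is the training loss with its minimum shifted slightly. The quadratic shape, the curvature values, and the shift of 0.1 are all illustrative assumptions.

```python
def quadratic_loss(w, curvature, minimum=0.0):
    """1-D quadratic bowl; larger curvature means a sharper minimum."""
    return 0.5 * curvature * (w - minimum) ** 2

shift = 0.1       # assumed offset between the training and population minima
w_trained = 0.0   # parameter that minimizes the training loss

# Gap = population loss minus training loss, both evaluated at the trained parameter.
flat_gap = quadratic_loss(w_trained, 1.0, minimum=shift) - quadratic_loss(w_trained, 1.0)
sharp_gap = quadratic_loss(w_trained, 100.0, minimum=shift) - quadratic_loss(w_trained, 100.0)

print(f"flat minimum gap:  {flat_gap:.3f}")
print(f"sharp minimum gap: {sharp_gap:.3f}")
```

The same small shift costs far more at the sharp minimum. The usual argument is that the noise in small-batch updates makes it harder to settle in narrow, sharp basins, so training tends to end up in flatter regions where this gap is smaller.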

Stage 2. Coding Rounds (45 mins × 2)

The coding stage consisted of two rounds, and the questions were quite similar to those reported from other NVIDIA technical interviews.

Coding Round I (45 mins)

In this round, I got classic problems, but under time pressure they didn’t feel that easy.

Problem 1: Three Sum

The interviewer asked me to find all triplets that sum to zero.

I immediately thought of sorting + two pointers, but I slowed myself down by overthinking edge cases too early.

My Approach

Sort the array

Fix one number

Use two pointers to find pairs

Skip duplicates carefully

Code I wrote

    def threeSum(nums):
        nums.sort()
        res = []
        for i in range(len(nums)):
            # Skip duplicate values for the fixed number
            if i > 0 and nums[i] == nums[i - 1]:
                continue
            l, r = i + 1, len(nums) - 1
            while l < r:
                total = nums[i] + nums[l] + nums[r]
                if total == 0:
                    res.append([nums[i], nums[l], nums[r]])
                    l += 1
                    r -= 1
                    # Skip duplicate values for the left pointer
                    while l < r and nums[l] == nums[l - 1]:
                        l += 1
                elif total < 0:
                    l += 1  # sum too small, move left pointer right
                else:
                    r -= 1  # sum too large, move right pointer left
        return res

Looking back, the logic was fine.

I just spent too long trying to make it “perfect” instead of getting a working version quickly.

Problem 2: Group Anagram

This one was more straightforward.

The interviewer wanted me to group strings that are anagrams.

My Approach

Use sorted string as key

Store in hashmap

Code I wrote

    from collections import defaultdict

    def groupAnagrams(strs):
        mp = defaultdict(list)
        for s in strs:
            # Anagrams share the same sorted-character key
            key = ''.join(sorted(s))
            mp[key].append(s)
        return list(mp.values())

This part went smoothly.

The interviewer didn’t push optimization—he mainly checked if I could structure it cleanly.
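If an interviewer does push on optimization, one common variant replaces the sort with a character-count key. The sketch below is not what I wrote in the round. It assumes lowercase a-z input, and the function name is hypothetical. Building the key costs O(k) per string instead of O(k log k).

```python
from collections import defaultdict

def group_anagrams_by_count(strs):
    """Group anagrams using a 26-slot letter-count tuple as the key.

    Assumes every string contains only lowercase letters a-z.
    """
    groups = defaultdict(list)
    for s in strs:
        counts = [0] * 26
        for ch in s:
            counts[ord(ch) - ord('a')] += 1
        groups[tuple(counts)].append(s)  # tuples are hashable, lists are not
    return list(groups.values())

print(group_anagrams_by_count(["eat", "tea", "tan", "ate", "nat", "bat"]))
# → [['eat', 'tea', 'ate'], ['tan', 'nat'], ['bat']]
```

For short strings the sorted-key version is usually just as fast in practice, so this is mostly worth mentioning as a trade-off.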

Coding Round II (45 mins)

This round felt very different—less LeetCode, more real ML work.

Problem: 2D Convolution (NumPy)

I was given:

A 4×4 input matrix

A 3×3 filter

stride = 1

And asked to implement convolution.

At first, I defaulted to nested loops.

It worked, but it was slow and messy.

The interviewer hinted I should try using NumPy slicing, which is where I struggled.

My Approach

Slide a 3×3 window over the matrix

Multiply element-wise

Sum results

Code I wrote (loop version)

    import numpy as np

    def conv2d(A, K):
        # Assumes a square input A and a square kernel K, stride 1, no padding
        n = A.shape[0]
        k = K.shape[0]
        output_size = n - k + 1
        result = np.zeros((output_size, output_size))
        for i in range(output_size):
            for j in range(output_size):
                # Element-wise product of the current window with the kernel
                window = A[i:i+k, j:j+k]
                result[i, j] = np.sum(window * K)
        return result

This worked, but wasn’t ideal.

The interviewer was clearly looking for a more vectorized solution, and that’s where I felt my NumPy fundamentals weren't strong enough.
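For reference, here is one way a vectorized version could look. It is a sketch of a technique I could have used, not the interviewer's expected answer. It assumes NumPy 1.20 or newer for `sliding_window_view`, and the function name is hypothetical. Like the loop version, it computes cross-correlation (the kernel is not flipped), which is what deep learning frameworks call convolution.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def conv2d_vectorized(A, K):
    """Stride-1, no-padding 2D convolution without Python loops."""
    # windows has shape (out_h, out_w, k_h, k_w): one k_h x k_w patch per output cell
    windows = sliding_window_view(A, K.shape)
    # Multiply each patch by the kernel and sum over the last two axes
    return np.einsum('ijkl,kl->ij', windows, K)

A = np.arange(16).reshape(4, 4)   # 4x4 input
K = np.ones((3, 3))               # 3x3 filter
print(conv2d_vectorized(A, K))
# → [[45. 54.]
#    [81. 90.]]
```

`sliding_window_view` returns a read-only view, so no patches are copied. The `einsum` call does the multiply-and-sum for every output position in one step.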

Problem 3: Memory Allocator (Bit Manipulation)

This one caught me off guard.

32-bit array

Each element stores 64-bit data

Implement allocate() and free()

No extra space allowed

My Thought Process

Treat the array as a bitmap

Use bits to mark used/free slots

Scan for available segments

I eventually got something working.

But I took too long because I hadn’t practiced bit manipulation recently.
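The bitmap idea can be sketched as below. This is a simplified illustration, not my interview solution. It assumes one 32-bit integer tracks 32 fixed-size slots, and the class name and return conventions are hypothetical. The actual problem constraints were stricter than what this models.

```python
class BitmapAllocator:
    """Tracks 32 fixed-size slots using a single 32-bit integer as the bitmap."""

    NUM_SLOTS = 32
    MASK = (1 << NUM_SLOTS) - 1

    def __init__(self):
        self.bitmap = 0  # bit i set means slot i is in use

    def allocate(self):
        """Return the index of the lowest free slot, or -1 if all are taken."""
        free_bits = ~self.bitmap & self.MASK
        if free_bits == 0:
            return -1
        lowest = free_bits & -free_bits   # isolate the lowest set bit
        self.bitmap |= lowest             # mark the slot as used
        return lowest.bit_length() - 1    # convert the bit to its index

    def free(self, slot):
        """Release a previously allocated slot."""
        if not 0 <= slot < self.NUM_SLOTS:
            raise ValueError("slot out of range")
        bit = 1 << slot
        if not self.bitmap & bit:
            raise ValueError("slot is not allocated")
        self.bitmap &= ~bit

alloc = BitmapAllocator()
first, second, third = alloc.allocate(), alloc.allocate(), alloc.allocate()
alloc.free(second)
reused = alloc.allocate()  # the freed slot is handed out again
print(first, second, third, reused)
# → 0 1 2 1
```

The `x & -x` trick finds a free slot without scanning bit by bit, which is the kind of operation worth having memorized before a round like this.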

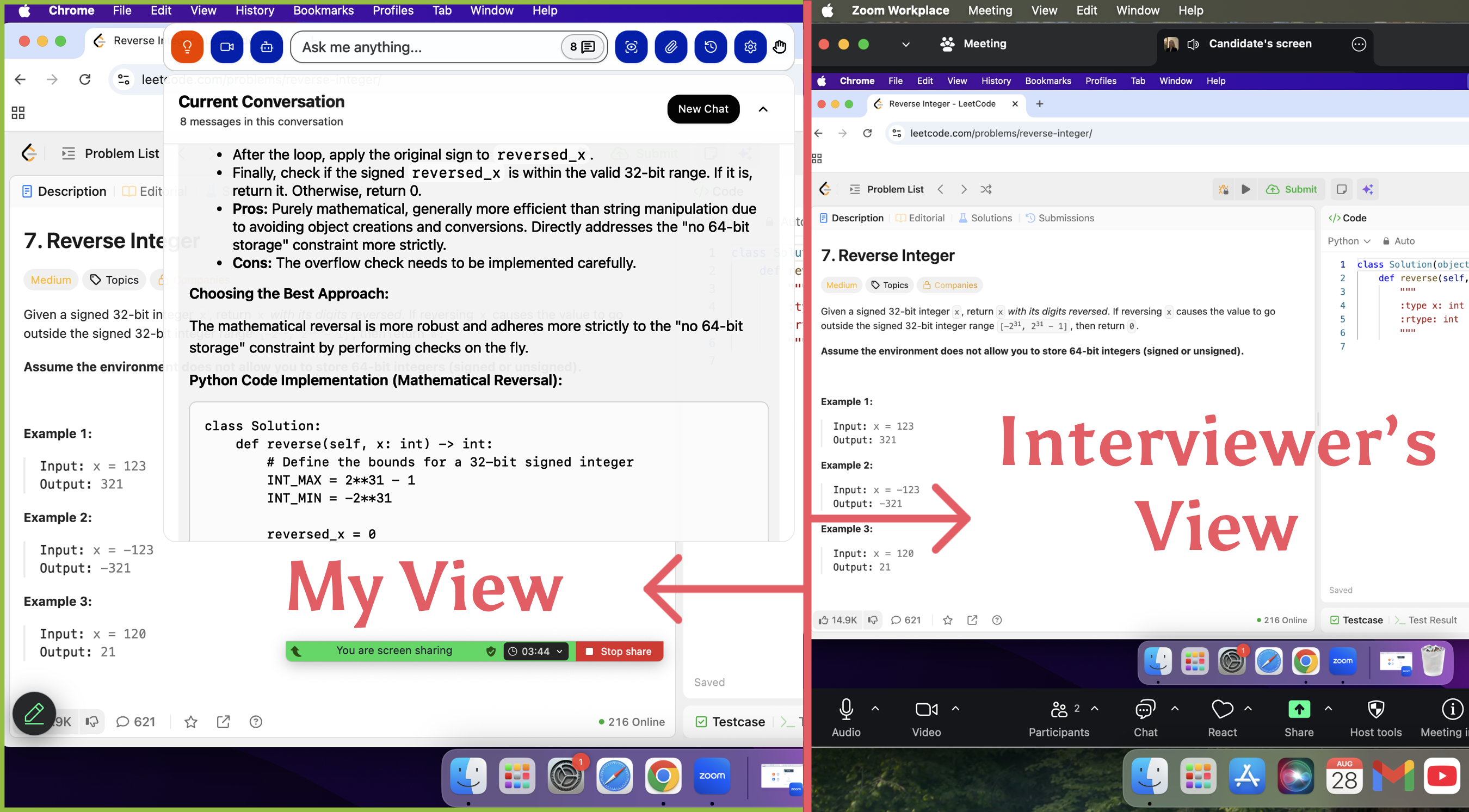

Thanks to Linkjob AI, I could finish all the questions in time, without being noticed by the interviewer.


Stage 3. System Design (45–60 mins)

This round focused on backend/system design.

Problem:

Artifact storage system:

Built on K8s + Cassandra

Requirement:

Artifact created only once

Questions:

How to handle concurrent creation?

What about delete + re-add?

How to optimize read API?

What they were testing:

Concurrency control

Idempotency

Distributed consistency

I focused on consistency, caching, and performance trade-offs.
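The "created only once" requirement comes down to an atomic check-and-insert. The sketch below uses an in-memory dictionary guarded by a lock as a stand-in for the database, and every class and function name in it is hypothetical. In Cassandra, the closest equivalent is a lightweight transaction (`INSERT ... IF NOT EXISTS`), which costs extra latency for the conditional write.

```python
import threading

class ArtifactStore:
    """In-memory stand-in for an artifact table keyed by artifact_id."""

    def __init__(self):
        self._rows = {}
        self._lock = threading.Lock()

    def create_if_absent(self, artifact_id, payload):
        """Atomically insert; returns True only for the caller that created the row."""
        with self._lock:
            if artifact_id in self._rows:
                return False  # someone else already created it
            self._rows[artifact_id] = payload
            return True

    def get(self, artifact_id):
        with self._lock:
            return self._rows.get(artifact_id)

def race_to_create(store, artifact_id, workers=8):
    """Start several threads that all try to create the same artifact."""
    results = []
    results_lock = threading.Lock()

    def worker(n):
        created = store.create_if_absent(artifact_id, f"payload-from-{n}")
        with results_lock:
            results.append(created)

    threads = [threading.Thread(target=worker, args=(n,)) for n in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results

store = ArtifactStore()
outcomes = race_to_create(store, "artifact-1")
print(outcomes.count(True), "creator,", outcomes.count(False), "rejected")
# → 1 creator, 7 rejected
```

Returning a boolean instead of raising makes retries safe: a client that times out can simply call create again and treat `False` as "already done", which is the idempotency property the round was probing. Delete followed by re-add needs an extra decision (for example a version or tombstone per artifact) that this sketch does not cover.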

Stage 4. Resume Deep Dive (45 mins)

This felt more like a real engineer conversation.

Walk through projects

Deep dive into one ML system

Explain design choices

They asked me to write a module on the spot (not full code, but structure + logic).

Stage 5. Applied ML & Product Thinking (45 mins)

In this round, I started by discussing ML frameworks like NumPy, PyTorch, and JAX. The interviewer also asked me to walk through how a model goes from definition to GPU execution.

He then shifted to product thinking. I talked about how to evaluate a feature and what metrics matter for usability. One question was about comparing NVIDIA with competitors. I explained that it should be done carefully, focusing on transparency and trust.

Stage 6. Behavioral & Hiring Manager (45 mins)

In this round, the interviewer focused more on behavioral questions. I was asked how I handle disagreements and what kind of team I prefer. He also asked about my expectations for the next role.

The questions felt open-ended but still required clear structure. There was also a light design discussion. I briefly talked through a simple system idea and potential challenges.

Preparation Strategy for NVIDIA Interview Questions

When I started preparing for NVIDIA interviews, I realized that having a clear strategy made all the difference. I broke my approach into three parts: gathering the best resources, building a study plan, and practicing with intention. Let me walk you through how I tackled each step.

Preparation Resources

What actually helped me (based on what was asked):

ML fundamentals (especially optimization & generalization)

Basic NumPy operations (slicing, shape, vectorization)

LeetCode medium-level problems

System design basics (APIs, concurrency)

This directly matches what showed up in:

DL fundamentals round

NumPy convolution coding

System design questions

Study Plan

What worked for me:

Week 1–2

Focus on ML fundamentals (gradient descent, SGD, loss surfaces)

Week 3

Practice coding:

Arrays

Hashmaps

Basic math operations

Week 4

Review:

One strong project deeply

System basics (API + concurrency)

I wish I had spent more time on NumPy earlier.

Practice Techniques

I practiced explaining answers out loud, not just solving

I simulated interview pressure (timed coding)

I revisited mistakes and rewrote solutions cleanly

This helped a lot when interviewers asked follow-ups.

NVIDIA Interview Question Solving Tips

Tackling Technical Questions

I started by stating assumptions clearly

Then broke the problem into smaller steps

When stuck, I kept talking instead of going silent

This often triggered hints from the interviewer.

Handling Behavioral Questions

I kept answers short and concrete

Focused on real experiences, not generic stories

Avoided over-explaining unless asked

HM rounds were more relaxed than expected.

Managing Stress

Some rounds felt awkward (like silent coding), but I stayed focused

When I got stuck, I didn't panic—I tried simpler approaches first, and reminded myself: they care about thinking, not perfection

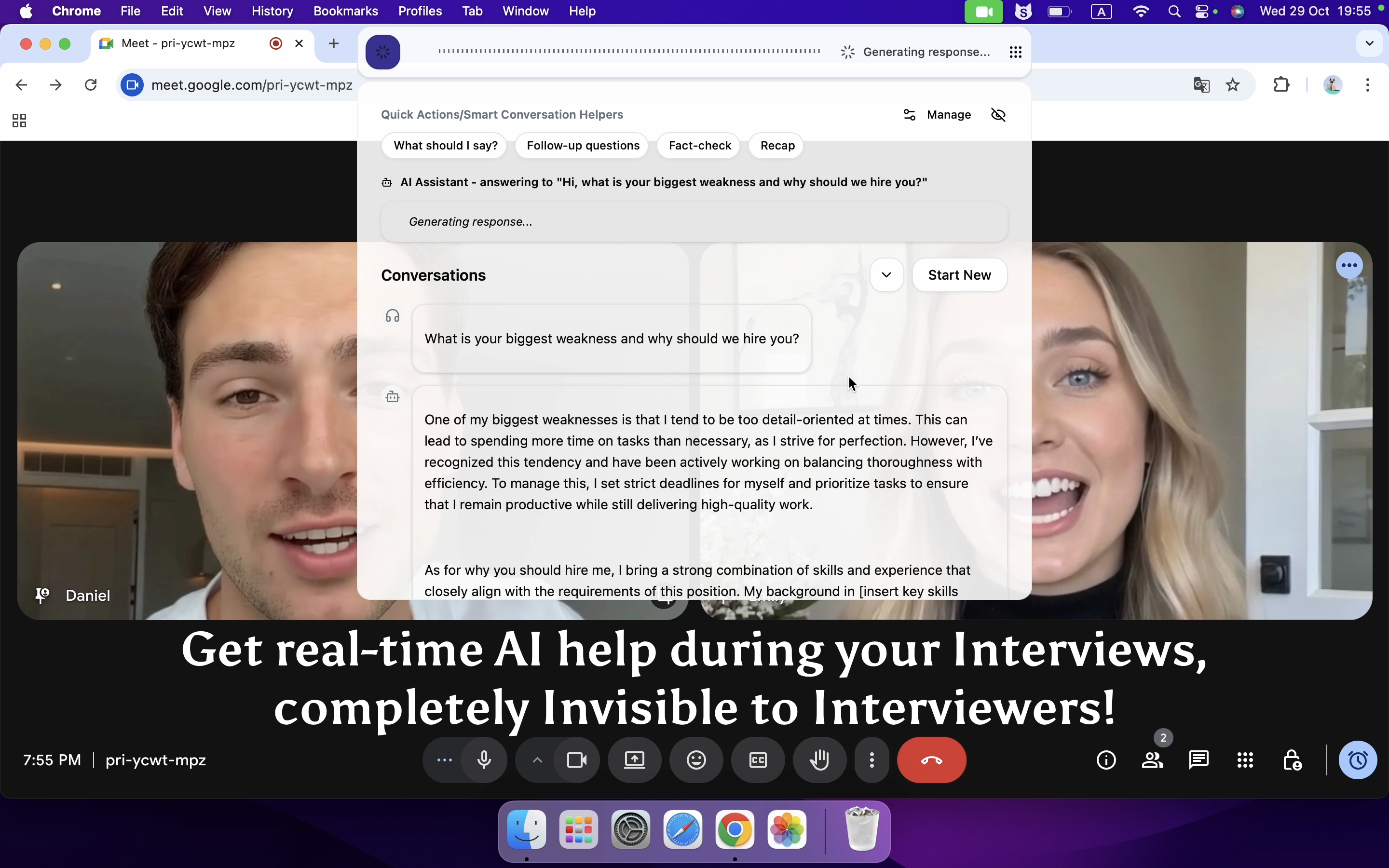

At the same time, I turned to Linkjob AI for help, which could offer real-time hints to the questions given by the interviewer


Actionable Tips for Success in NVIDIA Interview Questions

Common Pitfalls

Ignoring ML fundamentals → biggest mistake

Weak NumPy / implementation skills

Spending too long on one coding problem

Not explaining thought process

I hit at least two of these myself.

Focus Areas

If I had to redo prep:

ML optimization + generalization

NumPy + matrix operations

Clean coding fundamentals

One strong project (deep dive ready)

Stay confident, keep learning, and remember—every challenge is a step toward your dream job!

FAQ

How did I stay motivated during my NVIDIA interview prep?

I treated each weak area as a signal, not a failure. Every mistake I made in practice showed up in interviews—so fixing them felt meaningful.

What should I do if I get stuck on NVIDIA technical questions?

I managed to stay calm, and with the help of Linkjob AI, I kept talking through my thinking. Even partial ideas helped the interviewer guide me in the right direction.

How much time did I spend preparing each day?

On average, a few hours daily. More importantly, I focused on quality over quantity: understanding deeply instead of rushing.

What resources helped me the most?

ML fundamentals and real interview questions helped the most. I collected questions from the NVIDIA HackerRank test, and topics like gradient descent and generalization came up directly.

Can I apply these strategies to other tech interviews?

Yes, but NVIDIA emphasizes fundamentals more than many companies. If I prepare this way, other interviews actually feel easier.

See Also

My 2026 NVIDIA Software Engineer Interview and Questions

NVIDIA Coding Interview: Problems I Faced and My Solutions

How I Cracked 2026 Nvidia Internship: Real Questions I Faced