My Data Analyst Interview Experience at Tower Research

Recently, I passed the interview for a Data Analyst position at Tower Research. I went through multiple rounds of interviews that assessed my data knowledge and problem-solving skills. Each round was high-pressure. During the process, I benefited greatly from discussing my past projects and from the support of Linkjob.ai. This invisible AI interview assistant, which remained undetected by the interviewers, provided me with valuable insights and guidance, helping me present my capabilities better and secure the position.

Key Takeaways

Understand the application process and the technical assessments. Some interview stages may be skipped. Stay calm and demonstrate your full potential.

Practice programming and data analysis skills through the question bank. Use real datasets and tools like SQL and Python to sharpen your abilities.

Communicate your thought process clearly during interviews. Explain how you approach problems and share stories from past projects to demonstrate your skills.

Stay calm under pressure. Focus on problem-solving and maintain a positive attitude, even when faced with challenging questions.

Tower Data Analyst Interview Questions

When I prepared for the Tower Research data analyst interview, I realized that the questions covered a wide range of topics. I want to break down the types of questions you might face and share how I tackled them. If you want to do well, you need to practice different types of data analysis, brush up on your technical skills, and get comfortable explaining your answers.

Technical and Analytical Questions

The technical and analytical questions tested my understanding of data, statistics, and programming. I saw questions about SQL, Python, and data analysis case studies. Tower Research wanted to know if I could use the right tools to process data and turn it into insights. They also checked my knowledge of probability, statistics, and data visualization.

Here’s a table that shows the core concepts I encountered during the interview:

| Field | Core Test Points | Key Methods/Tools |
|---|---|---|
| Statistical Inference | 1. Point estimation (MLE/OLS); 2. Interval estimation (confidence intervals); 3. Hypothesis testing (unit root tests) | 1. Maximum Likelihood Estimation (MLE); 2. Ordinary Least Squares (OLS); 3. Confidence interval construction |
| Optimization Theory | 1. Constrained optimization (focus); 2. Lagrange multipliers; 3. KKT conditions; 4. Convex optimization | 1. Lagrange multipliers; 2. KKT conditions; 3. Convex optimization |
| Time Series Modeling | 1. ARMA family models (theory & application); 2. Model selection (AIC/BIC); 3. Stationarity testing | 1. AR/MA/ARMA/ARIMA models; 2. AIC/BIC information criteria; 3. Unit root tests |
| Portfolio Theory | 1. Markowitz framework; 2. Mean-variance optimization (core); 3. Asset pricing theories (CAPM/APT/Fama-French) | 1. Mean-variance optimization; 2. CAPM/APT/Fama-French three-factor model |
| Derivatives Pricing | 1. Black-Scholes framework; 2. PDE derivation; 3. Risk-neutral valuation; 4. Greeks calculation; 5. Monte Carlo methods | 1. Black-Scholes PDE; 2. Risk-neutral valuation; 3. Greeks (Delta/Gamma/Vega, etc.); 4. Monte Carlo simulation |
| Risk Management | 1. VaR (Value at Risk); 2. Coherent risk measures (advanced); 3. Expected shortfall; 4. Stress testing/scenario analysis | 1. VaR calculation; 2. Coherent risk measures; 3. Expected shortfall; 4. Stress testing/scenario analysis |
| Market Microstructure | 1. Trading algorithm design; 2. Optimal execution; 3. Market making; 4. Statistical arbitrage | 1. Trading algorithm design; 2. Optimal execution; 3. Market making strategies; 4. Mathematical foundations of statistical arbitrage |
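Two of the risk-management items listed above, VaR and expected shortfall, are easy to illustrate on a small sample. The following is a minimal sketch of the historical-simulation approach, not something taken from the interview itself. The function name `historical_var_es`, the sample returns, and the simple no-interpolation quantile convention are all assumptions made for illustration.

```python
import math

def historical_var_es(returns, alpha=0.95):
    """Historical-simulation VaR and expected shortfall at confidence level alpha.

    Losses are the negated returns, so both measures come back as
    positive loss numbers.
    """
    losses = sorted(-r for r in returns)  # ascending losses
    n = len(losses)
    # Empirical alpha-quantile without interpolation (one of several conventions).
    # round() guards against floating-point noise in alpha * n.
    idx = min(n - 1, math.ceil(round(alpha * n, 9)) - 1)
    var = losses[idx]
    tail = losses[idx:]                   # losses at or beyond the VaR level
    es = sum(tail) / len(tail)            # average loss in the tail
    return var, es

# Hypothetical daily returns, made up purely for illustration.
sample_returns = [0.01, -0.02, 0.005, -0.035, 0.012,
                  -0.01, 0.02, -0.05, 0.003, -0.004]
var_90, es_90 = historical_var_es(sample_returns, alpha=0.90)
print(f"90% VaR: {var_90:.4f}, 90% ES: {es_90:.4f}")
```

Because expected shortfall averages the losses at or beyond the VaR level, it is never smaller than VaR at the same confidence level, which is one reason it is preferred when tail risk matters.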

I found that the interviewers expected me to know more than just the basics. They asked about stochastic processes, real analysis, and time series analysis. I also needed to show that I understood probability, betting strategies, expected-value calculations, and market-making. If you want to prepare, make sure you review these topics and practice using SQL and Python for data analysis.

Some of the technical questions I remember include:

Implement a Monte Carlo method to price an Asian call option with arithmetic average. Discuss convergence properties and variance reduction strategies.

For a European put option under Black-Scholes assumptions, derive expressions for delta, gamma, and theta. Explain their practical applications in portfolio hedging.

Apply Extreme Value Theory to model the tail behavior of portfolio returns. Compare the Generalized Extreme Value distribution with the Generalized Pareto Distribution for risk measurement.
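For the first question, here is a minimal sketch of how a Monte Carlo pricer for an arithmetic-average Asian call might look. It assumes geometric Brownian motion under the risk-neutral measure, equally spaced averaging dates that exclude the initial price, and antithetic variates as the variance reduction technique. The function name `asian_call_mc` and all parameter values are illustrative assumptions, not the answer I gave in the interview.

```python
import math
import random

def asian_call_mc(s0, k, r, sigma, t, n_steps, n_paths, seed=0):
    """Monte Carlo price of an arithmetic-average Asian call.

    Each simulated path is paired with its antithetic twin (same normal
    draws with flipped sign), and the two discounted payoffs are averaged.
    Returns (price_estimate, standard_error).
    """
    rng = random.Random(seed)
    dt = t / n_steps
    drift = (r - 0.5 * sigma ** 2) * dt
    vol = sigma * math.sqrt(dt)
    disc = math.exp(-r * t)
    samples = []
    for _ in range(n_paths):
        s_up = s_dn = s0
        sum_up = sum_dn = 0.0
        for _ in range(n_steps):
            z = rng.gauss(0.0, 1.0)
            s_up *= math.exp(drift + vol * z)   # original path
            s_dn *= math.exp(drift - vol * z)   # antithetic path
            sum_up += s_up
            sum_dn += s_dn
        pay_up = max(sum_up / n_steps - k, 0.0)
        pay_dn = max(sum_dn / n_steps - k, 0.0)
        samples.append(disc * 0.5 * (pay_up + pay_dn))
    mean = sum(samples) / n_paths
    var = sum((x - mean) ** 2 for x in samples) / (n_paths - 1)
    return mean, math.sqrt(var / n_paths)

# Illustrative parameters only.
price, se = asian_call_mc(100.0, 100.0, 0.05, 0.2, 1.0,
                          n_steps=50, n_paths=5000, seed=42)
print(f"Asian call estimate: {price:.3f} +/- {se:.3f}")
```

On convergence: the standard error of a plain Monte Carlo estimate shrinks in proportion to 1/sqrt(n_paths), so quadrupling the number of paths roughly halves the error. Antithetic variates lower the constant in front of that rate. Another common choice is a control variate based on the geometric-average Asian option, which has a closed-form price under the same assumptions.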

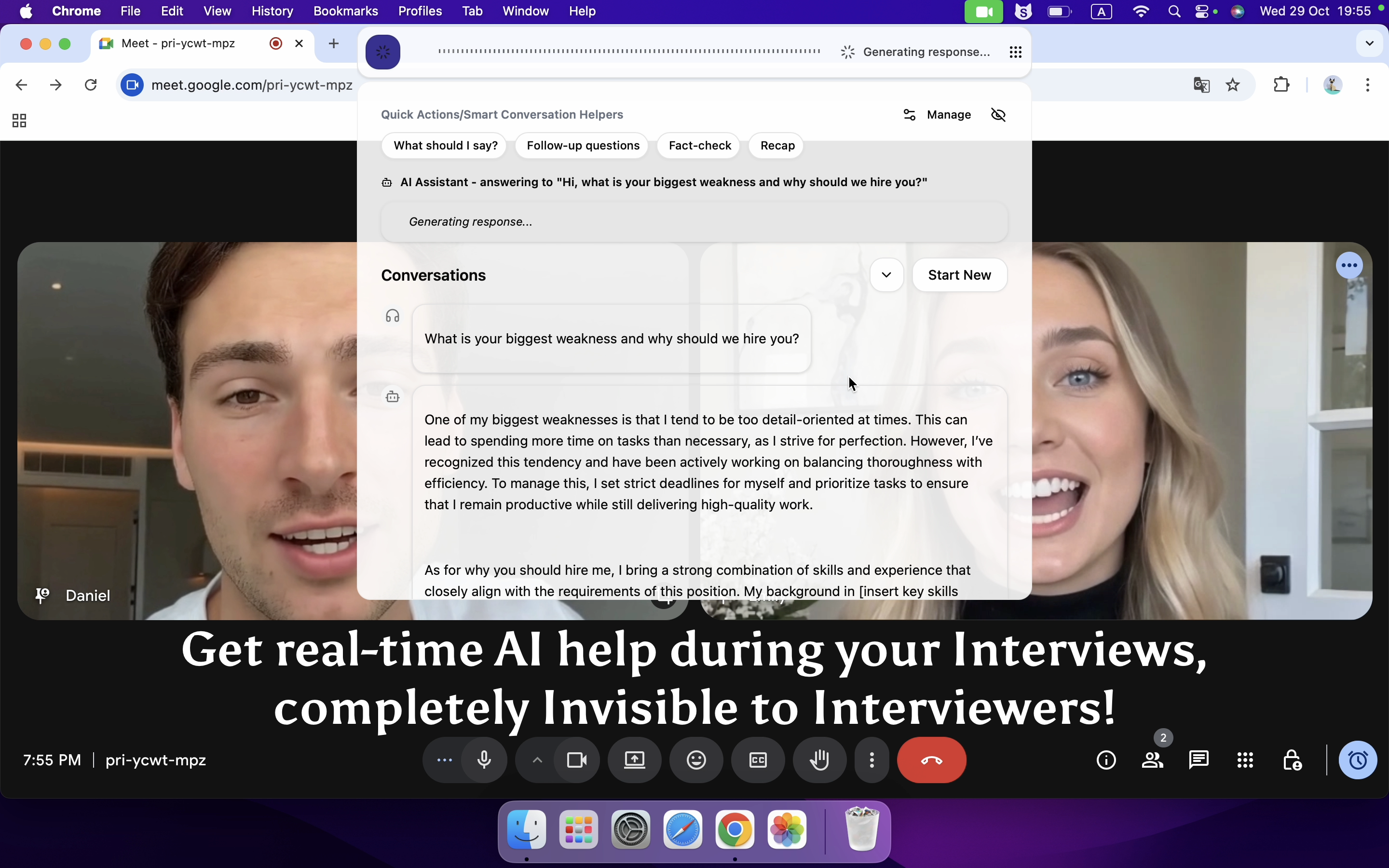
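For reference, the closed-form Greeks of a European put under Black-Scholes (no dividends) can be written down and checked numerically. The sketch below uses the standard formulas, with theta defined as the derivative with respect to calendar time. The helper names `bs_put` and `bs_put_greeks` and the parameter values are illustrative assumptions.

```python
import math

def norm_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def norm_pdf(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def _d1_d2(s, k, r, sigma, t):
    d1 = (math.log(s / k) + (r + 0.5 * sigma ** 2) * t) / (sigma * math.sqrt(t))
    return d1, d1 - sigma * math.sqrt(t)

def bs_put(s, k, r, sigma, t):
    """Black-Scholes price of a European put with time to expiry t."""
    d1, d2 = _d1_d2(s, k, r, sigma, t)
    return k * math.exp(-r * t) * norm_cdf(-d2) - s * norm_cdf(-d1)

def bs_put_greeks(s, k, r, sigma, t):
    """Return (delta, gamma, theta) of a European put."""
    d1, d2 = _d1_d2(s, k, r, sigma, t)
    delta = norm_cdf(d1) - 1.0                       # always in [-1, 0]
    gamma = norm_pdf(d1) / (s * sigma * math.sqrt(t))
    theta = (-s * norm_pdf(d1) * sigma / (2.0 * math.sqrt(t))
             + r * k * math.exp(-r * t) * norm_cdf(-d2))
    return delta, gamma, theta

# Illustrative parameters only.
delta, gamma, theta = bs_put_greeks(100.0, 100.0, 0.05, 0.2, 1.0)
print(f"delta={delta:.4f}, gamma={gamma:.5f}, theta={theta:.4f} per year")
```

In hedging terms, delta gives the number of shares needed to offset one put (a long put is hedged by holding `-delta` shares), gamma measures how quickly that hedge goes stale as the spot moves, and theta is the value the position gains or loses per unit of time with everything else held fixed.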

To be honest, I've never been very good at deriving the delta, gamma, and theta expressions, so I used the AI tool below, which quickly provided the answers. I also used Linkjob during the later coding test, where it generated accurate and comprehensive answers very quickly.

The interviewer couldn't see Linkjob, so I used it throughout the entire process. Besides helping me with questions I couldn't answer, it organized my thoughts in real time as I articulated them.

The project discussion was one of the most important parts of my interview. The team wanted to hear about my past data analysis projects, the challenges I faced, and the impact of my work. They asked me to walk them through my process, from gathering data to delivering insights.

Here’s how I structured my answers:

I described the goal of the project and the data I used.

I explained the tools and methods I chose for data analysis, like SQL, Python, or Excel.

I talked about the challenges I faced, such as missing data or unclear requirements.

I shared how I solved these problems and what insights I discovered.

I highlighted the impact of my analysis on the business or team.

Note: Always focus on the results of your data analysis. Show how your work led to actionable insights or helped solve a real problem.

The interviewers wanted to see that I could handle real-world data, use the right tools, and communicate my findings. They also looked for examples where I learned from mistakes or improved my process. If you want to stand out, pick a few strong projects and practice telling your story. Use clear language and focus on the value of your analysis.

I learned that the best way to prepare for the data analyst interview questions is to practice with real data, review key concepts in statistics and probability, and get comfortable talking about your projects. If you can explain your answers clearly and show your passion for data analysis, you’ll have a great chance of success.

Tower Data Analyst Interview Process

Application and Screening

I started my journey by submitting my resume through the Tower Research campus recruitment portal. Sometimes, candidates use online applications. After that, I received a call from Tower Research. The call lasted about 30 to 45 minutes. The recruiter asked about my background, my skills, and why I wanted to become a data analyst. We also talked about the company culture and what the data analyst role involved.

Here’s a quick look at the process:

| Stage | Description |
|---|---|
| Application | Candidates submit resumes through campus recruitment or online applications. |
| Phone Screening | A recruiter conducts a 30-45 minute phone call to discuss the candidate's background and ask two simple brain teasers. |
| Skills and Culture | The conversation also covers company culture and specifics of the Data Analyst role. |

I found the phone/online interview format helpful. I felt less nervous because I could focus on my answers without worrying about body language or eye contact. I could also quickly use tools to help me work through problems.

Technical Assessment

After the screening, I moved to the technical assessment. This stage tested my data analysis skills. Tower Research asked questions about statistics, probability theory, and SQL. I had to explain how I would use data to solve real business problems. The interviewer wanted to see if I could turn raw data into useful insights.

I practiced using different tools before the interview. I worked with Excel, Python, and SQL. I also reviewed basic concepts in data analysis, such as hypothesis testing and regression. The technical assessment felt challenging, but I stayed calm and focused on showing my thought process.
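As a refresher on how regression and hypothesis testing fit together, here is a minimal sketch of simple linear regression with a t-statistic for the slope, written with the standard library only. The function name `simple_ols` and the data points are made up for illustration and are not from the assessment.

```python
import math

def simple_ols(x, y):
    """Fit y = intercept + slope * x by ordinary least squares.

    Returns (slope, intercept, se_slope, t_stat), where t_stat tests the
    null hypothesis that the true slope is zero.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    # Unbiased residual variance uses n - 2 degrees of freedom.
    s2 = sum(e * e for e in residuals) / (n - 2)
    se_slope = math.sqrt(s2 / sxx)
    return slope, intercept, se_slope, slope / se_slope

# Hypothetical data, made up purely for illustration.
xs = [1, 2, 3, 4, 5, 6]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0]
slope, intercept, se_slope, t_stat = simple_ols(xs, ys)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, t={t_stat:.1f}")
```

To finish the test, compare the absolute value of `t_stat` with the critical value of a t distribution with n - 2 degrees of freedom. A large value means the data are hard to reconcile with a zero slope.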

Coding Challenge

Next, I faced a coding challenge. Tower Research uses this round to check if you can write code that handles data efficiently. I could choose between C++, Python, or Rust. I picked Python because I use it most for data analysis and building insights.

Here’s a table of the programming languages they test:

| Programming Language | Purpose |
|---|---|
| C++ | Powers latency-sensitive systems |
| Python | Used in research, data analysis, orchestration |

The challenge included SQL problems, data cleaning tasks, and logic puzzles. I had to write code that processed large datasets and produced clear insights. I made sure to comment my code and explain my approach. Practicing SQL and Python before the interview helped me a lot.
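To give a flavor of a data cleaning task, here is a minimal sketch that normalizes text fields, drops duplicates, and fills missing values. The record layout (`symbol`, `ts`, `price`), the function name `clean_trades`, and the median-fill rule are all assumptions for illustration, not the actual challenge.

```python
from statistics import median

def clean_trades(rows):
    """Normalize symbols, drop duplicate records, and fill missing prices.

    Missing prices are filled with the median of the known prices, which
    is a deliberately crude rule chosen to keep the sketch short.
    """
    seen = set()
    cleaned = []
    for row in rows:
        symbol = row["symbol"].strip().upper()
        raw = row.get("price")
        price = float(raw) if raw not in (None, "") else None
        key = (symbol, row["ts"], price)
        if key in seen:               # duplicate after normalization
            continue
        seen.add(key)
        cleaned.append({"symbol": symbol, "ts": row["ts"], "price": price})
    known = [r["price"] for r in cleaned if r["price"] is not None]
    fill = median(known) if known else None
    for r in cleaned:
        if r["price"] is None:
            r["price"] = fill
    return cleaned

# Hypothetical raw records, made up purely for illustration.
raw_rows = [
    {"symbol": " aapl", "ts": 1, "price": "101.5"},
    {"symbol": "AAPL", "ts": 1, "price": "101.5"},   # duplicate of the first row
    {"symbol": "AAPL", "ts": 2, "price": ""},        # missing price
    {"symbol": "aapl ", "ts": 3, "price": "102.5"},
]
for record in clean_trades(raw_rows):
    print(record)
```

In an interview it is worth saying out loud why you picked a fill rule. A median over all records is simple, but for real price data a per-symbol forward fill is often more defensible.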

HR Interview

The final stage was the HR interview. This round felt more relaxed, but it still mattered a lot. The HR manager asked behavioral questions to understand my personality and fit for the data analyst role. Here are some common questions:

Tell me about a time you used data to make a decision.

Describe a challenging data project and how you handled it.

How do you handle unclear requirements or ambiguity?

Why did you apply to our company?

What are your biggest strengths and weaknesses?

I answered honestly and gave examples from my experience. I talked about how I use data analysis to solve problems and deliver insights. I also explained how I handle stress and learn from mistakes.

Each stage of the Tower Research data analyst interview tested my skills in data, SQL, and analysis. I learned that preparation and practice with real data analyst interview questions make a big difference. If you want to succeed, focus on your data analysis projects, sharpen your SQL skills, and get comfortable with the tools you use every day.

Data Skills and Preparation for Tower Data Analyst Interview

Study Resources

When preparing for the data analyst interview at Tower Research, I primarily selected two core books to solidify my foundation. One was Statistics Made Simple, which explains core statistical concepts like descriptive statistics, probability, and hypothesis testing in an exceptionally accessible way. It helped me quickly grasp the underlying logic of data analysis and tackle potential statistics-related questions in the interview. The other was Python for Data Analysis, a classic work by the creator of the pandas library. It seamlessly integrates theory with practice. I followed the book's case studies step by step to practice data cleaning, processing, analysis, and visualization, building solid hands-on Python skills. After all, the core competency of a data analyst is using tools to solve real-world problems.

Beyond books, I also built foundational skills through interactive courses on Kaggle Learn and DataCamp, practicing Python, R, SQL, and pandas. I enrolled in Coursera's Google Data Analytics Professional Certificate to systematically learn the full data analysis workflow and relevant tools. I also took the University of Michigan's Applied Data Science with Python Specialization, delving into advanced pandas operations, data visualization with Matplotlib and Seaborn, and an introduction to machine learning with scikit-learn. In addition, I systematically worked through SQL problems on LeetCode and SQLZoo, progressing step by step from basic syntax to window functions. I frequently visited the Kaggle community to review project experiences and code notes shared by other data analysts. These practices significantly enhanced my understanding of real-world project workflows and problem-solving approaches.
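Window functions were the last step of that SQL progression, so here is a minimal sketch of the kind of query worth practicing. It runs against an in-memory SQLite database through Python's built-in `sqlite3` module and assumes the bundled SQLite is version 3.25 or newer, which is when window functions were added. The `trades` table and its columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE trades (symbol TEXT, ts INTEGER, qty INTEGER)")
conn.executemany(
    "INSERT INTO trades VALUES (?, ?, ?)",
    [("AAA", 1, 100), ("AAA", 2, 50), ("AAA", 3, 25),
     ("BBB", 1, 10), ("BBB", 2, 40)],
)

# Running total per symbol in time order, plus a rank by trade size.
query = """
SELECT symbol, ts, qty,
       SUM(qty) OVER (PARTITION BY symbol ORDER BY ts) AS running_qty,
       RANK() OVER (PARTITION BY symbol ORDER BY qty DESC) AS qty_rank
FROM trades
ORDER BY symbol, ts
"""
rows = conn.execute(query).fetchall()
for row in rows:
    print(row)
conn.close()
```

The point to notice is that `PARTITION BY` restarts the calculation for each symbol while every input row is kept, which a plain `GROUP BY` cannot do.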

Practice Methods

I believe that hands-on practice is the best way to master data analysis. I worked with real datasets and practiced data cleaning, transformation, and data wrangling every day. I wrote SQL queries to extract and process data. I built dashboards to visualize trends and patterns. I used Python for machine learning and analytics projects. I also created data visualization charts to share insights with others.

I set up mock interviews with friends. We asked each other data analyst questions and discussed our approaches to data cleaning, transformation, and dashboard design.

Time Management

Managing my time became a key part of my preparation. I created a study schedule that balanced data analysis, machine learning, and dashboard building. I set aside time for data cleaning, transformation, and data wrangling practice. I used short study sessions to review SQL, data visualization, and analytics concepts. I tracked my progress by building dashboards that showed my strengths and areas for improvement.

I learned to focus on one skill at a time. For example, I would spend a day on data preprocessing and cleaning, then switch to machine learning or dashboard creation. I also took breaks to avoid burnout. Staying organized helped me cover all the important topics and tools for the data analyst interview.

Tip: Use dashboards to track your study goals and progress. This makes your preparation more visual and keeps you motivated.

My Data Analyst Interview Experience at Tower Research

Challenges Faced

I faced many challenges during my data analyst interview at Tower Research. The biggest one was the sheer volume of data and analysis questions. I had to switch quickly between coding, statistics, and business analysis. Sometimes, I felt overwhelmed by the amount of data I needed to process in a short time. I also struggled with brain teasers that required creative analysis and fast thinking. The team expected me to explain my data analysis process clearly, even when I felt nervous. I realized that handling pressure and staying focused on the data helped me move forward.

Lessons Learned

Looking back, I learned a lot from this experience. I discovered that a strong academic record matters. Many successful candidates have a high CPI, usually above 8.0. Competitive programming skills also help, especially when you need to write code for data analysis. I found that an innovative approach to problem-solving sets you apart. Here’s a table that summarizes the key lessons I picked up:

| Key Lesson | Description |
|---|---|
| Strong Academic Record | A high CPI, ideally above 8.0, makes you a stronger candidate. |
| Competitive Programming Skills | Achievements in programming contests are highly valued. |
| Innovative Problem-Solving | Creative approaches to analysis challenges are crucial for success. |

Tips for Success

If you want to succeed in a data analyst interview, here are some tips that worked for me:

Ask clarifying questions to make the most of your time.

Inquire about how the role fits into the company’s long-term vision.

Understand team dynamics and how people collaborate on data analysis.

Explore opportunities for professional growth within the company.

Learn about feedback mechanisms in the team.

Prepare stories using the STAR Method for behavioral questions.

I learned that the Tower Research data analyst interview rewards clear thinking and strong preparation. Here’s what helped me most:

Review your data projects and practice explaining your process.

Focus on problem-solving and stay calm during tough questions.

Use real datasets to sharpen your analyst skills.

FAQ

How did I prepare for the technical rounds?

I practiced SQL queries and Python scripts every day. I solved problems on LeetCode and HackerRank. I reviewed statistics and probability basics. I also worked with real datasets to build my confidence.

What should I focus on for the coding challenge?

I focused on writing clean code that solves the problem. I commented my code to explain my logic. I practiced data cleaning and SQL queries. I made sure I could handle large datasets without getting stuck.

How can I stand out in the project discussion?

I always picked one or two strong projects. I explained my process step by step. I showed the impact of my work with numbers or visuals. I made sure to highlight what I learned from each project.

What resources helped me the most?

Here’s a quick list of my favorite resources:

Advances in Financial Machine Learning (book)

Kaggle Learn and DataCamp (coding practice)

LeetCode and HackerRank

Mock interviews with friends

Tip: Use these resources to build your skills and boost your confidence.
