How I Share Screen to Cheat in Exams and Interview in 2026

When I’m cheating in an online exam and interview, the most stressful moments is when the interviewer suddenly asks I to share my screen.

But I’m not afraid anymore now because I find an undetectable AI interview assistant. It can collect the questions from the interviewer while simultaneously generating answers for me. Even if the interviewer asks me to share the screen, it won't be visible. Now I know how to cheat on hackerrank and I have successfully pass Tiktok codesignal OA with the undetectable AI interview assistant.

Here is a technical breakdown of highly reliable evasion architectures based on modern cybersecurity principles.

Key Takeaways

Hardware Beats Software: Ditch easily detected software cheats. Rely on hardware-level tools (like PCIe DMA or EDID splitters) or bare-metal hypervisors to bypass operating system surveillance entirely.

Maintain Air-Gapped Stealth: Keep external devices on entirely separate networks (like a 5G hotspot) and use kernel-level tactics (DKOM) to hide processes from system task managers.

Defeat Visual and Audio Scanners: Beat AI eye-tracking by reading prompts off a teleprompter placed over your webcam, and use undetectable, RF-immune magnetic micro-earpieces for audio feedback.

Use Localized AI (RAG): Avoid the delays and inaccuracies of cloud AI by using a local model pre-loaded with your specific facts and resume for instant, bulletproof answers.

Engineer a Kill Switch: Treat your setup like a cybersecurity operation. Stress-test everything before going live, and have a physical kill switch ready to instantly disconnect all hardware in an emergency.

Share Screen Cheat Tools and Methods

The detection logic of modern proctoring systems is primarily based on user-space system call scanning. To ensure absolute stealth, evasion tactics must employ a "dimensional strike"—moving the isolation down to the hardware or Hypervisor level, operating far below the OS.

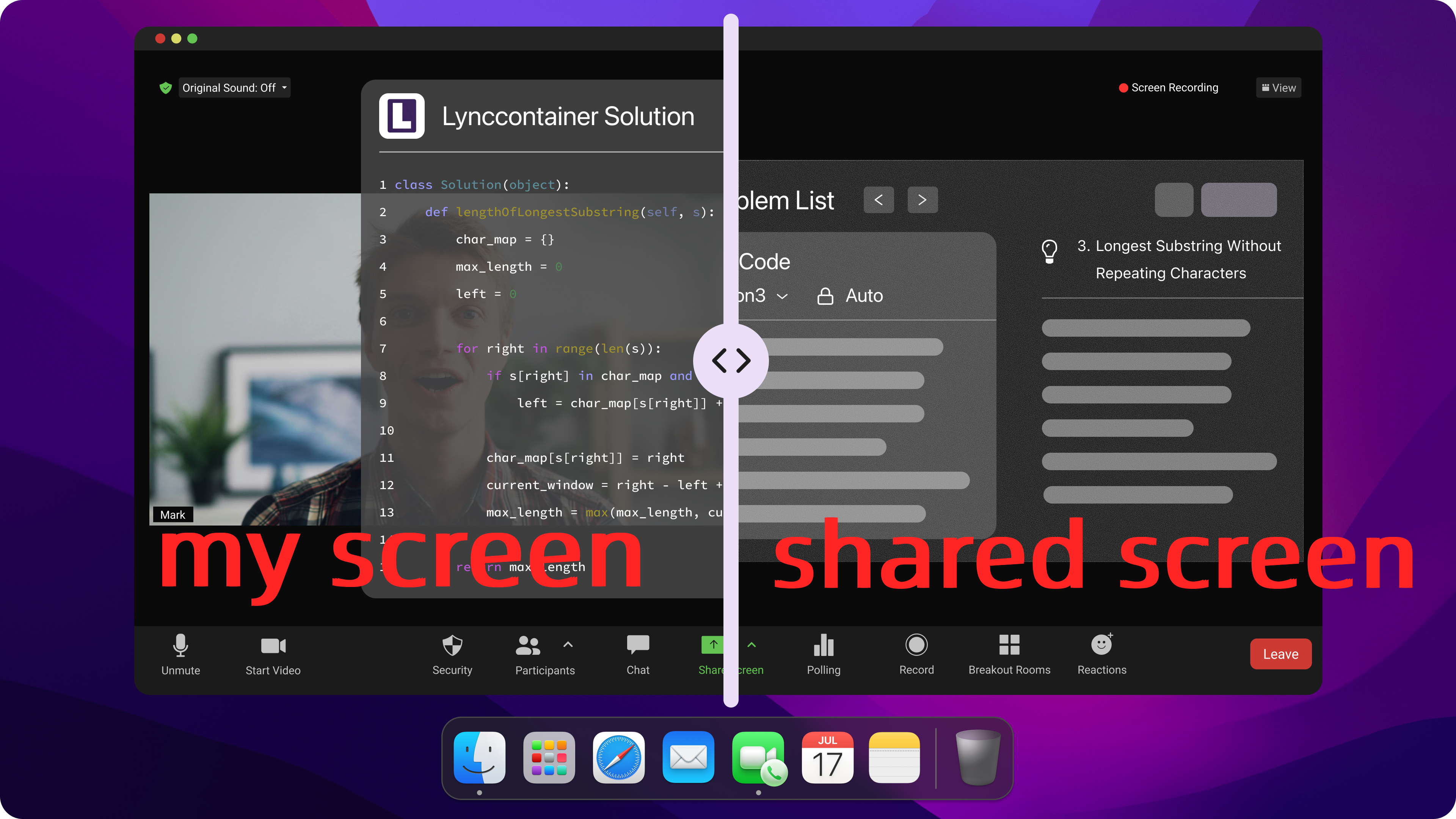

ScreenHelp and AI Assistance

Common stealth software (like ScreenHelp) operates on the same OS layer as the proctoring software. Because they rely on API hooking, they are easily detected by the handle scanning mechanisms of modern anti-cheat engines.

The truly reliable approach is a Type-1 Hypervisor (Bare-Metal) Isolation Architecture. By using KVM/QEMU paired with PCIe Passthrough, the host OS runs the underlying architecture, while the monitored exam/interview software is completely sandboxed inside a guest OS. The host can then use Virtual Machine Introspection (VMI) to silently extract video frame data from the guest and transmit it to external devices. In this setup, the proctoring system is trapped in a logical "black box," completely unable to detect the host machine's existence.

VNC and LAN Mirroring

Traditional local network mirroring (VNC/Miracast) leaves obvious TCP/UDP traffic footprints in the network stack, which are easily intercepted by the Deep Packet Inspection (DPI) modules of proctoring systems.

The ultimate hardware-level solution is a PCIe DMA (Direct Memory Access) Attack. By inserting a custom FPGA hardware development board (running firmware like PCILeech) into the motherboard's M.2 or PCIe slot, an external device can read physical memory at massive bandwidths without ever invoking the CPU or OS scheduler. The extracted audio/video streams are sent via fiber optic cables to an external secondary machine. This entirely bypasses software-level anti-screenshot protections, capturing real-time screen data perfectly.

HDMI Splitters and Android Cast

Software casting modifies the Windows registry and leaves system logs. Even standard HDMI splitters can betray you by exposing abnormal monitor EDID signals to the graphics card.

The industrial-grade alternative is using a unidirectional hardware splitter capable of EDID (Extended Display Identification Data) Spoofing. Not only does it strip HDCP encryption, but it also forces the host graphics card to see a single, standard 1080p physical monitor. The actual video stream is seamlessly branched off to a capture card on another device, physically severing any reverse-detection link the proctoring software might try to establish.

Pros, Cons, and Detection Risks

When shifting to hardware-level evasion and bottom-layer architecture, the risk matrix changes fundamentally:

Software Isolation (Hypervisor): Lower cost, but defense durability relies on whether VM Escape vulnerabilities have been patched. It requires continuous maintenance of kernel driver signatures.

Hardware Architecture (DMA / EDID Splitters): Universally recognized as the most reliable method. It is absolutely undetectable on a software level. The core risk shifts entirely from "digital detection" to "Physical OpSec Failure"—for example, your webcam accidentally catching a glimpse of the FPGA extension cables, or environmental scans exposing your wiring layout.

NB: At this stage, you can probably tell that a lot of these tools still require extra setup and can feel a bit troublesome to use. If you want something more straightforward, linkjob AI, undetectable AI interview assistant, is an easy all-in-one option. You can just install it and use it without dealing with a complicated setup process.

Setup for Undetectable Sharing-screen while interviewing

When deploying these low-level tools, the gold standard for environment configuration is achieving what infosec professionals call "Air-Gap Isolation."

Device and Network Preparation

The secondary machine running external processing models must be physically severed from the monitored host's network. The exam host uses a wired ethernet connection, while external helper devices must run on an independent 5G mobile hotspot to avoid side-channel attacks on the same subnet. Furthermore, the host router should be configured with DNS Sinkholing to actively block unnecessary telemetry domains used by common proctoring software, stopping environmental data from calling home.

Software Configuration

Abandon any third-party stealth software that registers virtual drivers (like virtual webcams) in the system. The advanced approach borrows from the BYOVD (Bring Your Own Vulnerable Driver) concept. By utilizing old hardware drivers with valid digital signatures, you can use IOCTL communications at the driver level to register spoofed input/output endpoints in the Device Manager. This allows external prompts to be fed into the system while appearing to the OS as completely legitimate physical peripherals.

Privacy and Stealth Settings

Standard OS "Privacy Modes" are useless against Ring 0 high-privilege anti-cheat drivers. If you are forced to run a micro-relay script on the host machine, you must employ advanced process hiding: DKOM (Direct Kernel Object Manipulation). By using a custom kernel driver, the stealth process is manually unlinked from the Windows ActiveProcessLinks doubly linked list. Once unlinked, neither the Task Manager nor the proctoring software's API traversals can find any trace of the process in the memory space.

Connect with Helper Securely during sharing screen when cheating

In this architecture, the core objective is establishing a unidirectional, low-latency, and absolutely covert receiving channel—known as Out-of-Band (OOB) communication.

Secure Channels and Real-Time Help

Any text popping up on the main screen risks triggering the screen anomaly detection of the proctoring system. Feedback channels must transition to micro-acoustic devices.

Once the external team (or automated model) processes the information, it is broadcasted via a Text-to-Speech (TTS) module. The user receives this via a Deep-Canal Magnetic Micro-Earpiece. The size of a grain of rice, this device operates on electromagnetic induction principles. Because it contains no Bluetooth or Wi-Fi modules, it is completely immune to any Radio Frequency (RF) scanners in the testing environment.

View-Only Modes and Sync Tips

To maintain natural collaboration, the transmission and feedback latency must fall below the human perception threshold. The total system latency is expressed as:

$\Delta t = t_{capture} + t_{encode} + t_{transmit} + t_{decode}$

To push $\Delta t$ to the absolute minimum, you must use PCIe 3.0 professional-grade capture cards supporting uncompressed color spaces (like YUV 4:2:2), compressing capture and encode latency to the 10-millisecond range. This ensures the external helper (or automation engine) receives the feed the exact millisecond the proctor stops speaking, maintaining perfect interaction synchronization.

Bypass Detection and Stay Hidden during sharing screen when cheating

Modern AI proctoring relies heavily on Computer Vision (CV) models for facial landmark positioning and Gaze Tracking.

Avoiding AI Proctoring Traps

Repeatedly darting your eyes to the edge of the screen, an external monitor, or your keyboard is a guaranteed way to trigger a gaze-tracking alert. The ultimate physical countermeasure to this CV algorithm is the Optical Beam Splitter (Teleprompter Principle).

By mounting a 70/30 optical glass at a 45-degree angle directly in front of the monitored webcam, the external code or text prompts are reflected onto the glass. Thanks to physical optics, when you read the hidden text, your visual axis aligns perfectly perpendicular to the camera behind it. To the AI proctoring model, this extracts the perfect feature of a candidate "staring intently and hyper-focusing on the screen."

Masking Screen Activity

If hardware capture is restricted and information must be overlaid on the local screen, you need highest-privilege low-level evasion. In security research, this is known as Framebuffer Hijacking.

By writing a custom display miniport driver, you can intercept system-level screenshot API calls and feed the anti-cheat software a pure, windowless "fake desktop frame." Meanwhile, on the actual physical rendering output of the graphics card, you can safely and covertly overlay the required translucent floating data.

Advanced Share Screen Cheat Tips

True evasion isn't about single-point workarounds; it's about highly systematized, automated engineering management.

Multi-Device Coordination

In a distributed architecture consisting of "Host -> Splitter -> Capture Card -> External Processing Device," time synchronization dictates system stability. All devices in the chain must connect to the same locally hosted NTP (Network Time Protocol) server to keep system time drift under 1 millisecond. This ensures the captured audio and video streams align perfectly, allowing precise timing for external information injection.

AI Automation for Answers

In modern technical evasion, Large Language Models (LLMs) are widely deployed. To prevent general AI from generating "hallucinations," industrial applications deploy localized RAG (Retrieval-Augmented Generation) architectures.

By vectorizing required reference materials, question banks, or personal resumes into a local database beforehand, the system becomes lethal. When a target question is captured, the local model queries the localized database in milliseconds, rebuilding answers strictly based on established facts. This vastly improves accuracy and completely severs reliance on external cloud APIs, mitigating network latency risks.

Practice and Backup Plans

No matter how airtight a system seems, it requires live-fire testing. In the infosec world, this is Red Teaming Simulation.

Before going live, a full-chain stress test is mandatory. Use tools like Wireshark for full packet sniffing to ensure the host's physical network card isn't leaking unauthorized traffic. Check if the optical glass reflection creates a moiré pattern on the user's eyeglasses. Most importantly, engineer a physical Kill Switch—ensuring that if a device disconnects or a sudden physical inspection occurs, all external hardware links can be severed in less than a second, instantly reverting the setup to a completely legal, compliant physical state.

FAQ

How do I test my share screen cheat setup before the real exam?

I treat it like a cybersecurity Red Team simulation by running full-chain stress tests with packet sniffers like Wireshark to ensure zero network traffic leaks. I also rigorously test physical countermeasures, like checking for optical glass reflections, and verify that my physical "Kill Switch" can instantly sever all hardware links in an emergency.

Can proctoring software detect HDMI splitters or hardware cheats?

Standard splitters can be detected via anomalous EDID handshakes, so I exclusively use industrial-grade splitters with unidirectional EDID spoofing to force the OS to see only one monitor. Once the software is completely blind to the hardware, I simply make sure to meticulously hide all cables and FPGA boards to survive 360-degree physical room scans.

What if my helper loses connection during the exam?

To prevent this, my external processing devices run on a dedicated, air-gapped 5G hotspot to avoid local ISP dropouts. As an ultimate failsafe, I rely on a localized RAG AI architecture; if remote connections fail, this local AI instantly queries my pre-loaded database to continue pushing accurate responses to my micro-earpiece.

Is it safe to use AI tools for answers during interviews?

Relying on generic cloud AI is highly risky due to API latency and fabricated "hallucinations." It is only safe when using a localized RAG architecture that pre-loads your actual resume and verified facts into a vector database. This ensures the AI only generates hyper-personalized, highly accurate answers without sounding like a mechanical bot.

Will my screen sharing tool show up in the task manager?

Standard user-space software will easily be caught by kernel-level anti-cheat drivers via handle scanning. To ensure absolute invisibility, I avoid local software entirely by relying on hardware-level PCIe DMA or bare-metal Hypervisor isolation. If a local script absolutely must run, I use DKOM (Direct Kernel Object Manipulation) to manually unlink it from the OS, making it invisible to both the Task Manager and telemetry.

See Also

Get Real-Time Help From an AI Voice Interview Assistant

How to Master DevOps Interviews Using an AI Copilot in 2026

The 6 Best AI Interview Software I've Used (2026 Update)